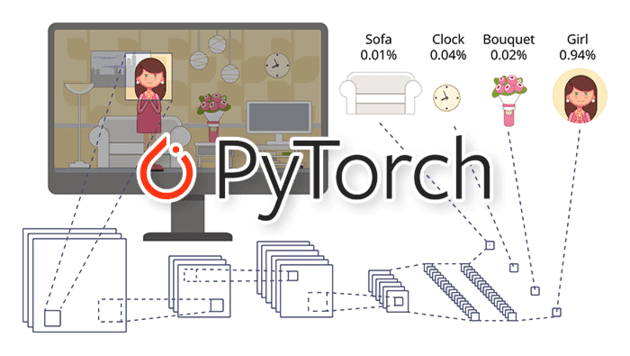

In this tutorial, you will learn how to perform object detection with pre-trained networks using PyTorch. With pre-trained object detection networks, you can detect and recognize 90 common object classes that your computer vision application will “see” in everyday life. Today’s…

PyTorch object detection with pre-trained networks
