In my last post, I mentioned that tiny, one-pixel shifts in images can kill the performance of your Restricted Boltzmann Machine + Classifier pipeline when using raw pixels as feature vectors.

Today I am going to continue that discussion.

And more importantly, I’m going to provide some Python and scikit-learn code that you can use to apply Restricted Boltzmann Machines to your own image classification problems.

OpenCV and Python versions:

This example will run on Python 2.7 and OpenCV 2.4.X/OpenCV 3.0+.

But before we jump into the code, let’s take a minute to talk about the MNIST dataset.

The MNIST Dataset

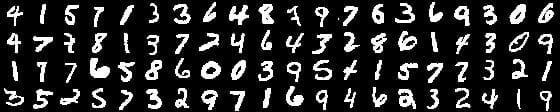

The MNIST dataset is one of the most well-studied datasets in the computer vision and machine learning literature. In many cases, it's a benchmark: a standard against which machine learning algorithms are ranked.

The goal of this dataset is to correctly classify the handwritten digits 0-9. Instead of using the entire dataset (which consists of 60,000 training images and 10,000 testing images), we are going to use a small sample (3,000 for training, 2,000 for testing). The data points are approximately uniformly distributed across the digits, so there is no substantial class label imbalance.

Each feature vector is 784-dim, corresponding to the 28 x 28 grayscale pixel intensities of the image. These grayscale pixel intensities are unsigned integers, falling into the range [0, 255].

All digits are placed on a black background, with the foreground being white and shades of gray.

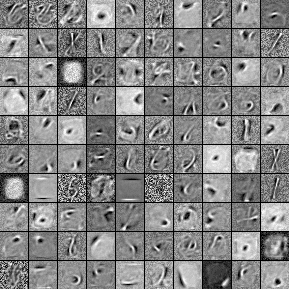

Given these raw pixel intensities, we are going to first train a Restricted Boltzmann Machine on our training data to learn an unsupervised feature representation of the digits.

Then, we are going to take these “learned” features and train a Logistic Regression classifier on top of them.

To evaluate our pipeline, we’ll take the testing data and run it through our classifier and report the accuracy.

However, as I mentioned in my previous post, simple one-pixel translations of the testing set images can cause accuracy to drop, even though these translations are so small they are barely (if at all) noticeable to the human eye.

To test this claim, we’ll generate a testing set four times larger than the original by translating each image one pixel up, down, left, and right.

Finally, we’ll pass this “nudged” dataset through our pipeline and report our results.

Sound good?

Let’s examine some code.

Applying an RBM to the MNIST Dataset Using Python

The first thing we’ll do is create a file, rbm.py, and start importing the packages we need:

```python
# import the necessary packages
from sklearn.model_selection import train_test_split
from sklearn.metrics import classification_report
from sklearn.linear_model import LogisticRegression
from sklearn.neural_network import BernoulliRBM
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline
import numpy as np
import argparse
import time
import cv2
```

We'll start by importing the train_test_split function from the model_selection sub-package of scikit-learn. (Older scikit-learn releases provided it in the cross_validation sub-package, and GridSearchCV in grid_search; both modules were removed in version 0.20.) The train_test_split function will make it dead simple for us to create our training and testing splits of the MNIST dataset.

Next, we’ll import the classification_report function from the metrics sub-package, which we’ll use to produce a nicely formatted accuracy report on (1) the overall system and (2) the accuracy of each individual class label.

On Line 4 we’ll import the classifier we’ll be using throughout this example — a LogisticRegression classifier.

I mentioned that we’ll be using a Restricted Boltzmann Machine to learn an unsupervised representation of our raw pixel values. This will be handled by the BernoulliRBM class in the neural_network sub-package of scikit-learn.

The BernoulliRBM implementation, as the name suggests, uses binary visible units and binary hidden units. Its time complexity is O(d²), assuming d ≈ n_features ≈ n_components, where n_components is the number of components (hidden units) to be learned.
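To make "learned features" a little more concrete: once trained, an RBM maps each visible vector v to hidden-unit activation probabilities p(h = 1 | v) = sigmoid(W v + c). As far as I know, this is what BernoulliRBM.transform returns (via its components_ and intercept_hidden_ attributes), but the sketch below uses random placeholder weights instead of trained ones, so read it only as an illustration of the formula and the shapes involved. The hidden_probabilities name is hypothetical.

```python
import numpy as np

def hidden_probabilities(V, W, c):
    """Mean-field hidden activations of a Bernoulli RBM (sketch).

    V: (n_samples, n_visible) data scaled to [0, 1]
    W: (n_hidden, n_visible) weight matrix (random placeholder here)
    c: (n_hidden,) hidden-unit biases
    """
    # logistic sigmoid of the affine projection, one row per sample
    return 1.0 / (1.0 + np.exp(-(V @ W.T + c)))

rng = np.random.default_rng(0)
V = rng.random((5, 784))                      # five fake flattened 28x28 digits
W = rng.normal(scale=0.01, size=(200, 784))   # untrained, illustrative weights
c = np.zeros(200)

H = hidden_probabilities(V, W, c)
print(H.shape)  # (5, 200): 784 raw pixels -> 200 learned features
```

The 200 hidden units here simply mirror the n_components value used later in this post; each row of H is the feature vector the Logistic Regression classifier would be trained on.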

In order to find optimal values of the coefficient C for Logistic Regression, along with the optimal learning rate, number of iterations, and number of components for our RBM, we'll need to perform a cross-validated grid search over the hyper-parameter space. The GridSearchCV class (which we import on Line 6) will take care of this search for us.

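Next, we'll need the Pipeline class, imported on Line 7. This class lets us chain a series of steps: each intermediate step is fit and then transforms the data it passes along (using the fit and transform methods of scikit-learn estimators), and the final step is the estimator that makes predictions.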

Our classification pipeline will consist of first training a BernoulliRBM to learn an unsupervised representation of the feature space, followed by training a LogisticRegression classifier on the learned features.
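The control flow of such a pipeline can be sketched in plain Python. The classes below (Doubler, MeanThreshold, MiniPipeline) are hypothetical toys, not part of scikit-learn; they only illustrate the idea that fitting runs fit_transform through every step but the last and then fits the final estimator, while predicting runs transform through the same steps before calling predict. The real Pipeline class also handles parameter routing (the step__param names used in the grid search below) and more.

```python
class Doubler:
    """Hypothetical transformer step: multiplies every feature by 2."""
    def fit(self, X, y=None):
        return self

    def transform(self, X):
        return [[2 * v for v in row] for row in X]

    def fit_transform(self, X, y=None):
        return self.fit(X, y).transform(X)


class MeanThreshold:
    """Hypothetical classifier step: predicts 1 if a row's sum exceeds
    the mean row sum seen during training."""
    def fit(self, X, y):
        sums = [sum(row) for row in X]
        self.threshold_ = sum(sums) / len(sums)
        return self

    def predict(self, X):
        return [int(sum(row) > self.threshold_) for row in X]


class MiniPipeline:
    """Sketch of Pipeline's control flow: transformers, then a final estimator."""
    def __init__(self, steps):
        self.steps = steps

    def fit(self, X, y):
        for _, step in self.steps[:-1]:
            X = step.fit_transform(X, y)   # fit each transformer, pass output on
        self.steps[-1][1].fit(X, y)        # final estimator sees transformed data
        return self

    def predict(self, X):
        for _, step in self.steps[:-1]:
            X = step.transform(X)          # transform only, no refitting
        return self.steps[-1][1].predict(X)


pipe = MiniPipeline([("double", Doubler()), ("clf", MeanThreshold())])
pipe.fit([[1, 1], [3, 3]], [0, 1])
print(pipe.predict([[0, 0], [5, 5]]))  # [0, 1]
```

In our script the "double" step is played by the BernoulliRBM and the "clf" step by LogisticRegression.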

Finally, we import NumPy for numerical processing, argparse to parse command line arguments, time to track the amount of time it takes for a given model to train, and cv2 for our OpenCV bindings.

But before we get too far, we first need to set up some functions to load and manipulate our MNIST dataset:

```python
def load_digits(datasetPath):
    # build the dataset and then split it into data
    # and labels
    X = np.genfromtxt(datasetPath, delimiter = ",", dtype = "uint8")
    y = X[:, 0]
    X = X[:, 1:]

    # return a tuple of the data and targets
    return (X, y)
```

The load_digits function, as the name suggests, loads our MNIST digit dataset off disk. The function takes a single parameter, datasetPath, which is the path to where the dataset CSV file resides.

We load the CSV file off disk using the np.genfromtxt function, grab the class labels (the first column of the CSV file) on Line 17, followed by the actual raw pixel feature vectors on Line 18. These feature vectors are 784-dim, corresponding to the flattened 28 x 28 grayscale digit image.
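A tiny in-memory CSV shows the row layout load_digits expects: the class label in the first column, followed by the pixel intensities. The two rows below are made up for illustration and carry only three pixels each (real rows carry 784); np.genfromtxt accepts a file-like object such as io.StringIO in place of a path.

```python
import io
import numpy as np

# hypothetical 2-row CSV: label first, then (only 3 here) pixel intensities
csv_text = "7,0,128,255\n2,255,64,0\n"

X = np.genfromtxt(io.StringIO(csv_text), delimiter=",", dtype="uint8")
y = X[:, 0]    # first column -> class labels
X = X[:, 1:]   # remaining columns -> raw pixel feature vectors

print(y)        # [7 2]
print(X.shape)  # (2, 3)
```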

Finally, we return a tuple of our feature vector matrix and class labels on Line 21.

Next up, we need a function to apply some pre-processing to our data.

The BernoulliRBM assumes that the columns of our feature vectors fall within the range [0, 1]. However, the MNIST dataset is represented as unsigned 8-bit integers, falling within the range [0, 255].

To scale the columns into the range [0, 1], all we need to do is define a scale function:

```python
def scale(X, eps = 0.001):
    # scale the data points s.t the columns of the feature space
    # (i.e the predictors) are within the range [0, 1]
    return (X - np.min(X, axis = 0)) / (np.max(X, axis = 0) + eps)
```

The scale function takes two parameters, our data matrix X and an epsilon value used to prevent division by zero errors.

This function is fairly self-explanatory. For each of the 784 columns in the matrix, we subtract the column minimum from each value and divide by the column maximum (plus eps). Because pixel intensities are non-negative, this ensures that the values of each column fall into the range [0, 1].
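Here is scale applied to a small, made-up 3 x 2 matrix. One detail worth noticing: it divides by the column maximum rather than by (max - min), so it only matches true min-max scaling when a column's minimum is 0. With MNIST's black background that should usually hold, but it matters if you reuse the function on other data.

```python
import numpy as np

def scale(X, eps = 0.001):
    # same function as above: subtract each column's minimum,
    # then divide by the column maximum (eps avoids division by zero)
    return (X - np.min(X, axis = 0)) / (np.max(X, axis = 0) + eps)

X = np.array([[0, 10],
              [128, 20],
              [255, 30]], dtype="float32")

S = scale(X)
print(S.min(), S.max())  # both within [0, 1]

# column 0 starts at 0, so it behaves like min-max scaling (up to eps);
# column 1 starts at 10, so its largest value maps to about 0.67, not 1.0
print(S[:, 1])
```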

Now we need one last function: a method to generate a “nudged” dataset four times larger than the original by translating each image one pixel up, down, left, and right.

To handle this nudging of the dataset, we’ll create the nudge function:

```python
def nudge(X, y):
    # initialize the translations to shift the image one pixel
    # up, down, left, and right, then initialize the new data
    # matrix and targets
    translations = [(0, -1), (0, 1), (-1, 0), (1, 0)]
    data = []
    target = []

    # loop over each of the digits
    for (image, label) in zip(X, y):
        # reshape the image from a feature vector of 784 raw
        # pixel intensities to a 28x28 'image'
        image = image.reshape(28, 28)

        # loop over the translations
        for (tX, tY) in translations:
            # translate the image
            M = np.float32([[1, 0, tX], [0, 1, tY]])
            trans = cv2.warpAffine(image, M, (28, 28))

            # update the list of data and target
            data.append(trans.flatten())
            target.append(label)

    # return a tuple of the data matrix and targets
    return (np.array(data), np.array(target))
```

The nudge function takes two parameters: our data matrix X and our class labels y.

We start by initializing a list of our (x, y) translations, followed by our new data matrix and target labels on Lines 32-34.

Then, we start looping over each of the images and class labels on Line 37.

As I mentioned, each image is represented as a 784-dim feature vector, corresponding to the 28 x 28 digit image.

However, to use the cv2.warpAffine function to translate our image, we first need to reshape the 784-dim feature vector into a two-dimensional array of shape (28, 28); this is handled on Line 40.

Next up, we start looping over our translations on Line 43.

We construct our actual translation matrix M on Line 45 and then apply the translation by calling the cv2.warpAffine function on Line 46.

We are then able to update our new, nudged data matrix on Line 48 by flattening the 28 x 28 image back into a 784-dim feature vector.

Our class label target list is then updated on Line 50.

Finally, we return a tuple of the new data matrix and class labels on Line 53.
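If you want to sanity-check the nudging without OpenCV, an integer one-pixel shift can be reproduced with NumPy slicing. The shift_image helper below is hypothetical and not part of rbm.py; it assumes cv2.warpAffine's default behavior of filling uncovered pixels with 0, under which integer offsets should give the same result. As with the matrix M = [[1, 0, tX], [0, 1, tY]], a positive tX moves content right and a positive tY moves it down.

```python
import numpy as np

def shift_image(image, tX, tY):
    """Translate a 2D image by integer offsets (tX, tY), zero-filling
    pixels that are shifted in from outside the frame."""
    h, w = image.shape
    out = np.zeros_like(image)
    # destination and source windows for the overlapping region
    dst_y = slice(max(tY, 0), h + min(tY, 0))
    src_y = slice(max(-tY, 0), h + min(-tY, 0))
    dst_x = slice(max(tX, 0), w + min(tX, 0))
    src_x = slice(max(-tX, 0), w + min(-tX, 0))
    out[dst_y, dst_x] = image[src_y, src_x]
    return out

# a flattened 784-dim "digit" with a single bright pixel at row 10, column 10
vec = np.zeros(784, dtype="float32")
vec[10 * 28 + 10] = 1.0

image = vec.reshape(28, 28)  # 784-dim vector -> 28 x 28 image
for (tX, tY) in [(0, -1), (0, 1), (-1, 0), (1, 0)]:  # up, down, left, right
    moved = shift_image(image, tX, tY)
    print((tX, tY), np.argwhere(moved == 1.0)[0])  # new (row, col) of the pixel

nudged = shift_image(image, 1, 0).flatten()  # back to a 784-dim vector
```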

These three helper functions, while quite simple in nature, are critical to setting up our experiment.

Now we can finally start putting the pieces together:

```python
# construct the argument parser and parse the arguments
ap = argparse.ArgumentParser()
ap.add_argument("-d", "--dataset", required = True,
    help = "path to the dataset file")
ap.add_argument("-t", "--test", required = True, type = float,
    help = "size of test split")
ap.add_argument("-s", "--search", type = int, default = 0,
    help = "whether or not a grid search should be performed")
args = vars(ap.parse_args())
```

Lines 56-63 handle parsing our command line arguments. Our rbm.py script accepts three arguments: --dataset, the path to our MNIST .csv file on disk; --test, the fraction of the data to use for the testing split (e.g., 0.4), with the rest used for training; and --search, an integer that determines whether a grid search should be performed to tune hyper-parameters. Only --dataset and --test are required; --search defaults to 0.
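To check how these arguments are interpreted without touching the real command line, you can hand parse_args an explicit list of strings. The argument list below is just an example invocation.

```python
import argparse

# same argument definitions as in rbm.py
ap = argparse.ArgumentParser()
ap.add_argument("-d", "--dataset", required = True,
    help = "path to the dataset file")
ap.add_argument("-t", "--test", required = True, type = float,
    help = "size of test split")
ap.add_argument("-s", "--search", type = int, default = 0,
    help = "whether or not a grid search should be performed")

# parse an explicit argument list instead of sys.argv;
# --search is omitted, so its default value is used
args = vars(ap.parse_args(["--dataset", "data/digits.csv", "--test", "0.4"]))
print(args["test"], args["search"])  # 0.4 0
```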

A value of 1 for --search indicates that a grid search should be performed; a value of 0 indicates that the grid search has already been performed and the model parameters for both the BernoulliRBM and LogisticRegression models have already been manually set.

```python
# load the digits dataset, convert the data points from integers
# to floats, and then scale the data s.t. the predictors (columns)
# are within the range [0, 1] -- this is a requirement of the
# Bernoulli RBM
(X, y) = load_digits(args["dataset"])
X = X.astype("float32")
X = scale(X)

# construct the training/testing split
(trainX, testX, trainY, testY) = train_test_split(X, y,
    test_size = args["test"], random_state = 42)
```

Now that our command line arguments have been parsed, we can load our dataset off disk on Line 69. We then convert it to the floating point data type on Line 70 and scale the feature vector columns to fall into the range [0, 1] using our scale function on Line 71.

In order to evaluate our system we need two sets of data: a training set and a testing set. Our pipeline will be trained using the training data, and then evaluated using the testing set to ensure our accuracy reports are not biased.

To generate our training and testing splits, we call the train_test_split function on Line 74, which shuffles the data and splits it for us; fixing random_state = 42 makes the split reproducible across runs.
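For this dataset the arithmetic is simple: 5,000 rows with --test 0.4 yields 2,000 testing and 3,000 training samples, matching the sample sizes described earlier. The simple_split function below is a hypothetical NumPy-only sketch of what a shuffled split does; it is not scikit-learn's implementation (which, among other things, has its own rounding rules and supports stratification).

```python
import numpy as np

def simple_split(X, y, test_size, seed=42):
    """Hypothetical shuffled train/test split (sketch, not sklearn's code)."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(len(X))                 # shuffle row indices
    n_test = int(round(test_size * len(X)))       # nearest-integer test count
    test_idx, train_idx = idx[:n_test], idx[n_test:]
    return X[train_idx], X[test_idx], y[train_idx], y[test_idx]

X = np.zeros((5000, 784), dtype="float32")   # stand-in for the scaled digits
y = np.arange(5000) % 10                     # stand-in labels 0-9
(trainX, testX, trainY, testY) = simple_split(X, y, test_size=0.4)
print(len(trainX), len(testX))  # 3000 2000
```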

```python
# check to see if a grid search should be done
if args["search"] == 1:
    # perform a grid search on the 'C' parameter of Logistic
    # Regression
    print("SEARCHING LOGISTIC REGRESSION")
    params = {"C": [1.0, 10.0, 100.0]}
    start = time.time()
    gs = GridSearchCV(LogisticRegression(), params, n_jobs = -1, verbose = 1)
    gs.fit(trainX, trainY)

    # print diagnostic information to the user and grab the
    # best model
    print("done in %0.3fs" % (time.time() - start))
    print("best score: %0.3f" % (gs.best_score_))
    print("LOGISTIC REGRESSION PARAMETERS")
    bestParams = gs.best_estimator_.get_params()

    # loop over the parameters and print each of them out
    # so they can be manually set
    for p in sorted(params.keys()):
        print("\t %s: %f" % (p, bestParams[p]))
```

A check is made on Line 78 to see if a grid search should be performed to tune the hyper-parameters of our pipeline.

If a grid search is to be performed, we first search over the coefficient C of the Logistic Regression classifier on Lines 81-85. Since we'll evaluate both a Logistic Regression classifier on the raw pixel data and a Restricted Boltzmann Machine + Logistic Regression pipeline, we need to search the C space independently for each.

Lines 89-97 then print out the optimal parameter values for our standard Logistic Regression classifier.

Now we can move on to our pipeline: a BernoulliRBM and a LogisticRegression classifier used together.

```python
    # initialize the RBM + Logistic Regression pipeline
    rbm = BernoulliRBM()
    logistic = LogisticRegression()
    classifier = Pipeline([("rbm", rbm), ("logistic", logistic)])

    # perform a grid search on the learning rate, number of
    # iterations, and number of components on the RBM and
    # C for Logistic Regression
    print("SEARCHING RBM + LOGISTIC REGRESSION")
    params = {
        "rbm__learning_rate": [0.1, 0.01, 0.001],
        "rbm__n_iter": [20, 40, 80],
        "rbm__n_components": [50, 100, 200],
        "logistic__C": [1.0, 10.0, 100.0]}

    # perform a grid search over the parameters
    start = time.time()
    gs = GridSearchCV(classifier, params, n_jobs = -1, verbose = 1)
    gs.fit(trainX, trainY)

    # print diagnostic information to the user and grab the
    # best model
    print("\ndone in %0.3fs" % (time.time() - start))
    print("best score: %0.3f" % (gs.best_score_))
    print("RBM + LOGISTIC REGRESSION PARAMETERS")
    bestParams = gs.best_estimator_.get_params()

    # loop over the parameters and print each of them out
    # so they can be manually set
    for p in sorted(params.keys()):
        print("\t %s: %f" % (p, bestParams[p]))

    # show a reminder message
    print("\nIMPORTANT")
    print("Now that your parameters have been searched, manually set")
    print("them and re-run this script with --search 0")
```

We define our pipeline on Lines 100-102, consisting of our Restricted Boltzmann Machine and a Logistic Regression classifier.

However, now we have more parameters to search over than just the coefficient C of the Logistic Regression classifier. Now we also have to search over the number of iterations, number of components (i.e. the size of the resulting feature space), and the learning rate of the RBM. We define this search space on Lines 108-112.

We start the grid search on Lines 115-117.

The optimal parameters for the pipeline are then displayed on Lines 121-129.

To determine the optimal values for our pipeline, execute the following command:

$ python rbm.py --dataset data/digits.csv --test 0.4 --search 1

You might want to make a cup of coffee or go for a nice long walk while the parameter grid is searched. For each combination of parameters, a model has to be trained and cross-validated, so this is definitely not a fast operation. But it's the price you pay for optimal parameters, which are crucial when using a Restricted Boltzmann Machine.
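To put "not a fast operation" in numbers: the RBM + Logistic Regression grid above has 3 x 3 x 3 x 3 = 81 parameter combinations, and each one is fit once per cross-validation fold, plus one final refit of the best model. The fold count depends on your scikit-learn version (GridSearchCV defaulted to 3-fold CV in older releases and to 5-fold from 0.22 onward), so the sketch below takes it as a parameter. It only enumerates the grid with itertools.product; total_fits is a hypothetical helper and nothing is trained.

```python
from itertools import product

# the same search space as the RBM + Logistic Regression grid above
params = {
    "rbm__learning_rate": [0.1, 0.01, 0.001],
    "rbm__n_iter": [20, 40, 80],
    "rbm__n_components": [50, 100, 200],
    "logistic__C": [1.0, 10.0, 100.0]}

# every combination the grid search will try
keys = sorted(params)
combos = [dict(zip(keys, values)) for values in product(*(params[k] for k in keys))]

def total_fits(n_combos, n_folds):
    # one fit per (combination, fold), plus one refit of the best model
    return n_combos * n_folds + 1

print(len(combos))                 # 81
print(total_fits(len(combos), 3))  # 244 with 3-fold CV
print(total_fits(len(combos), 5))  # 406 with 5-fold CV
```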

After a long walk, you should see that the following optimal values have been selected:

```
rbm__learning_rate: 0.01
rbm__n_iter: 40
rbm__n_components: 200
logistic__C: 1.0
```

Awesome. Our hyper-parameters have been tuned.

Let’s set these parameters and evaluate our classification pipeline:

```python
# otherwise, use the manually specified parameters
else:
    # evaluate using Logistic Regression and only the raw pixel
    # features (these parameters were cross-validated)
    logistic = LogisticRegression(C = 1.0)
    logistic.fit(trainX, trainY)
    print("LOGISTIC REGRESSION ON ORIGINAL DATASET")
    print(classification_report(testY, logistic.predict(testX)))

    # initialize the RBM + Logistic Regression classifier with
    # the cross-validated parameters
    rbm = BernoulliRBM(n_components = 200, n_iter = 40,
        learning_rate = 0.01, verbose = True)
    logistic = LogisticRegression(C = 1.0)

    # train the classifier and show an evaluation report
    classifier = Pipeline([("rbm", rbm), ("logistic", logistic)])
    classifier.fit(trainX, trainY)
    print("RBM + LOGISTIC REGRESSION ON ORIGINAL DATASET")
    print(classification_report(testY, classifier.predict(testX)))

    # nudge the dataset and then re-evaluate
    print("RBM + LOGISTIC REGRESSION ON NUDGED DATASET")
    (testX, testY) = nudge(testX, testY)
    print(classification_report(testY, classifier.predict(testX)))
```

To obtain a baseline accuracy, we’ll train a standard Logistic Regression classifier on the raw pixel feature vectors (no unsupervised learning) on Lines 140 and 141. The accuracy of the baseline is then printed out on Line 143 using the classification_report function.

We then construct our BernoulliRBM + LogisticRegression classifier pipeline and evaluate it on our testing data on Lines 147-155.

But what happens when we nudge our testing set by translating each image one pixel up, down, left, and right?

To find out, we nudge our dataset on Line 162 and then re-evaluate it on Line 163.

To evaluate our system, issue the following command:

$ python rbm.py --dataset data/digits.csv --test 0.4

After a few minutes, we should have some results to look at.

Results

The first set of results is our Logistic Regression classifier trained strictly on the raw pixel feature vectors:

```
LOGISTIC REGRESSION ON ORIGINAL DATASET
             precision    recall  f1-score   support

          0       0.94      0.96      0.95       196
          1       0.94      0.97      0.95       245
          2       0.89      0.90      0.90       197
          3       0.88      0.84      0.86       202
          4       0.90      0.93      0.91       193
          5       0.85      0.75      0.80       183
          6       0.91      0.93      0.92       194
          7       0.90      0.90      0.90       212
          8       0.85      0.83      0.84       186
          9       0.81      0.84      0.83       192

avg / total       0.89      0.89      0.89      2000
```

Using this approach, we were able to achieve 89% accuracy. Not bad for using just the pixel intensities as our feature vectors.

But look what happens when we train our Restricted Boltzmann Machine + Logistic Regression pipeline:

```
RBM + LOGISTIC REGRESSION ON ORIGINAL DATASET
             precision    recall  f1-score   support

          0       0.95      0.98      0.97       196
          1       0.97      0.96      0.97       245
          2       0.92      0.95      0.94       197
          3       0.93      0.91      0.92       202
          4       0.92      0.95      0.94       193
          5       0.95      0.86      0.90       183
          6       0.95      0.95      0.95       194
          7       0.93      0.91      0.92       212
          8       0.91      0.90      0.91       186
          9       0.86      0.90      0.88       192

avg / total       0.93      0.93      0.93      2000
```

Our accuracy increases from 89% to 93%. That's definitely a significant jump!

But now the problem starts…

What happens when we nudge the dataset, translating each image one pixel up, down, left, and right?

I mean, these shifts are so small they would be barely (if at all) recognizable to the human eye.

Surely that can’t be a problem, can it?

Well, it turns out, it is:

```
RBM + LOGISTIC REGRESSION ON NUDGED DATASET
             precision    recall  f1-score   support

          0       0.94      0.93      0.94       784
          1       0.96      0.89      0.93       980
          2       0.87      0.91      0.89       788
          3       0.85      0.85      0.85       808
          4       0.88      0.92      0.90       772
          5       0.86      0.80      0.83       732
          6       0.90      0.91      0.90       776
          7       0.86      0.90      0.88       848
          8       0.80      0.85      0.82       744
          9       0.84      0.79      0.81       768

avg / total       0.88      0.88      0.88      8000
```

After nudging the dataset, the RBM + Logistic Regression pipeline drops to 88% accuracy: 5 percentage points below its score on the original testing set and 1 point below the baseline Logistic Regression classifier (which was evaluated on the original, un-nudged testing set).

So now you can see the issue of using raw pixel intensities as feature vectors. Even tiny shifts in the image can cause accuracy to drop.

But don’t worry, there are ways to fix this issue.

How Do We Fix the Translation Problem?

Researchers working with neural networks, deep nets, and convolutional networks commonly address the shifting and translation problem in two ways.

The first way is to generate extra data at training time.

In this post, we nudged our dataset after training to see its effect on classification accuracy. However, we can also nudge our dataset before training in an attempt to make our model more robust.
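A minimal sketch of this first idea, assuming trainX and trainY are NumPy arrays of flattened 28 x 28 images as in this post: keep every original training image and append its four one-pixel translations, giving a training set five times the original size. The augment_with_shifts name is hypothetical, and using np.roll is my own choice here; because np.roll wraps pixels around the border, the function zeroes the wrapped row or column to mimic the black background.

```python
import numpy as np

def augment_with_shifts(X, y, size=28):
    """Hypothetical helper: return the original images plus copies shifted
    one pixel up, down, left, and right (5x the data), zero-filling the
    row or column that np.roll wraps around."""
    images = X.reshape(-1, size, size)
    augmented = [images]
    labels = [y]
    for (dy, dx) in [(-1, 0), (1, 0), (0, -1), (0, 1)]:  # up, down, left, right
        shifted = np.roll(images, shift=(dy, dx), axis=(1, 2))
        if dy == -1:
            shifted[:, -1, :] = 0   # bottom row now holds the wrapped top row
        elif dy == 1:
            shifted[:, 0, :] = 0    # top row now holds the wrapped bottom row
        elif dx == -1:
            shifted[:, :, -1] = 0   # right column holds the wrapped left column
        elif dx == 1:
            shifted[:, :, 0] = 0    # left column holds the wrapped right column
        augmented.append(shifted)
        labels.append(y)
    X_aug = np.concatenate(augmented).reshape(-1, size * size)
    return (X_aug, np.concatenate(labels))

# toy usage: 10 random "digits" become 50 training examples
rng = np.random.default_rng(0)
trainX = rng.random((10, 784)).astype("float32")
trainY = np.arange(10)
(augX, augY) = augment_with_shifts(trainX, trainY)
print(augX.shape, augY.shape)  # (50, 784) (50,)
```

You would then fit the pipeline on augX and augY instead of trainX and trainY. Whether this recovers the accuracy lost on the nudged testing set is something to measure rather than assume.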

The second method is to randomly select regions from our training images, rather than using them in their entirety.

For example, instead of using the entire 28 x 28 image, we could randomly sample a 24 x 24 region from it. Repeated enough times over enough training images, this random sampling can mitigate the translation issue.
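The second idea can be sketched the same way. The hypothetical random_crop helper below returns a randomly positioned 24 x 24 window from a 28 x 28 image; sampling several crops per training image exposes the model to digits at slightly different offsets. One assumption to flag: every vector fed to the RBM must have the same length, so at test time you would need a fixed crop instead of a random one. The centered window (the hypothetical center_crop) is one common choice.

```python
import numpy as np

def random_crop(image, crop=24, rng=None):
    """Return a randomly positioned crop x crop window from a 2D image."""
    rng = np.random.default_rng() if rng is None else rng
    h, w = image.shape
    y = rng.integers(0, h - crop + 1)   # top-left row in [0, h - crop]
    x = rng.integers(0, w - crop + 1)   # top-left column in [0, w - crop]
    return image[y:y + crop, x:x + crop]

def center_crop(image, crop=24):
    """Deterministic centered crop, e.g. for test-time inputs."""
    h, w = image.shape
    y, x = (h - crop) // 2, (w - crop) // 2
    return image[y:y + crop, x:x + crop]

rng = np.random.default_rng(42)
digit = rng.random((28, 28)).astype("float32")   # stand-in for a real digit

# several random 24 x 24 crops per image, flattened to 576-dim vectors
crops = np.array([random_crop(digit, rng=rng).flatten() for _ in range(5)])
print(crops.shape)                         # (5, 576)
print(center_crop(digit).flatten().shape)  # (576,)
```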


Summary

In this blog post we’ve demonstrated that even small, one pixel translations in images that are nearly indistinguishable to the human eye are able to hurt the performance of our classification pipeline.

We see this drop in accuracy because we are using raw pixel intensities as feature vectors.

Furthermore, translations are not the only deformations that can reduce accuracy when using raw pixel intensities as features. Rotations, other geometric transformations, and even noise introduced when capturing the image can hurt model performance.

In order to handle these situations we can (1) generate additional training data in an attempt to make our model more robust and/or (2) sample randomly from the image rather than using it in its entirety.

In practice, neural nets and deep learning approaches are substantially more robust than the simple pipeline in this experiment. By stacking layers and learning a set of convolutional kernels, deep nets are able to handle many of these issues.

Still, this experiment is important if you are just starting out and using raw pixel intensities as your feature vectors.

Either be prepared to spend a lot of time pre-processing your data, or be sure you know how to utilize classification models to handle situations where your data may not be as “clean” and nicely pre-processed as you want it.
