Today I’ve got a little trick for you, straight out of the PyImageSearch vault.

This trick is really awesome — and in many cases, it completely alleviates the need to tune the parameters to your Canny edge detectors. But before we get into that, let’s discuss the Canny edge detector a bit.

OpenCV and Python versions:

This example will run on Python 2.7/Python 3.4+ and OpenCV 2.4.X/OpenCV 3.0+.

The Canny Edge Detector

In previous posts we’ve used the Canny edge detector a fair amount of times. We’ve used it to build a kick-ass mobile document scanner and we’ve used to find a Game Boy screen in a photo, just two name a couple instances.

The Canny edge detector was developed way back in 1986 by John F. Canny. And it’s still widely used today was one of the default edge detectors in image processing.

The Canny edge detection algorithm can be broken down into 5 steps:

- Step 1: Smooth the image using a Gaussian filter to remove high frequency noise.

- Step 2: Compute the gradient intensity representations of the image.

- Step 3: Apply non-maximum suppression to remove “false” responses to to edge detection.

- Step 4: Apply thresholding using a lower and upper boundary on the gradient values.

- Step 5: Track edges using hysteresis by suppressing weak edges that are not connected to strong edges.

If you’re familiar with the OpenCV implementation of the Canny edge detector you’ll know that the function signature looks like this:

cv2.canny(image, lower, upper)

Where image is the image that we want to detect edges in; and lower and upper are our integer thresholds for Step 4, respectively.

The problem becomes determining these lower and upper thresholds.

What is the optimal value for the thresholds?

This question is especially important when you are processing multiple images with different contents captured under varying lighting conditions.

In the remainder of this blog post I’ll show you a little trick that relies on basic statistics that you can apply to remove the manual tuning of the thresholds to Canny edge detection.

This trick will save you time parameter tuning — and you’ll still get a nice Canny edge map after applying the function.

To learn more about this zero-parameter, automatic Canny edge detection trick, read on.

Zero-parameter, automatic Canny edge detection with Python and OpenCV

Let’s go ahead and get started. Open up a new file in your favorite code editor, name it auto_canny.py , and let’s get started:

# import the necessary packages import numpy as np import argparse import glob import cv2 def auto_canny(image, sigma=0.33): # compute the median of the single channel pixel intensities v = np.median(image) # apply automatic Canny edge detection using the computed median lower = int(max(0, (1.0 - sigma) * v)) upper = int(min(255, (1.0 + sigma) * v)) edged = cv2.Canny(image, lower, upper) # return the edged image return edged

The first thing we’ll do is import our necessary packages. We’ll use NumPy to for numerical operations, argparse to parse command line arguments, glob to grab the paths to our images from disk, and cv2 for our OpenCV bindings.

We then define auto_canny , our automatic Canny edge detection function on Line 7. This function requires a single argument, image , which is the single-channel image that we want to detect images in. An optional argument, sigma can be used to vary the percentage thresholds that are determined based on simple statistics.

Line 9 handles computing the median of the pixel intensities in the image.

We then take this median value and construct two thresholds, lower and upper on Lines 12 and 13. These thresholds are constructed based on the +/- percentages controlled by the sigma argument.

A lower value of sigma indicates a tighter threshold, whereas a larger value of sigma gives a wider threshold. In general, you will not have to change this sigma value often. Simply select a single, default sigma value and apply it to your entire dataset of images.

Note: In practice, sigma=0.33 tends to give good results on most of the dataset I’m working with, so I choose to supply 33% as the default sigma value.

Now that we have our lower and upper thresholds, we then apply the Canny edge detector on Line 14 and return it to the calling function on Line 17.

Let’s keep moving with this example and see how we can apply it to our images:

# construct the argument parse and parse the arguments

ap = argparse.ArgumentParser()

ap.add_argument("-i", "--images", required=True,

help="path to input dataset of images")

args = vars(ap.parse_args())

# loop over the images

for imagePath in glob.glob(args["images"] + "/*.jpg"):

# load the image, convert it to grayscale, and blur it slightly

image = cv2.imread(imagePath)

gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)

blurred = cv2.GaussianBlur(gray, (3, 3), 0)

# apply Canny edge detection using a wide threshold, tight

# threshold, and automatically determined threshold

wide = cv2.Canny(blurred, 10, 200)

tight = cv2.Canny(blurred, 225, 250)

auto = auto_canny(blurred)

# show the images

cv2.imshow("Original", image)

cv2.imshow("Edges", np.hstack([wide, tight, auto]))

cv2.waitKey(0)

We parse our command line arguments on Lines 20-23. We only need a single switch here, --images , which is the path to the directory containing the images we want to process.

We then loop over the images in our directory on Line 26, load the image from disk on Line 28, convert the image to grayscale on Line 29, and apply a Gaussian blur with a 3 x 3 kernel to help remove high frequency noise on Line 30.

Lines 34-36 then apply Canny edge detection using three methods:

- A wide threshold.

- A tight threshold.

- A threshold determined automatically using our

auto_cannyfunction.

Finally, our resulting images are displayed to us on Lines 39-41.

The auto_canny function in action

Alright, enough talk about code. Let’s see our auto_canny function in action.

Open up a terminal and execute the following command:

$ python auto_canny.py --images images

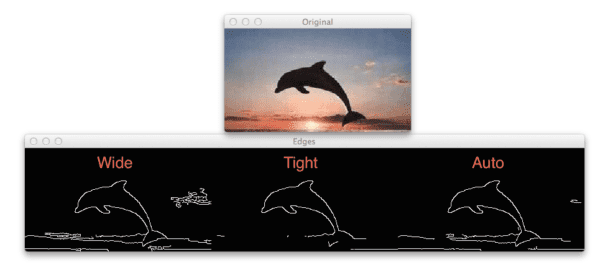

You should then see the following output:

As you can see, the wide Canny edge threshold not only detects the dolphin, but also many of the clouds in the image. The tight threshold does not detect the clouds, but misses out on the dolphin tail. Finally, the automatic method is able to find all of the dolphin, while removing many of the cloud edges.

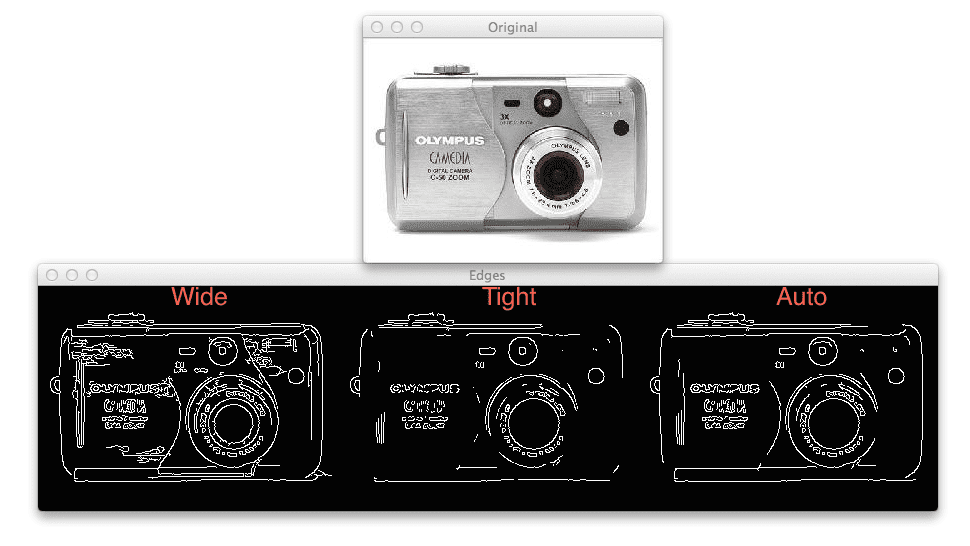

Let’s try another image:

The wide Canny threshold on the left includes high frequency noise based on the reflection of the light on the brushed metal of the camera, whereas the tight threshold in the center misses out on many of the structural edges on the camera. Finally, the automatic method on the right is able to find many of the structural edges while not including the high frequency noise.

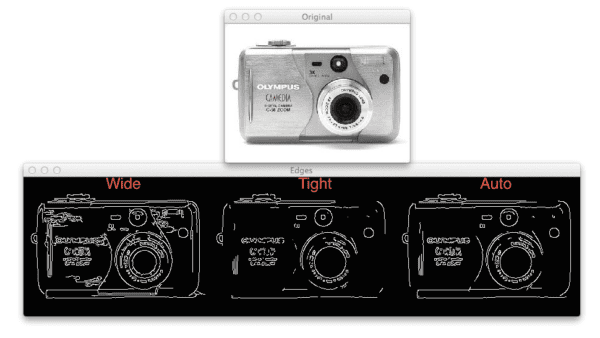

One more example:

The results here are fairly dramatic. While both the wide (left) and the automatic (right) Canny edge detection methods perform similarly, the tight threshold (center) misses out on almost all of the structural edges of the cup.

Given the examples above, it’s clear that the automatic, zero-parameter version of the Canny edge detection obtains the best results with the least effort.

Note: The three example images were taken from the CALTECH-101 dataset.

What's next? We recommend PyImageSearch University.

86+ total classes • 115+ hours hours of on-demand code walkthrough videos • Last updated: May 2026

★★★★★ 4.84 (128 Ratings) • 16,000+ Students Enrolled

I strongly believe that if you had the right teacher you could master computer vision and deep learning.

Do you think learning computer vision and deep learning has to be time-consuming, overwhelming, and complicated? Or has to involve complex mathematics and equations? Or requires a degree in computer science?

That’s not the case.

All you need to master computer vision and deep learning is for someone to explain things to you in simple, intuitive terms. And that’s exactly what I do. My mission is to change education and how complex Artificial Intelligence topics are taught.

If you're serious about learning computer vision, your next stop should be PyImageSearch University, the most comprehensive computer vision, deep learning, and OpenCV course online today. Here you’ll learn how to successfully and confidently apply computer vision to your work, research, and projects. Join me in computer vision mastery.

Inside PyImageSearch University you'll find:

- ✓ 86+ courses on essential computer vision, deep learning, and OpenCV topics

- ✓ 86 Certificates of Completion

- ✓ 115+ hours hours of on-demand video

- ✓ Brand new courses released regularly, ensuring you can keep up with state-of-the-art techniques

- ✓ Pre-configured Jupyter Notebooks in Google Colab

- ✓ Run all code examples in your web browser — works on Windows, macOS, and Linux (no dev environment configuration required!)

- ✓ Access to centralized code repos for all 540+ tutorials on PyImageSearch

- ✓ Easy one-click downloads for code, datasets, pre-trained models, etc.

- ✓ Access on mobile, laptop, desktop, etc.

Summary

In this blog post I showed you a simple trick to (reliably) automatically detect edges in images using the Canny edge detector, without providing thresholds to the function.

This trick simply takes the median of the image, and then constructs upper and lower thresholds based on a percentage of this median. In practice, sigma=0.33 tends to obtain good results.

In general, you’ll find that the automatic, zero-parameter version of the Canny edge detection is able to obtain fairly decent results with little-to-no effort on your part.

With this in mind, why not download the source code to this post and give it a shot on your own images? I would be curious to hear about your results!

Download the Source Code and FREE 17-page Resource Guide

Enter your email address below to get a .zip of the code and a FREE 17-page Resource Guide on Computer Vision, OpenCV, and Deep Learning. Inside you'll find my hand-picked tutorials, books, courses, and libraries to help you master CV and DL!