Today’s blog post is Part II in our two-part series on OCR’ing bank check account and routing numbers using OpenCV, Python, and computer vision techniques.

Last week we learned how to extract MICR E-13B digits and symbols from input images. Today we are going to take this knowledge and use it to actually recognize each of the characters, thereby allowing us to OCR the actual bank check and routing number.

To learn how to OCR bank checks with Python and OpenCV, just keep reading.

Bank check OCR with OpenCV and Python

In Part I of this series we learned how to localize each of the fourteen MICR E-13B font characters used on bank checks.

Ten of these characters are digits, which form our actual account number and routing number. The remaining four characters are special symbols used by the bank to mark separations between routing numbers, account numbers, and any other information encoded on the check.

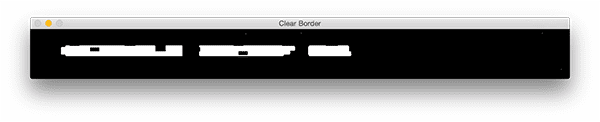

The image below displays all fourteen characters that we will be OCR’ing in this tutorial:

The list below displays the four symbols:

- ⑆ Transit (delimit bank branch routing transit #)

- ⑈ On-us (delimit customer account number)

- ⑇ Amount (delimit transaction amount)

- ⑉ Dash (delimit parts of numbers, such as routing or account)

Since OpenCV does not allow us to draw Unicode characters on images, we’ll use the following ASCII character mappings in our code to indicate the Transit, Amount, On-us, and Dash:

- T = ⑆

- U = ⑈

- A = ⑇

- D = ⑉
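To make the mapping concrete, here is a minimal sketch of how the ASCII stand-ins could be translated back to the MICR symbols once we have OCR'd text in hand. The `MICR_SYMBOLS` dictionary and the `to_unicode` helper are hypothetical names used only for illustration; they are not part of the script we build in this post.

```python
# Hypothetical lookup table: ASCII stand-in -> Unicode MICR symbol
MICR_SYMBOLS = {
    "T": "⑆",  # Transit
    "U": "⑈",  # On-us
    "A": "⑇",  # Amount
    "D": "⑉",  # Dash
}


def to_unicode(text):
    # swap each ASCII stand-in for its MICR symbol and leave digits
    # (and anything else) untouched
    return "".join(MICR_SYMBOLS.get(ch, ch) for ch in text)


print(to_unicode("T123456789T"))
```

This is handy for logging or storing results, since the terminal and text files have no trouble with Unicode even though OpenCV’s drawing functions do.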

Now that we are able to actually localize the digits and symbols, we can apply template matching in a similar manner as we did in our credit card OCR post in order to perform OCR.

Reading account and routing numbers using OpenCV

In order to build our bank check OCR system, we’ll be reusing some of the code from last week. If you haven’t already read Part I of this series, take the time now to go back and read through it. The explanation of the extract_digits_and_symbols function is especially important for localizing the bank check characters.

With that said, let’s go ahead and open a new file, name it bank_check_ocr.py , and insert the following code:

# import the necessary packages
from skimage.segmentation import clear_border
from imutils import contours
import numpy as np
import argparse
import imutils
import cv2

Lines 2-7 handle our standard imports. If you’re familiar with this blog, these imports should be nothing new. If you don’t have any of these packages on your system, you can perform the following to get them installed:

- Install OpenCV using the relevant instructions for your system (while ensuring you’re following any Python virtualenv commands).

- Activate your Python virtualenv and install packages:

$ workon cv
$ pip install numpy
$ pip install scikit-image
$ pip install imutils

Note: for any of the pip commands you may add the --upgrade flag to update a package that is already installed.

Now that we’ve got our dependencies installed, let’s quickly review the function covered last week in Part I of this series:

def extract_digits_and_symbols(image, charCnts, minW=5, minH=15):
    # grab the internal Python iterator for the list of character
    # contours, then initialize the character ROI and location
    # lists, respectively
    charIter = charCnts.__iter__()
    rois = []
    locs = []

    # keep looping over the character contours until we reach the end
    # of the list
    while True:
        try:
            # grab the next character contour from the list, compute
            # its bounding box, and initialize the ROI
            c = next(charIter)
            (cX, cY, cW, cH) = cv2.boundingRect(c)
            roi = None

            # check to see if the width and height are sufficiently
            # large, indicating that we have found a digit
            if cW >= minW and cH >= minH:
                # extract the ROI
                roi = image[cY:cY + cH, cX:cX + cW]
                rois.append(roi)
                locs.append((cX, cY, cX + cW, cY + cH))

This function has one goal — to find and localize digits and symbols based on contours. This is accomplished via iterating through the contours list, charCnts , and keeping track of the regions of interest and ROI locations (rois and locs ) in two lists that are returned at the end of the function.

On Line 29 we check to see if the bounding rectangle of the contour is at least as wide and tall as a digit. If it is, we extract and append the roi (Lines 31 and 32) followed by appending the location of the ROI to locs (Line 33). Otherwise, we take the following actions:

            # otherwise, we are examining one of the special symbols
            else:
                # MICR symbols include three separate parts, so we
                # need to grab the next two parts from our iterator,
                # followed by initializing the bounding box
                # coordinates for the symbol
                parts = [c, next(charIter), next(charIter)]
                (sXA, sYA, sXB, sYB) = (np.inf, np.inf, -np.inf,
                    -np.inf)

                # loop over the parts
                for p in parts:
                    # compute the bounding box for the part, then
                    # update our bookkeeping variables
                    (pX, pY, pW, pH) = cv2.boundingRect(p)
                    sXA = min(sXA, pX)
                    sYA = min(sYA, pY)
                    sXB = max(sXB, pX + pW)
                    sYB = max(sYB, pY + pH)

                # extract the ROI
                roi = image[sYA:sYB, sXA:sXB]
                rois.append(roi)
                locs.append((sXA, sYA, sXB, sYB))

In the above code block, we have determined that a contour is part of a special symbol (such as Transit, Dash, etc.). In this case, we take the current contour and the next two contours (using Python iterators which we discussed last week) on Line 41.

These parts of a special symbol are looped over so that we can calculate the bounding box for extracting the roi around all three contours (Lines 46-53). Then, as we did before, we extract the roi and append it to rois (Lines 56 and 57) followed by appending its location to locs (Line 58).
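The bounding box bookkeeping is easy to check in isolation. Below is a small sketch that merges several (x, y, w, h) boxes into a single enclosing (xA, yA, xB, yB) box using the same min/max logic. The `union_box` name and the sample coordinates are made up for illustration.

```python
def union_box(boxes):
    # boxes are (x, y, w, h) tuples, the format cv2.boundingRect returns
    xA = min(x for (x, y, w, h) in boxes)
    yA = min(y for (x, y, w, h) in boxes)
    xB = max(x + w for (x, y, w, h) in boxes)
    yB = max(y + h for (x, y, w, h) in boxes)
    return (xA, yA, xB, yB)


# three made-up parts of one symbol: a tall bar and two small squares
parts = [(10, 5, 4, 20), (16, 5, 4, 8), (16, 17, 4, 8)]
print(union_box(parts))  # (10, 5, 20, 25)
```

Note that the result uses corner coordinates rather than width and height, which is exactly the form needed for the array slice that extracts the ROI.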

Finally, we need to catch a StopIteration exception to gracefully exit our function:

        # we have reached the end of the iterator; gracefully break
        # from the loop
        except StopIteration:
            break

    # return a tuple of the ROIs and locations
    return (rois, locs)

Once we have reached the end of the charCnts list (and there are no further entries in the list), a next call on charIter will result in a StopIteration exception being thrown. Catching this exception allows us to break from our loop (Lines 62 and 63).
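If the iterator pattern feels unfamiliar, the following toy sketch mirrors the control flow without any image processing. It walks a list of heights, treats tall items as digits, and pulls two extra items for each short one, just as we do for three-part symbols. The `group_parts` function and its inputs are hypothetical and exist only to demonstrate the pattern.

```python
def group_parts(heights, min_h=15):
    it = iter(heights)
    groups = []

    while True:
        try:
            first = next(it)

            if first >= min_h:
                # tall enough to stand alone, like a digit
                groups.append([first])
            else:
                # short, so assume it starts a three-part symbol and
                # consume the next two items from the same iterator
                second = next(it)
                third = next(it)
                groups.append([first, second, third])

        # the iterator is exhausted, so stop looping
        except StopIteration:
            break

    return groups


print(group_parts([20, 6, 6, 6, 20]))  # [[20], [6, 6, 6], [20]]
print(group_parts([20, 6, 6]))         # [[20]]
```

The second call shows a side effect worth knowing about: if the list ends partway through a symbol, the StopIteration fires before the partial symbol is stored, so it is silently dropped.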

Finally, we return a 2-tuple containing rois and corresponding locs .

That was a quick recap of the extract_digits_and_symbols function — for a complete, detailed review, please refer to last week’s blog post.

Now it’s time to get to the new material. First, we’ll go through a couple code blocks that should also be a bit familiar:

# construct the argument parser and parse the arguments
ap = argparse.ArgumentParser()
ap.add_argument("-i", "--image", required=True,
    help="path to input image")
ap.add_argument("-r", "--reference", required=True,
    help="path to reference MICR E-13B font")
args = vars(ap.parse_args())

Lines 69-74 handle our command line argument parsing. In this script, we’ll make use of both the input --image and --reference MICR E-13B font image.
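If you would like to experiment from a notebook or a test rather than the command line, argparse also accepts an explicit list of arguments. The sketch below wraps the same two arguments in a hypothetical `parse_check_args` helper; the file names are simply the ones used in the example command later in this post.

```python
import argparse


def parse_check_args(argv=None):
    # argv=None falls back to sys.argv, which matches normal CLI usage
    ap = argparse.ArgumentParser()
    ap.add_argument("-i", "--image", required=True,
        help="path to input image")
    ap.add_argument("-r", "--reference", required=True,
        help="path to reference MICR E-13B font")
    return vars(ap.parse_args(argv))


args = parse_check_args(["--image", "example_check.png",
    "--reference", "micr_e13b_reference.png"])
print(args["image"], args["reference"])
```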

Let’s initialize our special characters (since they can’t be represented with Unicode in OpenCV) as well as pre-process our reference image:

# initialize the list of reference character names, in the same
# order as they appear in the reference image where the digits
# are their own names and:
# T = Transit (delimit bank branch routing transit #)
# U = On-us (delimit customer account number)
# A = Amount (delimit transaction amount)
# D = Dash (delimit parts of numbers, such as routing or account)
charNames = ["1", "2", "3", "4", "5", "6", "7", "8", "9", "0",
    "T", "U", "A", "D"]

# load the reference MICR image from disk, convert it to grayscale,
# and threshold it, such that the digits appear as *white* on a
# *black* background
ref = cv2.imread(args["reference"])
ref = cv2.cvtColor(ref, cv2.COLOR_BGR2GRAY)
ref = imutils.resize(ref, width=400)
ref = cv2.threshold(ref, 0, 255, cv2.THRESH_BINARY_INV |
    cv2.THRESH_OTSU)[1]

Lines 83 and 84 build a list of the character names including digits and special symbols.

Then, we load the --reference image while converting to grayscale and resizing, followed by inverse thresholding (Lines 89-93).

Below you can see the output of pre-processing our reference image:

Now we’re ready to find and sort contours in ref :

# find contours in the MICR image (i.e., the outlines of the
# characters) and sort them from left to right
refCnts = cv2.findContours(ref.copy(), cv2.RETR_EXTERNAL,
    cv2.CHAIN_APPROX_SIMPLE)
refCnts = imutils.grab_contours(refCnts)
refCnts = contours.sort_contours(refCnts, method="left-to-right")[0]

Reference image contours are computed on Lines 97 and 98 followed by updating the refCnts depending on which OpenCV version we are running (Line 99).

We sort the refCnts from left to right on Line 100.

At this point, we have our reference contours in an organized fashion. The next step is to extract the digits and symbols followed by building a dictionary of character ROIs:

# extract the digits and symbols from the list of contours, then
# initialize a dictionary to map the character name to the ROI
refROIs = extract_digits_and_symbols(ref, refCnts,
    minW=10, minH=20)[0]
chars = {}

# loop over the reference ROIs
for (name, roi) in zip(charNames, refROIs):
    # resize the ROI to a fixed size, then update the characters
    # dictionary, mapping the character name to the ROI
    roi = cv2.resize(roi, (36, 36))
    chars[name] = roi

We call the extract_digits_and_symbols function on Lines 104 and 105 providing the ref image and refCnts .

We then initialize a chars dictionary on Line 106. We populate this dictionary in the loop spanning Lines 109-113. In the dictionary, the character name (key) is associated with the roi image (value).
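One thing to keep in mind is that zip stops at the shorter of its two inputs, so a missed or extra contour in the reference image would quietly shift or drop labels. A defensive variant might look like the sketch below. The `build_char_map` helper is hypothetical, and plain strings stand in for the ROI images.

```python
def build_char_map(names, rois):
    # refuse to build the mapping if the counts disagree, since zip
    # would otherwise truncate silently
    if len(names) != len(rois):
        raise ValueError("expected {} ROIs, found {}".format(
            len(names), len(rois)))
    return dict(zip(names, rois))


names = ["1", "2", "T"]
print(build_char_map(names, ["roi_1", "roi_2", "roi_T"]))

try:
    build_char_map(names, ["roi_1", "roi_2"])
except ValueError as e:
    print("error:", e)
```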

Next, we’ll instantiate a kernel and load and extract the bottom 20% of the check image which contains the account number:

# initialize a rectangular kernel (wider than it is tall) along with
# an empty list to store the output of the check OCR
rectKernel = cv2.getStructuringElement(cv2.MORPH_RECT, (17, 7))
output = []

# load the input image, grab its dimensions, and apply array slicing
# to keep only the bottom 20% of the image (that's where the account
# information is)
image = cv2.imread(args["image"])
(h, w,) = image.shape[:2]
delta = int(h - (h * 0.2))
bottom = image[delta:h, 0:w]

We’ll apply a rectangular kernel to perform some morphological operations (initialized on Line 117). We also initialize an output list to contain the characters at the bottom of the check. We’ll print these characters to the terminal and also draw them on the check image later.

Lines 123-126 simply load the image , grab the dimensions, and extract the bottom 20% of the check image.
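The slicing arithmetic can be verified with a blank NumPy array in place of a real check. In the sketch below, the `crop_bottom` helper and the 500 x 1200 image size are assumptions chosen for illustration. The helper also returns delta, because we will need that offset later to map coordinates from the cropped strip back onto the full image.

```python
import numpy as np


def crop_bottom(image, fraction=0.2):
    # delta is the row where the bottom strip begins
    h = image.shape[0]
    delta = int(h - (h * fraction))
    return (image[delta:h], delta)


# a blank stand-in for a 500 x 1200 BGR check image
image = np.zeros((500, 1200, 3), dtype="uint8")
(bottom, delta) = crop_bottom(image)
print(delta, bottom.shape)
```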

Note: This is not rotation invariant. If your check could possibly be rotated, appearing upside down or vertical, then you will need to add logic to rotate it first. Applying a top-down perspective transform on the check (such as in our document scanner post) can help with this task.

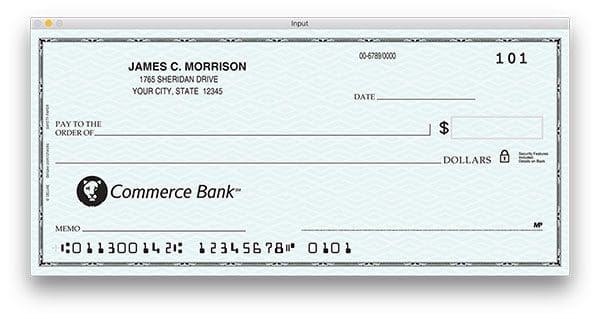

Below you can find our example check input image:

Next, let’s convert the check to grayscale and apply a morphological transformation:

# convert the bottom image to grayscale, then apply a blackhat
# morphological operator to find dark regions against a light
# background (i.e., the routing and account numbers)
gray = cv2.cvtColor(bottom, cv2.COLOR_BGR2GRAY)
blackhat = cv2.morphologyEx(gray, cv2.MORPH_BLACKHAT, rectKernel)

On Line 131 we convert the bottom of the check image to grayscale and on Line 132 we use the blackhat morphological operator to find dark regions against a light background. This operation makes use of our rectKernel .
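To build some intuition for what blackhat does, here is a one-dimensional NumPy sketch. Blackhat is the closing of an image (a dilation followed by an erosion) minus the image itself, so dark features narrower than the kernel light up while wide dark areas do not. This is a simplified stand-in, not OpenCV’s implementation: the helper names are hypothetical, the kernel size is assumed to be odd, and the edge padding is our own choice.

```python
import numpy as np


def _filter_1d(row, size, reducer):
    # slide a window of `size` pixels across the row, repeating the
    # edge values so the output has the same length as the input
    pad = size // 2
    padded = np.pad(row, pad, mode="edge")
    return np.array([reducer(padded[i:i + size])
        for i in range(len(row))])


def blackhat_1d(row, size=3):
    row = np.asarray(row, dtype=np.int32)
    dilated = _filter_1d(row, size, np.max)    # grow bright regions
    closed = _filter_1d(dilated, size, np.min) # shrink them back
    return closed - row


# a thin dark stroke on a light background is highlighted...
print(blackhat_1d([200, 200, 50, 200, 200]))

# ...while a dark region wider than the kernel is not
print(blackhat_1d([200, 50, 50, 50, 50, 200]))
```

This is why the kernel size matters: our (17, 7) kernel needs to be larger than the strokes of the MICR characters for them to survive the operation.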

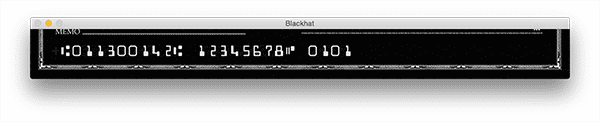

The result reveal our account and routing numbers:

Now let’s compute the Scharr gradient in the x-direction:

# compute the Scharr gradient of the blackhat image, then scale
# the rest back into the range [0, 255]
gradX = cv2.Sobel(blackhat, ddepth=cv2.CV_32F, dx=1, dy=0,
    ksize=-1)
gradX = np.absolute(gradX)
(minVal, maxVal) = (np.min(gradX), np.max(gradX))
gradX = (255 * ((gradX - minVal) / (maxVal - minVal)))
gradX = gradX.astype("uint8")

Starting from the blackhat image, we compute the Scharr gradient with the cv2.Sobel function, where ksize=-1 selects the Scharr kernel (Lines 136 and 137). We take the element-wise absolute value of gradX on Line 138.

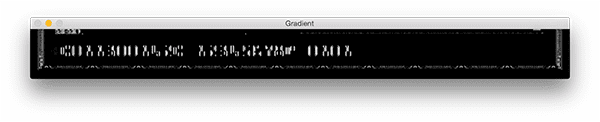

Then we scale the gradX to the range [0-255] on Lines 139-141:
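The min-max scaling step is plain NumPy, so it can be tried on a tiny array. The sketch below wraps it in a hypothetical `scale_to_uint8` helper and adds one guard that the listing does not have: if every gradient value is identical, the denominator would be zero, so we return an all-black image instead.

```python
import numpy as np


def scale_to_uint8(grad):
    grad = np.absolute(grad.astype("float32"))
    (minVal, maxVal) = (np.min(grad), np.max(grad))

    # a perfectly flat input would cause a division by zero below
    if maxVal == minVal:
        return np.zeros(grad.shape, dtype="uint8")

    scaled = 255 * ((grad - minVal) / (maxVal - minVal))
    return scaled.astype("uint8")


grad = np.array([[-4.0, 0.0, 8.0]], dtype="float32")
print(scale_to_uint8(grad))  # [[127   0 255]]
```

Notice that the cast to uint8 truncates rather than rounds, which is why 127.5 becomes 127.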

Let’s see if we can close the gaps between the characters and binarize the image:

# apply a closing operation using the rectangular kernel to help
# close gaps in between routing and account digits, then apply
# Otsu's thresholding method to binarize the image
gradX = cv2.morphologyEx(gradX, cv2.MORPH_CLOSE, rectKernel)
thresh = cv2.threshold(gradX, 0, 255,
    cv2.THRESH_BINARY | cv2.THRESH_OTSU)[1]

On Line 146, we utilize our kernel again while applying a closing operation. We follow this by performing a binary threshold on Lines 147 and 148.
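Passing 0 as the threshold together with cv2.THRESH_OTSU tells OpenCV to pick the threshold for us. For the curious, here is a rough NumPy sketch of the idea behind Otsu’s method: try every possible threshold and keep the one that maximizes the between-class variance of the two resulting pixel groups. The `otsu_threshold` function is our own illustrative implementation and is not guaranteed to return exactly the same value as OpenCV in every case.

```python
import numpy as np


def otsu_threshold(gray):
    # histogram of the 256 possible intensity values
    hist = np.bincount(gray.ravel(), minlength=256).astype("float64")
    total = hist.sum()
    levels = np.arange(256)
    (best_t, best_var) = (0, -1.0)

    for t in range(256):
        # pixel counts at or below t (class 0) and above t (class 1)
        w0 = hist[:t + 1].sum()
        w1 = total - w0
        if w0 == 0 or w1 == 0:
            continue

        # mean intensity of each class
        mu0 = (hist[:t + 1] * levels[:t + 1]).sum() / w0
        mu1 = (hist[t + 1:] * levels[t + 1:]).sum() / w1

        # between-class variance (up to a constant factor)
        var_between = w0 * w1 * (mu0 - mu1) ** 2
        if var_between > best_var:
            (best_t, best_var) = (t, var_between)

    return best_t


# a toy image with a dark cluster and a bright cluster
gray = np.array([[10, 10, 10, 10, 200, 200, 200, 200]], dtype="uint8")
t = otsu_threshold(gray)
binary = np.where(gray > t, 255, 0).astype("uint8")
print(t, binary)
```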

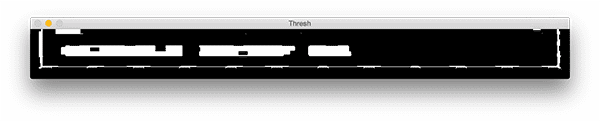

The result of this operation can be seen below:

When pre-processing a check image, our morphological and thresholding operations will undoubtedly leave “false-positive” detection regions. We can apply a bit of extra processing to help remove these regions:

# remove any pixels that are touching the borders of the image (this
# simply helps us in the next step when we prune contours)
thresh = clear_border(thresh)

Line 152 clears the border by removing any connected regions that touch the edge of the image; the result is subtle but will prove to be very helpful:

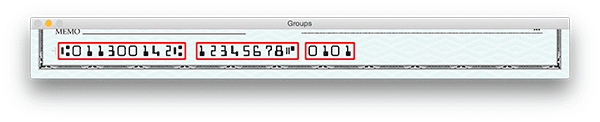
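To see what clearing the border means in practice, here is a simplified, pure NumPy sketch of the idea: start from every foreground pixel on the image edge and flood outward, erasing everything connected to it. This is only a stand-in for skimage’s clear_border. The `clear_border_simple` name is hypothetical, and we assume 8-connectivity (diagonal neighbors count as connected).

```python
from collections import deque

import numpy as np


def clear_border_simple(binary):
    out = binary.copy()
    (h, w) = out.shape
    queue = deque()

    # seed the flood fill with foreground pixels on the image border
    for y in range(h):
        for x in range(w):
            on_border = y in (0, h - 1) or x in (0, w - 1)
            if on_border and out[y, x] > 0:
                out[y, x] = 0
                queue.append((y, x))

    # erase every foreground pixel reachable from a border pixel
    while queue:
        (y, x) = queue.popleft()
        for dy in (-1, 0, 1):
            for dx in (-1, 0, 1):
                (ny, nx) = (y + dy, x + dx)
                if 0 <= ny < h and 0 <= nx < w and out[ny, nx] > 0:
                    out[ny, nx] = 0
                    queue.append((ny, nx))

    return out


thresh = np.array([
    [255, 255,   0,   0,   0],
    [  0, 255,   0,   0,   0],
    [  0,   0,   0, 255,   0],
    [  0,   0,   0,   0,   0]], dtype="uint8")
cleaned = clear_border_simple(thresh)
print(cleaned)
```

The blob in the top-left corner touches the edge and is removed entirely, while the isolated interior pixel survives.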

As the image above displays, we have clearly found our three groupings of numbers on the check. But how do we go about actually extracting each of the individual groups? The following code block will show us how:

# find contours in the thresholded image, then initialize the
# list of group locations
groupCnts = cv2.findContours(thresh.copy(), cv2.RETR_EXTERNAL,
    cv2.CHAIN_APPROX_SIMPLE)
groupCnts = imutils.grab_contours(groupCnts)
groupLocs = []

# loop over the group contours
for (i, c) in enumerate(groupCnts):
    # compute the bounding box of the contour
    (x, y, w, h) = cv2.boundingRect(c)

    # only accept the contour region as a grouping of characters if
    # the ROI is sufficiently large
    if w > 50 and h > 15:
        groupLocs.append((x, y, w, h))

# sort the digit locations from left-to-right
groupLocs = sorted(groupLocs, key=lambda x:x[0])

On Lines 156-158 we find our contours and also take care of the pesky OpenCV version incompatibility.

Next, we initialize a list to contain our number group locations (Line 159).

Looping over the groupCnts , we determine the contour bounding box (Line 164) and check whether the box dimensions qualify as a grouping of characters. If they do, we append the bounding box to groupLocs (Lines 168 and 169).

Using a lambda as the sort key, we sort the group locations from left to right by their x-coordinate (Line 172).
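The filter-then-sort logic does not depend on OpenCV at all, so we can sanity check it with hand-written boxes. In this sketch the `filter_groups` helper and the sample boxes are made up; the size thresholds are the same ones used in the listing.

```python
def filter_groups(boxes, min_w=50, min_h=15):
    # keep boxes that are strictly larger than both thresholds
    keep = [(x, y, w, h) for (x, y, w, h) in boxes
        if w > min_w and h > min_h]

    # order the survivors by their x-coordinate
    return sorted(keep, key=lambda box: box[0])


boxes = [
    (400, 30, 120, 22),  # a group of characters
    (10, 5, 8, 8),       # small speck of noise
    (60, 28, 210, 24),   # another group, further left
    (300, 2, 90, 6),     # wide but too short (e.g., a printed line)
]
print(filter_groups(boxes))  # [(60, 28, 210, 24), (400, 30, 120, 22)]
```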

Our group regions are shown on this image:

Next, let’s loop over the group locations:

# loop over the group locations
for (gX, gY, gW, gH) in groupLocs:
    # initialize the group output of characters
    groupOutput = []

    # extract the group ROI of characters from the grayscale
    # image, then apply thresholding to segment the digits from
    # the background of the check
    group = gray[gY - 5:gY + gH + 5, gX - 5:gX + gW + 5]
    group = cv2.threshold(group, 0, 255,
        cv2.THRESH_BINARY_INV | cv2.THRESH_OTSU)[1]

    cv2.imshow("Group", group)
    cv2.waitKey(0)

    # find character contours in the group, then sort them from
    # left to right
    charCnts = cv2.findContours(group.copy(), cv2.RETR_EXTERNAL,
        cv2.CHAIN_APPROX_SIMPLE)
    charCnts = imutils.grab_contours(charCnts)
    charCnts = contours.sort_contours(charCnts,
        method="left-to-right")[0]

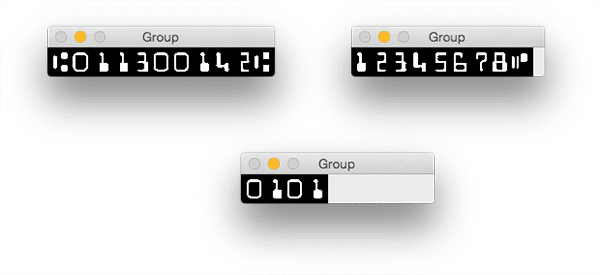

In the loop, first, we initialize a groupOutput list which will later be appended to the output list (Line 177).

Subsequently, we extract the character grouping ROI from the image (Line 182) and threshold it (Lines 183 and 184).

For developmental and debugging purposes (Lines 186 and 187) we show the group to the screen and wait for a keypress before moving onward (feel free to remove this code from your script if you so wish).

We find and sort character contours within the group on Lines 191-195. The results of this step are shown in Figure 10.

Now, let’s extract digits and symbols with our function and then loop over the rois :

    # find the characters and symbols in the group
    (rois, locs) = extract_digits_and_symbols(group, charCnts)

    # loop over the ROIs from the group
    for roi in rois:
        # initialize the list of template matching scores and
        # resize the ROI to a fixed size
        scores = []
        roi = cv2.resize(roi, (36, 36))

        # loop over the reference character name and corresponding
        # ROI
        for charName in charNames:
            # apply correlation-based template matching, take the
            # score, and update the scores list
            result = cv2.matchTemplate(roi, chars[charName],
                cv2.TM_CCOEFF)
            (_, score, _, _) = cv2.minMaxLoc(result)
            scores.append(score)

        # the classification for the character ROI will be the
        # reference character name with the *largest* template
        # matching score
        groupOutput.append(charNames[np.argmax(scores)])

On Line 198, we provide the group and charCnts to the extract_digits_and_symbols function, which returns rois and locs .

We loop over the rois , first initializing a template matching score list, followed by resizing the roi to known dimensions.

We loop over the character names and perform template matching which compares the query image roi to the possible character images (they are stored in the chars dictionary and indexed by charName ) on Lines 212 and 213.

To extract a template matching score for this operation, we use the cv2.minMaxLoc function, and subsequently, we append it to scores on Line 215.

The last step in this code block is to take the maximum score from scores and use it to find the character name — we append the result to groupOutput (Line 220).
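Because the ROI and the template are both resized to 36 x 36, the template can only be placed at one position, so the matching result holds a single score. As we understand the TM_CCOEFF definition, that score amounts to multiplying the two mean-subtracted images pixel by pixel and summing. The NumPy sketch below uses that reading to classify a toy 4 x 4 "character". The function names and the tiny templates are hypothetical, and this is meant to show the idea rather than reproduce OpenCV’s output exactly.

```python
import numpy as np


def ccoeff_score(roi, template):
    # subtract each image's mean, then sum the pixel-wise products
    roi = roi.astype("float64")
    template = template.astype("float64")
    return float(np.sum((roi - roi.mean()) *
        (template - template.mean())))


def classify(roi, templates):
    # score the ROI against every template and keep the best name
    names = list(templates.keys())
    scores = [ccoeff_score(roi, templates[n]) for n in names]
    return names[int(np.argmax(scores))]


# two toy templates: a vertical bar and a horizontal bar
vertical = np.zeros((4, 4), dtype="uint8")
vertical[:, 1] = 255
horizontal = np.zeros((4, 4), dtype="uint8")
horizontal[1, :] = 255
templates = {"V": vertical, "H": horizontal}

# a noisy query: the vertical bar plus one stray pixel
query = vertical.copy()
query[0, 3] = 255
print(classify(query, templates))  # V
```

Even with the stray pixel, the vertical template scores far higher, which is the same reason template matching tolerates small amounts of noise on a clean check.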

You can read more about this template matching-based approach to OCR in our previous blog post on Credit Card OCR.

Next, we’ll draw on the original image and append the groupOutput result to a list named output .

    # draw (padded) bounding box surrounding the group along with
    # the OCR output of the group
    cv2.rectangle(image, (gX - 10, gY + delta - 10),
        (gX + gW + 10, gY + gH + delta), (0, 0, 255), 2)
    cv2.putText(image, "".join(groupOutput),
        (gX - 10, gY + delta - 25), cv2.FONT_HERSHEY_SIMPLEX,
        0.95, (0, 0, 255), 3)

    # add the group output to the overall check OCR output
    output.append("".join(groupOutput))

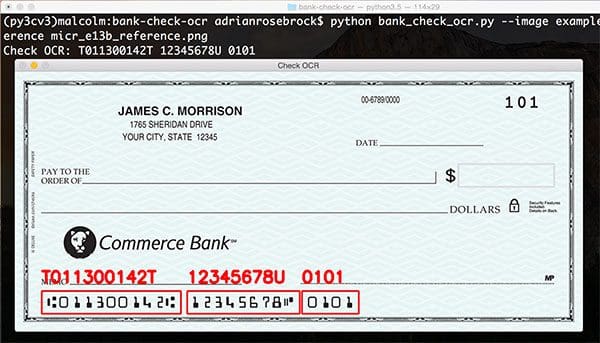

Lines 224 and 225 handle drawing a red rectangle around the groups and Lines 226-228 draw the group output characters (routing, checking, and check numbers) on the image.
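The coordinate arithmetic here is worth spelling out: the group boxes were found in the cropped bottom strip, so every y-coordinate must be shifted down by delta before drawing on the full image. The sketch below isolates that mapping in a hypothetical `group_box_on_full_image` helper. It assumes the bottom edge of the box is gY + gH and that the padding is applied on all four sides, which is our own choice for illustration.

```python
def group_box_on_full_image(gX, gY, gW, gH, delta, pad=10):
    # x-coordinates are unchanged by the crop; y-coordinates are
    # offset by the row where the bottom strip begins
    top_left = (gX - pad, gY + delta - pad)
    bottom_right = (gX + gW + pad, gY + gH + delta + pad)
    return (top_left, bottom_right)


# a made-up group at (40, 12) in the strip, which starts at row 400
print(group_box_on_full_image(40, 12, 100, 30, delta=400))
# ((30, 402), (150, 452))
```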

Finally, we append the joined groupOutput string to the output list (Line 231).

Our final step is to write the OCR text to our terminal and display the final output image:

# display the output check OCR information to the screen
print("Check OCR: {}".format(" ".join(output)))
cv2.imshow("Check OCR", image)
cv2.waitKey(0)

We print the OCR results to the terminal, display the image to the screen, and wait until a key is pressed to exit on Lines 234-236.

Let’s see how our bank check OCR system performs in the next section.

Bank check OCR results

To apply our bank check OCR algorithm, make sure you use the “Downloads” section of this blog post to download the source code + example image.

From there, execute the following command:

$ python bank_check_ocr.py --image example_check.png \
    --reference micr_e13b_reference.png

The results of our hard work can be seen below:

Improving our bank check OCR system

In this particular example, we were able to get away with using basic template matching as our character recognition algorithm.

However, template matching is not the most reliable method for character recognition, especially for real-world images that are likely to be much noisier and harder to segment.

In these cases, it would be best to train your own HOG + Linear SVM classifier or a Convolutional Neural Network. To accomplish this, you’ll want to create a dataset of check images and manually label and extract each digit in the image. I would recommend having 1,000-5,000 digits per character and then training your classifier.

From there, you’ll be able to enjoy much higher character classification accuracy — the biggest problem is simply creating/obtaining such a dataset.

Since checks by their very nature contain sensitive information, it’s often hard to find a dataset that is not only (1) representative of real-world bank check images but is also (2) cheap/easy to license.

Many of these datasets belong to the banks themselves, making it hard for computer vision researchers and developers to work with them.


Summary

In today’s blog post we learned how to apply bank check OCR to images using OpenCV, Python, and template matching. In fact, this is the same method that we used for credit card OCR. The primary difference is that we had to take special care to extract each MICR E-13B symbol, especially when these symbols contain multiple contours.

However, while our template matching method worked correctly on this particular example image, real-world inputs are likely to be much more noisy, making it harder for us to extract the digits and symbols using simple contour techniques.

In these situations, it would be best to localize each of the digits and characters followed by applying machine learning to obtain higher digit classification accuracy. Methods such as Histogram of Oriented Gradients + Linear SVM and deep learning will obtain better digit and symbol recognition accuracy on real-world images that contain more noise.

If you are interested in learning more about HOG + Linear SVM along with deep learning, be sure to take a look at the PyImageSearch Gurus course.
