Today’s blog post is the long-awaited tutorial on real-time drowsiness detection on the Raspberry Pi!

$ workon cv
$ pip install RPi.GPIO
$ pip install gpiozero

From there, if you want to check that everything is installed properly in your virtual environment you may run the Python interpreter directly:

$ workon cv
$ python
>>> import RPi.GPIO
>>> import gpiozero
>>> import numpy
>>> import dlib
>>> import cv2
>>> import imutils

Note: I’ve made the assumption that the virtual environment you are using already has the above packages installed in it. My cv virtual environment has NumPy, dlib, OpenCV, and imutils already installed, so by using pip to install the RPi.GPIO and gpiozero packages, I’m able to access all six libraries from within the same environment. You may pip install each of the packages (except for OpenCV). To install an optimized OpenCV on your Raspberry Pi, just follow this previous post. If you are having trouble getting dlib installed, please follow this guide.

The driver drowsiness detection algorithm is identical to the one we implemented in our previous tutorial.

To start, we will apply OpenCV’s Haar cascades to detect the face in an image, which boils down to finding the bounding box (x, y)-coordinates of the face in the frame.

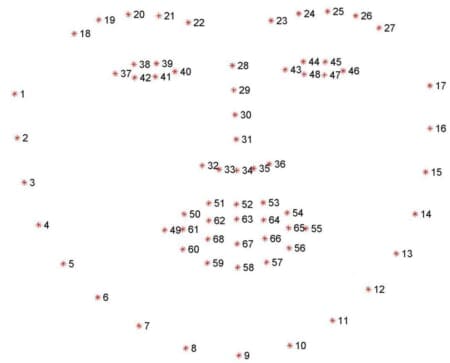

Given the bounding box of the face, we can apply dlib’s facial landmark predictor to obtain 68 salient points used to localize the eyes, eyebrows, nose, mouth, and jawline:

As I discuss in this tutorial, dlib’s 68 facial landmarks are indexable which enables us to extract the various facial structures using simple Python array slices.
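To make the slicing idea concrete, here is a minimal sketch. It assumes the (start, end) index pairs below match the 68-point mapping that imutils exposes as face_utils.FACIAL_LANDMARKS_IDXS, and it uses a placeholder array instead of real predictor output:

```python
import numpy as np

# Assumption: these (start, end) pairs mirror the 68-point mapping found in
# imutils' face_utils.FACIAL_LANDMARKS_IDXS for the two eyes.
LEFT_EYE = (42, 48)
RIGHT_EYE = (36, 42)

# Placeholder for the (68, 2) array that face_utils.shape_to_np returns;
# real (x, y) values would come from dlib's landmark predictor.
shape = np.arange(68 * 2).reshape(68, 2)

# Each eye is described by six consecutive landmarks, so a plain slice
# is enough to pull it out of the full set.
left_eye = shape[LEFT_EYE[0]:LEFT_EYE[1]]
right_eye = shape[RIGHT_EYE[0]:RIGHT_EYE[1]]

print(left_eye.shape, right_eye.shape)
```

Each slice is a 6 x 2 array, one row per eye landmark.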

Given the facial landmarks associated with an eye, we can apply the Eye Aspect Ratio (EAR) algorithm, which was introduced by Soukupová and Čech in their 2016 paper, Real-Time Eye Blink Detection using Facial Landmarks:

On the top-left we have an eye that is fully open and the eye facial landmarks plotted. Then on the top-right we have an eye that is closed. The bottom then plots the eye aspect ratio over time. As we can see, the eye aspect ratio is constant (indicating that the eye is open), then rapidly drops to close to zero, then increases again, indicating a blink has taken place.

You can read more about the blink detection algorithm and the eye aspect ratio in this post dedicated to blink detection.

In our drowsiness detector case, we’ll be monitoring the eye aspect ratio to see if the value falls but does not increase again, thus implying that the driver/user has closed their eyes.

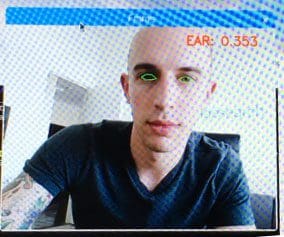

Once implemented, our algorithm will start by localizing the facial landmarks and extracting the eye regions:

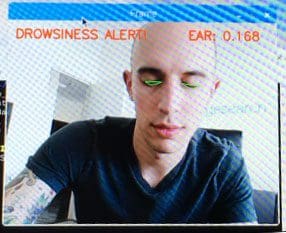

We can then monitor the eye aspect ratio to determine if the eyes are closed:

And then finally raise an alarm if the eye aspect ratio is below a pre-defined threshold for a sufficiently long amount of time (indicating that the driver/user is tired):

In the next section, we’ll implement the optimized drowsiness detection algorithm detailed above on the Raspberry Pi using OpenCV, dlib, and Python.

A real-time drowsiness detector on the Raspberry Pi with OpenCV and dlib

Open up a new file in your favorite editor or IDE and name it pi_drowsiness_detection.py. From there, let’s get started coding:

# import the necessary packages
from imutils.video import VideoStream
from imutils import face_utils
import numpy as np
import argparse
import imutils
import time
import dlib
import cv2

Lines 1-9 handle our imports — make sure you have each of these installed in your virtual environment.

From there let’s define a distance function:

def euclidean_dist(ptA, ptB):
    # compute and return the euclidean distance between the two
    # points
    return np.linalg.norm(ptA - ptB)

On Lines 11-14 we define a convenience function for calculating the Euclidean distance using NumPy. Euclidean is arguably the most well known and most used distance metric. The Euclidean distance is normally described as the distance between two points “as the crow flies”.
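As a quick sanity check, the snippet below compares the NumPy norm against the textbook square-root-of-squared-differences formula. The two points are made up for illustration:

```python
import numpy as np

def euclidean_dist(ptA, ptB):
    # same helper as in the tutorial: the L2 norm of the difference vector
    return np.linalg.norm(ptA - ptB)

# Illustrative points forming a 3-4-5 right triangle.
ptA = np.array([0, 0])
ptB = np.array([3, 4])

by_norm = euclidean_dist(ptA, ptB)
# Manual version: square the per-axis differences, sum them, take the root.
by_hand = np.sqrt(((ptA - ptB) ** 2).sum())

print(by_norm, by_hand)
```

Both expressions give the same distance, so the helper is simply a shorter way to write the familiar formula.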

Now let’s define our Eye Aspect Ratio (EAR) function which is used to compute the ratio of distances between the vertical eye landmarks and the distances between the horizontal eye landmarks:

def eye_aspect_ratio(eye):
    # compute the euclidean distances between the two sets of
    # vertical eye landmarks (x, y)-coordinates
    A = euclidean_dist(eye[1], eye[5])
    B = euclidean_dist(eye[2], eye[4])

    # compute the euclidean distance between the horizontal
    # eye landmark (x, y)-coordinates
    C = euclidean_dist(eye[0], eye[3])

    # compute the eye aspect ratio
    ear = (A + B) / (2.0 * C)

    # return the eye aspect ratio
    return ear

The return value will be approximately constant when the eye is open and will decrease towards zero during a blink. If the eye is closed, the eye aspect ratio will remain constant at a much smaller value.
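To see that behavior numerically, here is a small worked example. The six eye points are synthetic and chosen only so the arithmetic is easy to follow; they are not real landmark output:

```python
import numpy as np

def euclidean_dist(ptA, ptB):
    return np.linalg.norm(ptA - ptB)

def eye_aspect_ratio(eye):
    # vertical distances
    A = euclidean_dist(eye[1], eye[5])
    B = euclidean_dist(eye[2], eye[4])
    # horizontal distance
    C = euclidean_dist(eye[0], eye[3])
    return (A + B) / (2.0 * C)

# Synthetic "open" eye: 3 units wide and 2 units tall, with points ordered
# like the six eye landmarks (corner, two upper lid, corner, two lower lid).
open_eye = np.array([[0, 0], [1, 1], [2, 1],
                     [3, 0], [2, -1], [1, -1]], dtype=float)

# Synthetic "closed" eye: same width, but the lids are squeezed to 10% height.
closed_eye = open_eye * np.array([1.0, 0.1])

ear_open = eye_aspect_ratio(open_eye)      # (2 + 2) / (2 * 3)
ear_closed = eye_aspect_ratio(closed_eye)  # (0.2 + 0.2) / (2 * 3)

print(round(ear_open, 3), round(ear_closed, 3))
```

The open eye lands well above a threshold of 0.3 and the closed eye lands well below it, which is the gap the detector relies on.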

From there, we need to parse our command line arguments:

# construct the argument parse and parse the arguments
ap = argparse.ArgumentParser()
ap.add_argument("-c", "--cascade", required=True,
    help="path to where the face cascade resides")
ap.add_argument("-p", "--shape-predictor", required=True,
    help="path to facial landmark predictor")
ap.add_argument("-a", "--alarm", type=int, default=0,
    help="boolean used to indicate if TrafficHat should be used")
args = vars(ap.parse_args())

We have defined two required arguments and one optional one on Lines 33-40:

- --cascade: The path to the Haar cascade XML file used for face detection.
- --shape-predictor: The path to the dlib facial landmark predictor file.
- --alarm: A boolean to indicate if the TrafficHat buzzer should be used when drowsiness is detected.

Both the --cascade and --shape-predictor files are available in the “Downloads” section at the end of the post.
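If you are wondering how these flags turn into dictionary keys, the sketch below feeds the same parser a list of arguments instead of the real command line. The file names are placeholders, not the actual downloads:

```python
import argparse

ap = argparse.ArgumentParser()
ap.add_argument("-c", "--cascade", required=True)
ap.add_argument("-p", "--shape-predictor", required=True)
ap.add_argument("-a", "--alarm", type=int, default=0)

# Passing a list to parse_args() stands in for sys.argv.
# "face.xml" and "landmarks.dat" are placeholder file names.
args = vars(ap.parse_args(["--cascade", "face.xml",
                           "--shape-predictor", "landmarks.dat"]))

# argparse converts the dash in --shape-predictor to an underscore,
# and --alarm falls back to its default of 0 when omitted.
print(args)
```

This is why the rest of the script reads args["shape_predictor"] with an underscore even though the flag is typed with a dash.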

If the --alarm flag is set, we’ll set up the TrafficHat:

# check to see if we are using GPIO/TrafficHat as an alarm
if args["alarm"] > 0:
    from gpiozero import TrafficHat
    th = TrafficHat()
    print("[INFO] using TrafficHat alarm...")

As shown in Lines 43-46, if the argument supplied is greater than 0, we’ll import the TrafficHat class from gpiozero and instantiate it to handle our buzzer alarm.

Let’s also define a set of important configuration variables:

# define two constants, one for the eye aspect ratio to indicate
# blink and then a second constant for the number of consecutive
# frames the eye must be below the threshold for to set off the
# alarm
EYE_AR_THRESH = 0.3
EYE_AR_CONSEC_FRAMES = 16

# initialize the frame counter as well as a boolean used to
# indicate if the alarm is going off
COUNTER = 0
ALARM_ON = False

The two constants on Lines 52 and 53 define the EAR threshold and the number of consecutive frames the eyes must be closed for the driver to be considered drowsy, respectively.

Then we initialize the frame counter and a boolean for the alarm (Lines 57 and 58).
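Because the second constant is measured in frames, the real-world delay before the alarm depends on how many frames per second your Pi actually processes. The helpers below are a hypothetical sketch for converting between the two; the frame rate used is an example figure, not a measurement from this tutorial:

```python
EYE_AR_CONSEC_FRAMES = 16

def seconds_until_alarm(consec_frames, fps):
    # how long the eyes must stay closed before the alarm fires
    return consec_frames / float(fps)

def frames_for_delay(seconds, fps):
    # inverse: pick a frame count that gives roughly the desired delay
    return max(1, int(round(seconds * fps)))

# Assumption: the pipeline runs at about 8 FPS. Measure your own setup.
example_fps = 8
print(seconds_until_alarm(EYE_AR_CONSEC_FRAMES, example_fps))
print(frames_for_delay(2.0, example_fps))
```

If your pipeline runs faster or slower than the example figure, adjusting EYE_AR_CONSEC_FRAMES this way keeps the delay roughly the same in seconds.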

From there we’ll load our Haar cascade and facial landmark predictor files:

# load OpenCV's Haar cascade for face detection (which is faster than
# dlib's built-in HOG detector, but less accurate), then create the
# facial landmark predictor
print("[INFO] loading facial landmark predictor...")
detector = cv2.CascadeClassifier(args["cascade"])
predictor = dlib.shape_predictor(args["shape_predictor"])

Line 64 differs from the face detector initialization from our previous post on drowsiness detection — here we use a faster detection algorithm (Haar cascades) while sacrificing accuracy. Haar cascades are faster than dlib’s face detector (which is HOG + Linear SVM-based) making it a great choice for the Raspberry Pi.

There are no changes to Line 65 where we load up dlib’s shape_predictor while providing the path to the file.

Next, we’ll initialize the indexes of the facial landmarks for each eye:

# grab the indexes of the facial landmarks for the left and
# right eye, respectively
(lStart, lEnd) = face_utils.FACIAL_LANDMARKS_IDXS["left_eye"]
(rStart, rEnd) = face_utils.FACIAL_LANDMARKS_IDXS["right_eye"]

Here we supply array slice indexes in order to extract the eye regions from the set of facial landmarks.

We’re now ready to start our video stream thread:

# start the video stream thread
print("[INFO] starting video stream thread...")
vs = VideoStream(src=0).start()
# vs = VideoStream(usePiCamera=True).start()
time.sleep(1.0)

If you are using the PiCamera module, be sure to comment out Line 74 and uncomment Line 75 to switch the video stream to the Raspberry Pi camera. Otherwise if you are using a USB camera, you can leave this unchanged.

We sleep for one second so the camera sensor can warm up.

From there let’s loop over the frames from the video stream:

# loop over frames from the video stream
while True:
    # grab the frame from the threaded video file stream, resize
    # it, and convert it to grayscale
    frame = vs.read()
    frame = imutils.resize(frame, width=450)
    gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)

    # detect faces in the grayscale frame
    rects = detector.detectMultiScale(gray, scaleFactor=1.1,
        minNeighbors=5, minSize=(30, 30),
        flags=cv2.CASCADE_SCALE_IMAGE)

The beginning of this loop should look familiar if you’ve read the previous post. We read a frame, resize it (for efficiency), and convert it to grayscale (Lines 83-85).

Then we detect faces in the grayscale image with our detector on Lines 88-90.
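The resize step keeps the aspect ratio intact when only a width is given. The helper below is a hypothetical stand-in that mirrors that arithmetic as I understand it, so you can predict the frame size without running OpenCV:

```python
def resized_dimensions(orig_w, orig_h, width):
    # scale the height by the same ratio applied to the width
    ratio = width / float(orig_w)
    return (width, int(orig_h * ratio))

# Assumption: the camera delivers 640x480 frames.
print(resized_dimensions(640, 480, 450))
```

Fewer pixels means less work for both the Haar cascade and the landmark predictor, which matters on the Pi.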

Now let’s loop over the detections:

    # loop over the face detections
    for (x, y, w, h) in rects:
        # construct a dlib rectangle object from the Haar cascade
        # bounding box
        rect = dlib.rectangle(int(x), int(y), int(x + w),
            int(y + h))

        # determine the facial landmarks for the face region, then
        # convert the facial landmark (x, y)-coordinates to a NumPy
        # array
        shape = predictor(gray, rect)
        shape = face_utils.shape_to_np(shape)

Line 93 begins a lengthy for-loop which is broken down into several code blocks here. First we extract the coordinates and width + height of the rects detections. Then, on Lines 96 and 97 we construct a dlib rectangle object using the information extracted from the Haar cascade bounding box.

From there, we determine the facial landmarks for the face region (Line 102) and convert the facial landmark (x, y)-coordinates to a NumPy array.
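The conversion from the Haar cascade format to the corner format is simple arithmetic. The helper name below is made up for illustration, and the sketch leaves dlib out so it runs anywhere:

```python
def bbox_to_corners(x, y, w, h):
    # Haar cascades report (x, y, width, height); dlib.rectangle expects
    # (left, top, right, bottom), so the far corner is origin plus size.
    # int() guards against NumPy integer types coming out of OpenCV.
    return (int(x), int(y), int(x + w), int(y + h))

# Illustrative detection: a 30x40 box whose top-left corner is at (10, 20).
print(bbox_to_corners(10, 20, 30, 40))
```

In the script, these four values are what get passed to dlib.rectangle.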

Given our NumPy array, shape, we can extract each eye’s coordinates and compute the EAR:

        # extract the left and right eye coordinates, then use the
        # coordinates to compute the eye aspect ratio for both eyes
        leftEye = shape[lStart:lEnd]
        rightEye = shape[rStart:rEnd]
        leftEAR = eye_aspect_ratio(leftEye)
        rightEAR = eye_aspect_ratio(rightEye)

        # average the eye aspect ratio together for both eyes
        ear = (leftEAR + rightEAR) / 2.0

Utilizing the indexes of the eye landmarks, we can slice the shape array to obtain the (x, y)-coordinates of each eye (Lines 107 and 108).

We then calculate the EAR for each eye on Lines 109 and 110.

Soukupová and Čech recommend averaging both eye aspect ratios together to obtain a better estimation (Line 113).

This next block is strictly for visualization purposes:

        # compute the convex hull for the left and right eye, then
        # visualize each of the eyes
        leftEyeHull = cv2.convexHull(leftEye)
        rightEyeHull = cv2.convexHull(rightEye)
        cv2.drawContours(frame, [leftEyeHull], -1, (0, 255, 0), 1)
        cv2.drawContours(frame, [rightEyeHull], -1, (0, 255, 0), 1)

We can visualize each of the eye regions on our frame by using cv2.drawContours and supplying the cv2.convexHull calculation of each eye (Lines 117-120). These few lines are great for debugging our script but aren’t necessary if you are making an embedded product with no screen.

From there, we will check our Eye Aspect Ratio (ear) and frame counter (COUNTER) to see if the eyes are closed, while sounding the alarm to alert the drowsy driver if needed:

        # check to see if the eye aspect ratio is below the blink
        # threshold, and if so, increment the blink frame counter
        if ear < EYE_AR_THRESH:
            COUNTER += 1

            # if the eyes were closed for a sufficient number of
            # frames, then sound the alarm
            if COUNTER >= EYE_AR_CONSEC_FRAMES:
                # if the alarm is not on, turn it on
                if not ALARM_ON:
                    ALARM_ON = True

                    # check to see if the TrafficHat buzzer should
                    # be sounded
                    if args["alarm"] > 0:
                        th.buzzer.blink(0.1, 0.1, 10,
                            background=True)

                # draw an alarm on the frame
                cv2.putText(frame, "DROWSINESS ALERT!", (10, 30),
                    cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)

        # otherwise, the eye aspect ratio is not below the blink
        # threshold, so reset the counter and alarm
        else:
            COUNTER = 0
            ALARM_ON = False

On Line 124 we check the ear against the EYE_AR_THRESH — if it is less than the threshold (eyes are closed), we increment our COUNTER (Line 125) and subsequently check it to see if the eyes have been closed for enough consecutive frames to sound the alarm (Line 129).

If the alarm isn’t on, we turn it on for a few seconds to wake up the drowsy driver. This is accomplished on Lines 136-138.

Optionally (if you’re implementing this code with a screen), you can draw the alarm on the frame as I have done on Lines 141 and 142.

That brings us to the case where the ear wasn’t less than the EYE_AR_THRESH — in this case we reset our COUNTER to 0 and make sure our alarm is turned off (Lines 146-148).
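The counter logic can be tried out away from the camera. The function below is a hypothetical, hardware-free restatement of the same rule, run over hand-written EAR sequences rather than real video:

```python
def first_alarm_frame(ears, thresh=0.3, consec_frames=16):
    # Returns the index of the frame where the alarm would first fire,
    # or None if the eyes never stay closed long enough.
    counter = 0
    for i, ear in enumerate(ears):
        if ear < thresh:
            counter += 1
            if counter >= consec_frames:
                return i
        else:
            # eyes reopened, so start counting from scratch
            counter = 0
    return None

# Made-up sequences: a 3-frame blink versus 20 frames of closed eyes.
blink = [0.35] * 5 + [0.1] * 3 + [0.35] * 5
drowsy = [0.35] * 5 + [0.1] * 20

print(first_alarm_frame(blink))
print(first_alarm_frame(drowsy))
```

A normal blink resets the counter before it reaches the limit, while a sustained closure triggers on the sixteenth consecutive low frame.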

We’re almost done. In our last code block we’ll draw the EAR on the frame, display the frame, and do some cleanup:

        # draw the computed eye aspect ratio on the frame to help
        # with debugging and setting the correct eye aspect ratio
        # thresholds and frame counters
        cv2.putText(frame, "EAR: {:.3f}".format(ear), (300, 30),
            cv2.FONT_HERSHEY_SIMPLEX, 0.7, (0, 0, 255), 2)

    # show the frame
    cv2.imshow("Frame", frame)
    key = cv2.waitKey(1) & 0xFF

    # if the `q` key was pressed, break from the loop
    if key == ord("q"):
        break

# do a bit of cleanup
cv2.destroyAllWindows()
vs.stop()

If you’re integrating with a screen or debugging you may wish to display the computed eye aspect ratio on the frame as I have done on Lines 153 and 154. The frame is displayed to the actual screen on Lines 157 and 158.

The program is stopped when the ‘q’ key is pressed on a keyboard (Lines 157 and 158).
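The & 0xFF mask in the key check keeps only the lowest byte of whatever cv2.waitKey returns. The sketch below illustrates why that helps, using hand-picked integers in place of real return values. The assumption that some platforms set extra high bits is commonly reported but not something this tutorial verifies:

```python
def is_quit_key(raw_key):
    # keep the low 8 bits, then compare against the ASCII code for "q"
    return (raw_key & 0xFF) == ord("q")

# Plain ASCII code for "q".
print(is_quit_key(ord("q")))
# Hypothetical return value with extra high bits set on top of "q".
print(is_quit_key(0x100000 | ord("q")))
# -1 is what cv2.waitKey returns when no key was pressed in time.
print(is_quit_key(-1))
```

Only the first two calls report a quit request.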

You might be thinking, “I won’t have a keyboard hooked up in my car!” Well, if you’re debugging using your webcam and your computer at your desk, you certainly do. If you want to use the button on the TrafficHAT to turn on/off the drowsiness detection algorithm, that is perfectly fine — the first reader to post the solution in the comments to using the button to turn on and off the drowsiness detector with the Pi deserves an ice cold craft beer or a hot artisan coffee.
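As a starting point for that challenge, here is one possible sketch, untested on real hardware. The DetectorSwitch class and attach_to_traffichat function are hypothetical names, and the wiring assumes gpiozero's TrafficHat exposes its push button as th.button with a when_pressed callback:

```python
class DetectorSwitch:
    # Tiny state holder: the main loop would skip detection while
    # `enabled` is False.
    def __init__(self, enabled=True):
        self.enabled = enabled

    def toggle(self):
        self.enabled = not self.enabled

def attach_to_traffichat(switch):
    # Imported lazily so this sketch still runs on machines without GPIO.
    from gpiozero import TrafficHat
    th = TrafficHat()
    # Assumption: each button press flips the switch via gpiozero's callback.
    th.button.when_pressed = switch.toggle
    return th

switch = DetectorSwitch()
switch.toggle()  # simulate one button press without any hardware
print(switch.enabled)
```

Inside the frame loop you could then wrap the detection code in a check of switch.enabled, resetting COUNTER and ALARM_ON whenever detection is switched off.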

Finally, we clean up by closing any open windows and stopping the video stream (Lines 165 and 166).

Drowsiness detection results

To run this program on your own Raspberry Pi, be sure to use the “Downloads” section at the bottom of this post to grab the source code, face detection Haar cascade, and dlib facial landmark detector.

I didn’t have enough time to wire everything up in my car and record the screen while driving as I did previously. It would have been quite challenging to record the Raspberry Pi screen while driving as well.

Instead, I’ll demonstrate at my desk — you can then take this implementation and use it inside your own car for drowsiness detection as you see fit.

You can see an image of my setup below:

To run the program, simply execute the following command:

$ python pi_detect_drowsiness.py --cascade haarcascade_frontalface_default.xml \
    --shape-predictor shape_predictor_68_face_landmarks.dat --alarm 1

I have included a video of myself demoing the real-time drowsiness detector on the Raspberry Pi below:

Our Raspberry Pi 3 is able to accurately determine if I’m getting “drowsy”. We were able to accomplish this using our optimized code.

Disclaimer: I do not advise that you rely upon the hobbyist Raspberry Pi and this code to keep you awake at the wheel if you are in fact drowsy while driving. The best thing to do is to pull over and rest; walk around; or have a coffee/soda. Have fun with this project and show it off to your friends, but do not risk your life or that of others.

How do I run this program automatically when the Pi boots up?

This is a common question I receive. I have a blog post covering the answer here: Running a Python + OpenCV script on reboot.


Summary

In today’s blog post, we learned how to optimize facial landmarks on the Raspberry Pi by swapping out a HOG + Linear SVM-based face detector for a Haar cascade.

Haar cascades, while less accurate, are significantly faster than HOG + Linear SVM detectors.

Given the detections from the Haar cascade we were able to construct a dlib.rectangle object corresponding to the bounding box (x, y)-coordinates in the image. This object was fed into dlib’s facial landmark predictor which in turn gives us the set of localized facial landmarks on the face. From there, we applied the same algorithm we used in our previous post to detect drowsiness in a video stream.

I hope you enjoyed this tutorial!


Download the Source Code and FREE 17-page Resource Guide

Enter your email address below to get a .zip of the code and a FREE 17-page Resource Guide on Computer Vision, OpenCV, and Deep Learning. Inside you'll find my hand-picked tutorials, books, courses, and libraries to help you master CV and DL!