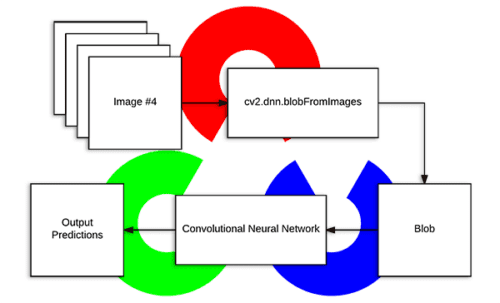

Today’s blog post is inspired by a number of PyImageSearch readers who have commented on previous deep learning tutorials wanting to understand what exactly OpenCV’s blobFromImage function is doing under the hood.

You see, to obtain (correct) predictions from deep neural networks you first need to preprocess your data.

In the context of deep learning and image classification, these preprocessing tasks normally involve:

- Mean subtraction

- Scaling by some factor

OpenCV’s new deep neural network (dnn) module contains two functions that can be used for preprocessing images and preparing them for classification via pre-trained deep learning models.

In today’s blog post we are going to take apart OpenCV’s cv2.dnn.blobFromImage and cv2.dnn.blobFromImages preprocessing functions and understand how they work.

To learn more about image preprocessing for deep learning via OpenCV, just keep reading.

Deep learning: How OpenCV’s blobFromImage works

OpenCV provides two functions to facilitate image preprocessing for deep learning classification:

- cv2.dnn.blobFromImage

- cv2.dnn.blobFromImages

These two functions perform:

- Mean subtraction

- Scaling

- And optionally channel swapping

In the remainder of this tutorial we’ll:

- Explore mean subtraction and scaling

- Examine the function signature of each deep learning preprocessing function

- Study these methods in detail

- And finally, apply OpenCV’s deep learning functions to a set of input images

Let’s go ahead and get started.

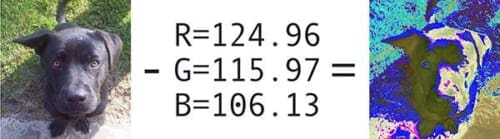

Deep learning and mean subtraction

Before we dive into an explanation of OpenCV’s deep learning preprocessing functions, we first need to understand mean subtraction. Mean subtraction is used to help combat illumination changes in the input images in our dataset. We can therefore view mean subtraction as a technique used to aid our Convolutional Neural Networks.

Before we even begin training our deep neural network, we first compute the average pixel intensity across all images in the training set for each of the Red, Green, and Blue channels.

This implies that we end up with three variables: $\mu_R$, $\mu_G$, and $\mu_B$.

Typically the resulting values are a 3-tuple consisting of the mean of the Red, Green, and Blue channels, respectively.

For example, the mean values for the ImageNet training set are R=123.68, G=116.78, and B=103.94 (you may have already encountered these values before if you have used a network that was pre-trained on ImageNet).
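As a minimal sketch of how such a 3-tuple could be computed, the NumPy snippet below averages each channel over a small stack of RGB images. The `train_images` array and its random contents are assumptions made purely for illustration; they are not the ImageNet data.

```python
import numpy as np

# Hypothetical stand-in for a training set: 10 RGB images of 32x32 pixels
rng = np.random.default_rng(42)
train_images = rng.integers(0, 256, size=(10, 32, 32, 3)).astype("float32")

# Average over the image, row, and column axes, leaving one mean per channel
mu_r, mu_g, mu_b = train_images.mean(axis=(0, 1, 2))
print("R={:.2f}, G={:.2f}, B={:.2f}".format(mu_r, mu_g, mu_b))
```

For a real dataset that does not fit in memory, the same result can be obtained by accumulating per-channel sums and pixel counts image by image.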

However, in some cases the mean Red, Green, and Blue values are computed separately at every pixel location rather than as a single value per channel, resulting in an MxN matrix of means for each channel. In this case the MxN matrix for each channel is then subtracted from the input image during training/testing.

Both methods are perfectly valid forms of mean subtraction; however, we tend to see the single-value-per-channel version used more often, especially for larger datasets.
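To make the difference between the two forms concrete, here is a small NumPy sketch. The dataset is synthetic and the variable names (`channel_means`, `mean_image`) are my own, chosen only for this illustration.

```python
import numpy as np

# Hypothetical training set: 8 RGB images, each 4x5 pixels (M=4, N=5)
rng = np.random.default_rng(0)
train_images = rng.integers(0, 256, size=(8, 4, 5, 3)).astype("float32")

# Form 1: a single mean value per channel -> shape (3,)
channel_means = train_images.mean(axis=(0, 1, 2))

# Form 2: one mean per pixel location -> an MxN matrix for each channel
mean_image = train_images.mean(axis=0)  # shape (4, 5, 3)

# Either form can be subtracted from an input image thanks to broadcasting
image = train_images[0]
centered_a = image - channel_means
centered_b = image - mean_image
print(channel_means.shape, mean_image.shape)
```

Note that the MxN form ties the mean to one fixed input size, while the 3-tuple can be applied to an image of any size.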

When we are ready to pass an image through our network (whether for training or testing), we subtract the mean, $\mu$, from each input channel of the input image:

$$R = R - \mu_R$$

$$G = G - \mu_G$$

$$B = B - \mu_B$$

We may also have a scaling factor, $\sigma$, which adds in a normalization:

$$R = (R - \mu_R) / \sigma$$

$$G = (G - \mu_G) / \sigma$$

$$B = (B - \mu_B) / \sigma$$

The value of $\sigma$ may be the standard deviation across the training set (thereby turning the preprocessing step into a standard score/z-score). However, $\sigma$ may also be manually set (versus calculated) to scale the input image space into a particular range. It really depends on the architecture, how the network was trained, and the techniques the implementing author is familiar with.
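The arithmetic above can be sketched in a few lines of NumPy. The `preprocess` helper, the tiny 2x2 image, and the mean and scaling values are all made up for this example; they are not taken from any particular network.

```python
import numpy as np

def preprocess(image, mean, sigma=1.0):
    """Subtract the per-channel mean, then divide by the scaling factor."""
    # Cast first: subtracting from uint8 pixels would wrap around below zero
    image = image.astype("float32")
    return (image - np.asarray(mean, dtype="float32")) / sigma

# Hypothetical 2x2 RGB image and made-up statistics
image = np.full((2, 2, 3), 128, dtype="uint8")
mean = (120.0, 110.0, 100.0)

centered = preprocess(image, mean)            # mean subtraction only
scaled = preprocess(image, mean, sigma=4.0)   # mean subtraction + scaling
print(centered[0, 0], scaled[0, 0])
```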

It’s important to note that not all deep learning architectures perform mean subtraction and scaling! Before you preprocess your images, be sure to read the relevant publication/documentation for the deep neural network you are using.

As you’ll find on your deep learning journey, some architectures perform mean subtraction only (thereby setting $\sigma = 1$). Other architectures perform both mean subtraction and scaling. Still other architectures choose to perform no mean subtraction or scaling. Always check the relevant publication you are implementing/using to verify the techniques the author is using.

Mean subtraction, scaling, and normalization are covered in more detail inside Deep Learning for Computer Vision with Python.

OpenCV’s blobFromImage and blobFromImages functions

Let’s start off by referring to the official OpenCV documentation for cv2.dnn.blobFromImage:

[blobFromImage] creates 4-dimensional blob from image. Optionally resizes and crops image from center, subtract mean values, scales values by scalefactor, swap Blue and Red channels.

Informally, a blob is just a (potential) collection of image(s) with the same spatial dimensions (i.e., width and height) and the same depth (number of channels) that have all been preprocessed in the same manner.

The cv2.dnn.blobFromImage and cv2.dnn.blobFromImages functions are near identical.

Let’s start with examining the cv2.dnn.blobFromImage function signature below:

blob = cv2.dnn.blobFromImage(image, scalefactor=1.0, size, mean, swapRB=True)

I’ve provided a discussion of each parameter below:

- image: This is the input image we want to preprocess before passing it through our deep neural network for classification.

- scalefactor: After we perform mean subtraction we can optionally scale our images by some factor. This value defaults to `1.0` (i.e., no scaling) but we can supply another value as well. It’s also important to note that scalefactor should be $1 / \sigma$ as we’re actually multiplying the input channels (after mean subtraction) by scalefactor.

- size: Here we supply the spatial size that the Convolutional Neural Network expects. For most current state-of-the-art neural networks this is either 224×224, 227×227, or 299×299.

- mean: These are our mean subtraction values. They can be a 3-tuple of the RGB means or they can be a single value in which case the supplied value is subtracted from every channel of the image. If you’re performing mean subtraction, ensure you supply the 3-tuple in `(R, G, B)` order when swapRB=True.

- swapRB: OpenCV assumes images are in BGR channel order; however, the `mean` value assumes we are using RGB order. To resolve this discrepancy we can swap the R and B channels in image by setting this value to `True`. In OpenCV 3.3.0 this channel swapping is performed by default; later OpenCV releases changed the default to `False`, so it is safest to pass swapRB explicitly.

The cv2.dnn.blobFromImage function returns a blob which is our input image after mean subtraction, normalizing, and channel swapping.
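To build some intuition for what happens to the pixel values, here is a rough NumPy approximation of those steps. It is only a sketch: it skips the resizing and cropping that OpenCV can also perform, and the name `blob_from_image_sketch` and its `swap_rb` argument are hypothetical, not part of OpenCV.

```python
import numpy as np

def blob_from_image_sketch(image, scalefactor=1.0, mean=(0, 0, 0), swap_rb=False):
    """Approximate blobFromImage for an already-resized HxWx3 image."""
    blob = image.astype("float32")
    if swap_rb:
        blob = blob[:, :, ::-1]                      # reverse channel order (BGR <-> RGB)
    blob = blob - np.asarray(mean, dtype="float32")  # mean subtraction
    blob = blob * scalefactor                        # scaling
    blob = blob.transpose(2, 0, 1)                   # HxWxC -> CxHxW
    return blob[np.newaxis, ...]                     # add the batch axis -> 1xCxHxW

# Hypothetical test image: first channel set to 200, the other two to 0
image = np.zeros((224, 224, 3), dtype="uint8")
image[:, :, 0] = 200
blob = blob_from_image_sketch(image, 1.0, (104, 117, 123))
print(blob.shape)          # (1, 3, 224, 224)
print(blob[0, :, 0, 0])    # per-channel values at the top-left pixel
```

The key point is the ordering: the mean is subtracted first, the result is multiplied by the scale factor, and only then is the image rearranged into the channels-first layout the network expects.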

The cv2.dnn.blobFromImages function is exactly the same:

blob = cv2.dnn.blobFromImages(images, scalefactor=1.0, size, mean, swapRB=True)

The only exception is that we can pass in multiple images, enabling us to batch process a set of images.

If you’re processing multiple images/frames, be sure to use the cv2.dnn.blobFromImages function as there is less function call overhead and you’ll be able to batch process the images/frames faster.
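Conceptually, batching means stacking the images along a new leading axis and preprocessing them in one shot. The NumPy sketch below illustrates the idea with four synthetic images that are assumed to be resized already; it is an illustration of the layout, not OpenCV’s actual implementation.

```python
import numpy as np

# Hypothetical batch: four already-resized 224x224 images in HxWxC layout
images = [np.full((224, 224, 3), i, dtype="uint8") for i in range(4)]
mean = np.asarray((104, 117, 123), dtype="float32")

# Stack into NxHxWxC, subtract the mean once, then move the channels forward
batch = np.stack(images).astype("float32") - mean
blob = batch.transpose(0, 3, 1, 2)   # NxHxWxC -> NxCxHxW
print(blob.shape)
```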

Deep learning with OpenCV’s blobFromImage function

Now that we’ve studied both the blobFromImage and blobFromImages functions, let’s apply them to a few example images and then pass them through a Convolutional Neural Network for classification.

As a prerequisite, you need OpenCV version 3.3.0 at a minimum. NumPy is a dependency of OpenCV’s Python bindings and imutils is my package of convenience functions available on GitHub and in the Python Package Index.

If you haven’t installed OpenCV, you’ll want to follow the latest tutorials available here, and be sure to specify OpenCV 3.3.0 or higher when you clone/download opencv and opencv_contrib.

The imutils package can be installed via pip:

$ pip install imutils

Assuming your image processing environment is ready to go, let’s open up a new file, name it blob_from_images.py, and insert the following code:

    # import the necessary packages
    from imutils import paths
    import numpy as np
    import cv2

    # load the class labels from disk
    rows = open("synset_words.txt").read().strip().split("\n")
    classes = [r[r.find(" ") + 1:].split(",")[0] for r in rows]

    # load our serialized model from disk
    net = cv2.dnn.readNetFromCaffe("bvlc_googlenet.prototxt",
        "bvlc_googlenet.caffemodel")

    # grab the paths to the input images
    imagePaths = sorted(list(paths.list_images("images/")))

First we import imutils, numpy, and cv2 (Lines 2-4).

Then we read synset_words.txt (the ImageNet class labels) and extract classes, our class labels, on Lines 7 and 8.
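If the list comprehension looks dense, the short sketch below runs the same parsing logic on a two-line sample. I am assuming each line of the labels file follows the layout "WordNet ID, space, comma-separated names"; the sample string stands in for the real file.

```python
# Hypothetical excerpt in the "<WordNet ID> <label>, <synonyms>" layout
sample = "n01440764 tench, Tinca tinca\nn01443537 goldfish, Carassius auratus\n"

rows = sample.strip().split("\n")

# Drop the leading ID (everything up to the first space), then keep only
# the first of the comma-separated names as the human-readable label
classes = [r[r.find(" ") + 1:].split(",")[0] for r in rows]
print(classes)  # ['tench', 'goldfish']
```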

To load our model from disk we use the DNN function, cv2.dnn.readNetFromCaffe, and specify bvlc_googlenet.prototxt as the filename parameter and bvlc_googlenet.caffemodel as the actual model file (Lines 11 and 12).

Note: You can grab the pre-trained Convolutional Neural Network, class labels text file, source code, and example images to this post using the “Downloads” section at the bottom of this tutorial.

Finally, we grab the paths to the input images on Line 15. If you’re using Windows you should change the path separator here to ensure you can correctly load the image paths.

Next, we’ll load images from disk and pre-process them using blobFromImage:

    # (1) load the first image from disk, (2) pre-process it by resizing
    # it to 224x224 pixels, and (3) construct a blob that can be passed
    # through the pre-trained network
    image = cv2.imread(imagePaths[0])
    resized = cv2.resize(image, (224, 224))
    blob = cv2.dnn.blobFromImage(resized, 1, (224, 224), (104, 117, 123))
    print("First Blob: {}".format(blob.shape))

In this block, we first load the image (Line 20) and then resize it to 224×224 (Line 21), the required input image dimensions for GoogLeNet.

Now we’ve reached the crux of this post.

On Line 22, we call cv2.dnn.blobFromImage which, as stated in the previous section, will create a 4-dimensional blob for use in our neural net.

Let’s print the shape of our blob so we can analyze it in the terminal later (Line 23).

Next, we’ll feed blob through GoogLeNet:

    # set the input to the pre-trained deep learning network and obtain
    # the output predicted probabilities for each of the 1,000 ImageNet
    # classes
    net.setInput(blob)
    preds = net.forward()

    # sort the probabilities (in descending) order, grab the index of the
    # top predicted label, and draw it on the input image
    idx = np.argsort(preds[0])[::-1][0]
    text = "Label: {}, {:.2f}%".format(classes[idx],
        preds[0][idx] * 100)
    cv2.putText(image, text, (5, 25), cv2.FONT_HERSHEY_SIMPLEX,
        0.7, (0, 0, 255), 2)

    # show the output image
    cv2.imshow("Image", image)
    cv2.waitKey(0)

If you’re familiar with recent deep learning posts on this blog, the above lines should look familiar.

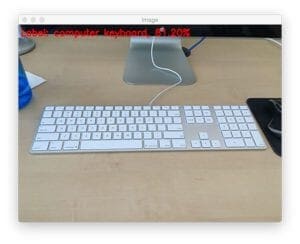

We feed the blob through the network (Lines 28 and 29) and grab the predictions, preds.

Then we sort preds (Line 33) with the most confident predictions at the front of the list, and generate a label text to display on the image. The label text consists of the class label and the prediction percentage value for the top prediction (Lines 34 and 35).

From there, we write the label text at the top of the image (Lines 36 and 37) followed by displaying the image on the screen and waiting for a keypress before moving on (Lines 40 and 41).
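To see the sorting step in isolation, here is a small sketch using a made-up probability vector over five classes instead of the real 1,000-class GoogLeNet output. The class names and probabilities are invented for the example.

```python
import numpy as np

# Hypothetical network output: one row of probabilities over five classes
classes = ["beer glass", "eel", "soccer ball", "jellyfish", "vending machine"]
preds = np.array([[0.05, 0.10, 0.60, 0.05, 0.20]])

# argsort is ascending, so reverse it to rank from most to least confident
ranked = np.argsort(preds[0])[::-1]
idx = ranked[0]
text = "Label: {}, {:.2f}%".format(classes[idx], preds[0][idx] * 100)
print(text)

# the same ranking also gives us the top-3 predictions for free
for i in ranked[:3]:
    print(classes[i], preds[0][i])
```

If you only ever need the single best class, `np.argmax(preds[0])` gives the same index without sorting the whole vector.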

Now it’s time to use the plural form of the blobFromImage function.

Here we’ll do (nearly) the same thing, except we’ll instead create and populate a list of images followed by passing the list as a parameter to blobFromImages:

    # initialize the list of images we'll be passing through the network
    images = []

    # loop over the input images (excluding the first one since we
    # already classified it), pre-process each image, and update the
    # `images` list
    for p in imagePaths[1:]:
        image = cv2.imread(p)
        image = cv2.resize(image, (224, 224))
        images.append(image)

    # convert the images list into an OpenCV-compatible blob
    blob = cv2.dnn.blobFromImages(images, 1, (224, 224), (104, 117, 123))
    print("Second Blob: {}".format(blob.shape))

First we initialize our images list (Line 44), and then, using the imagePaths, we read, resize, and append each image to the list (Lines 49-52).

Using list slicing, we’ve omitted the first image from imagePaths on Line 49.

From there, we pass the images into cv2.dnn.blobFromImages as the first parameter on Line 55. All other parameters to cv2.dnn.blobFromImages are identical to cv2.dnn.blobFromImage above.

For analysis later we print blob.shape on Line 56.

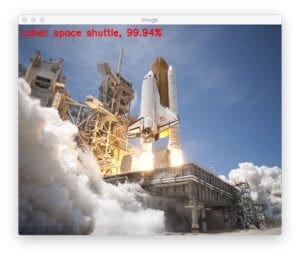

We’ll next pass the blob through GoogLeNet and write the class label and prediction at the top of each image:

    # set the input to our pre-trained network and obtain the output
    # class label predictions
    net.setInput(blob)
    preds = net.forward()

    # loop over the input images
    for (i, p) in enumerate(imagePaths[1:]):
        # load the image from disk
        image = cv2.imread(p)

        # find the top class label from the `preds` list and draw it on
        # the image
        idx = np.argsort(preds[i])[::-1][0]
        text = "Label: {}, {:.2f}%".format(classes[idx],
            preds[i][idx] * 100)
        cv2.putText(image, text, (5, 25), cv2.FONT_HERSHEY_SIMPLEX,
            0.7, (0, 0, 255), 2)

        # display the output image
        cv2.imshow("Image", image)
        cv2.waitKey(0)

The remaining code is essentially the same as above, only our for loop now handles looping through each of the imagePaths (again, omitting the first one as we have already classified it).
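Because a batched forward pass returns one row of probabilities per image, the top label for every image can also be found in a single vectorized call. The sketch below uses a tiny invented `preds` matrix (3 images, 4 classes) rather than real network output.

```python
import numpy as np

# Hypothetical batch output: 3 images x 4 classes
preds = np.array([
    [0.1, 0.7, 0.1, 0.1],
    [0.6, 0.2, 0.1, 0.1],
    [0.2, 0.2, 0.1, 0.5],
])

top_idx = preds.argmax(axis=1)                      # best class per image
top_prob = preds[np.arange(len(preds)), top_idx]    # its probability
print(top_idx.tolist(), top_prob.tolist())
```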

And that’s it! Let’s see the script in action in the next section.

OpenCV blobFromImage and blobFromImages results

Now we’ve reached the fun part.

Go ahead and use the “Downloads” section of this blog post to download the source code, example images, and pre-trained neural network. You will need the additional files in order to execute the code.

From there, fire up a terminal and run the following command:

$ python blob_from_images.py

The first terminal output is with respect to the first image found in the images folder where we apply the cv2.dnn.blobFromImage function:

First Blob: (1, 3, 224, 224)

The resulting beer glass image is displayed on the screen:

That full beer glass makes me thirsty. But before I enjoy a beer myself, I’ll explain why the shape of the blob is (1, 3, 224, 224).

The resulting tuple has the following format:

(num_images=1, num_channels=3, height=224, width=224)

Since we’ve only processed one image, we only have one entry in our blob. The channel count is three for BGR channels. And finally 224×224 is the spatial height and width for our input image.
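A handy way to check your understanding of this layout is to unpack the shape and convert a blob back into an ordinary image array. The sketch below uses an all-zeros placeholder blob and assumes a scale factor of 1.0 together with the (104, 117, 123) mean used in this post.

```python
import numpy as np

# Hypothetical blob with the same shape as the one printed in the terminal
blob = np.zeros((1, 3, 224, 224), dtype="float32")
num_images, num_channels, height, width = blob.shape

# Undo the layout change: take image 0 and move channels last (CxHxW -> HxWxC)
image = blob[0].transpose(1, 2, 0)

# Add the mean back (assumes scalefactor 1.0) and return to 8-bit pixels
mean = np.asarray((104, 117, 123), dtype="float32")
image = np.clip(image + mean, 0, 255).astype("uint8")
print(image.shape)
```

Viewing the recovered image is a quick sanity check that resizing and channel ordering did what you expected before you start debugging the network itself.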

Next, let’s build a blob from the remaining four input images.

The second blob’s shape is:

Second Blob: (4, 3, 224, 224)

Since this blob contains 4 images, num_images=4. The remaining dimensions are the same as the first, single-image blob.

I’ve included a sample of correctly classified images below:

Summary

In today’s tutorial we examined OpenCV’s blobFromImage and blobFromImages deep learning functions.

These methods are used to prepare input images for classification via pre-trained deep learning models.

Both blobFromImage and blobFromImages perform mean subtraction and scaling. We can also swap the Red and Blue channels of the image depending on channel ordering. Nearly all state-of-the-art deep learning models perform mean subtraction and scaling — the benefit here is that OpenCV makes these preprocessing tasks dead simple.

If you’re interested in studying deep learning in more detail, be sure to take a look at my brand new book, Deep Learning for Computer Vision with Python.

Inside the book you’ll discover:

- Super practical walkthroughs that present solutions to actual, real-world image classification problems, challenges, and competitions.

- Detailed, thorough experiments (with highly documented code) enabling you to reproduce state-of-the-art results.

- My favorite “best practices” to improve network accuracy. These techniques alone will save you enough time to pay for the book multiple times over.

- ..and much more!

Sound good?

Click here to start your journey to deep learning mastery.

Otherwise, be sure to enter your email address in the form below to be notified when future deep learning tutorials are published here on the PyImageSearch blog.

See you next week!
