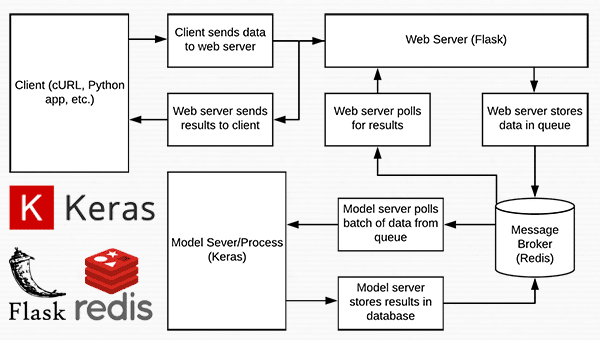

Shipping deep learning models to production is a non-trivial task.

If you don’t believe me, take a second and look at the “tech giants” such as Amazon, Google, Microsoft, etc. — nearly all of them provide some method to ship your machine learning/deep learning models to production in the cloud.

Going with a model deployment service is perfectly fine and acceptable…but what if you wanted to own the entire process and not rely on external services?

This type of situation is more common than you may think. Consider:

- An in-house project where you cannot move sensitive data outside your network

- A project that specifies that the entire infrastructure must reside within the company

- A government organization that needs a private cloud

- A startup that is in “stealth mode” and needs to stress test their service/application in-house

How would you go about shipping your deep learning models to production in these situations, and perhaps most importantly, making it scalable at the same time?

Today’s post is the final chapter in our three part series on building a deep learning model server REST API:

- Part one (which was posted on the official Keras.io blog!) is a simple Keras + deep learning REST API which is intended for single threaded use with no concurrent requests. This method is a perfect fit if this is your first time building a deep learning web server or if you’re working on a home/hobby project.

- In part two we demonstrated how to leverage Redis along with message queueing/message brokering paradigms to efficiently batch process incoming inference requests (but with a small caveat on server threading that could cause problems).

- In the final part of this series, I’ll show you how to resolve these server threading issues, further scale our method, provide benchmarks, and demonstrate how to efficiently scale deep learning in production using Keras, Redis, Flask, and Apache.

As the results of our stress test will demonstrate, our single GPU machine can easily handle 500 concurrent requests (0.05 second delay in between each one) without ever breaking a sweat — this performance continues to scale as well.

To learn how to ship your own deep learning models to production using Keras, Redis, Flask, and Apache, just keep reading.

Deep learning in production with Keras, Redis, Flask, and Apache

2020-06-16 Update: This blog post is now TensorFlow 2+ compatible!

The code for this blog post is primarily based on our previous post, but with some minor modifications — the first part of today’s guide will review these changes along with our project structure.

From there, we’ll move on to configuring our deep learning web application, including installing and configuring any packages you may need (Redis, Apache, etc.).

Finally, we’ll stress test our server and benchmark our results.

For a quick overview of our deep learning production system (including a demo) be sure to watch the video above!

Our deep learning project structure

Our project structure is as follows:

├── helpers.py ├── jemma.png ├── keras_rest_api_app.wsgi ├── run_model_server.py ├── run_web_server.py ├── settings.py ├── simple_request.py └── stress_test.py

Let’s review the important files:

run_web_server.pycontains all our Flask web server code — Apache will load this when starting our deep learning web app.run_model_server.pywill:- Load our Keras model from disk

- Continually poll Redis for new images to classify

- Classify images (batch processing them for efficiency)

- Write the inference results back to Redis so they can be returned to the client via Flask

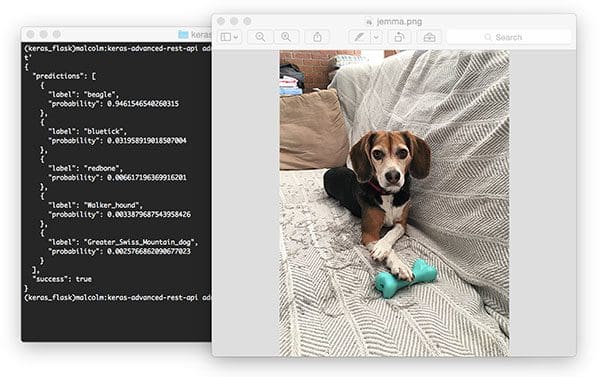

settings.pycontains all Python-based settings for our deep learning productions service, such as Redis host/port information, image classification settings, image queue name, etc.helpers.pycontains utility functions that bothrun_web_server.pyandrun_model_server.pywill use (namelybase64encoding).keras_rest_api_app.wsgicontains our WSGI settings so we can serve the Flask app from our Apache server.simple_request.pycan be used to programmatically consume the results of our deep learning API service.jemma.pngis a photo of my family’s beagle. We’ll be using her as an example image when calling the REST API to validate it is indeed working.- Finally, we’ll use

stress_test.pyto stress our server and measure image classification throughout.

As described last week, we have a single endpoint on our Flask server, /predict . This method lives in run_web_server.py and will compute the classification for an input image on demand. Image pre-processing is also handled in run_web_server.py .

In order to make our server production-ready, I’ve pulled out the classify_process function from last week’s single script and placed it in run_model_server.py . This script is very important as it will load our Keras model and grab images from our image queue in Redis for classification. Results are written back to Redis (the /predict endpoint and corresponding function in run_web_server.py monitors Redis for results to send back to the client).

But what good is a deep learning REST API server unless we know its capabilities and limitations?

In stress_test.py , we test our server. We’ll accomplish this by kicking off 500 concurrent threads which will send our images to the server for classification in parallel. I recommend running this on the server localhost to start, and then running it from a client that is off site.

Building our deep learning web app

Nearly every single line of code used in this project comes from our previous post on building a scalable deep learning REST API — the only change is that we are moving some of the code to separate files to facilitate scalability in a production environment.

As a matter of completeness I’ll be including the source code to each file in this blog post (and in the “Downloads” section of this blog post). For a detailed review of the files, please see the previous post.

Settings and configurations

# initialize Redis connection settings REDIS_HOST = "localhost" REDIS_PORT = 6379 REDIS_DB = 0 # initialize constants used to control image spatial dimensions and # data type IMAGE_WIDTH = 224 IMAGE_HEIGHT = 224 IMAGE_CHANS = 3 IMAGE_DTYPE = "float32" # initialize constants used for server queuing IMAGE_QUEUE = "image_queue" BATCH_SIZE = 32 SERVER_SLEEP = 0.25 CLIENT_SLEEP = 0.25

In settings.py you’ll be able to change parameters for the server connectivity, image dimensions + data type, and server queuing.

Helper utilities

# import the necessary packages

import numpy as np

import base64

import sys

def base64_encode_image(a):

# base64 encode the input NumPy array

return base64.b64encode(a).decode("utf-8")

def base64_decode_image(a, dtype, shape):

# if this is Python 3, we need the extra step of encoding the

# serialized NumPy string as a byte object

if sys.version_info.major == 3:

a = bytes(a, encoding="utf-8")

# convert the string to a NumPy array using the supplied data

# type and target shape

a = np.frombuffer(base64.decodestring(a), dtype=dtype)

a = a.reshape(shape)

# return the decoded image

return a

The helpers.py file contains two functions — one for base64 encoding and the other for decoding.

Encoding is necessary so that we can serialize + store our image in Redis. Likewise, decoding is necessary so that we can deserialize the image into NumPy array format prior to pre-processing.

The deep learning web server

# import the necessary packages

from tensorflow.keras.preprocessing.image import img_to_array

from tensorflow.keras.applications.resnet50 import preprocess_input

from PIL import Image

import numpy as np

import settings

import helpers

import flask

import redis

import uuid

import time

import json

import io

# initialize our Flask application and Redis server

app = flask.Flask(__name__)

db = redis.StrictRedis(host=settings.REDIS_HOST,

port=settings.REDIS_PORT, db=settings.REDIS_DB)

def prepare_image(image, target):

# if the image mode is not RGB, convert it

if image.mode != "RGB":

image = image.convert("RGB")

# resize the input image and preprocess it

image = image.resize(target)

image = img_to_array(image)

image = np.expand_dims(image, axis=0)

image = preprocess_input(image)

# return the processed image

return image

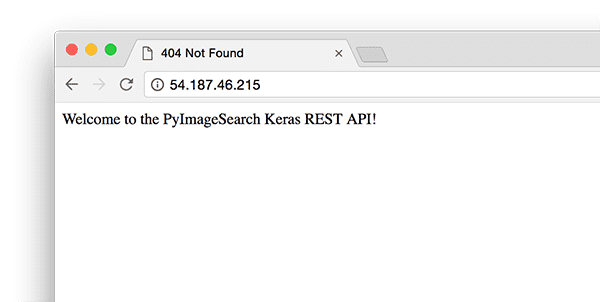

@app.route("/")

def homepage():

return "Welcome to the PyImageSearch Keras REST API!"

@app.route("/predict", methods=["POST"])

def predict():

# initialize the data dictionary that will be returned from the

# view

data = {"success": False}

# ensure an image was properly uploaded to our endpoint

if flask.request.method == "POST":

if flask.request.files.get("image"):

# read the image in PIL format and prepare it for

# classification

image = flask.request.files["image"].read()

image = Image.open(io.BytesIO(image))

image = prepare_image(image,

(settings.IMAGE_WIDTH, settings.IMAGE_HEIGHT))

# ensure our NumPy array is C-contiguous as well,

# otherwise we won't be able to serialize it

image = image.copy(order="C")

# generate an ID for the classification then add the

# classification ID + image to the queue

k = str(uuid.uuid4())

image = helpers.base64_encode_image(image)

d = {"id": k, "image": image}

db.rpush(settings.IMAGE_QUEUE, json.dumps(d))

# keep looping until our model server returns the output

# predictions

while True:

# attempt to grab the output predictions

output = db.get(k)

# check to see if our model has classified the input

# image

if output is not None:

# add the output predictions to our data

# dictionary so we can return it to the client

output = output.decode("utf-8")

data["predictions"] = json.loads(output)

# delete the result from the database and break

# from the polling loop

db.delete(k)

break

# sleep for a small amount to give the model a chance

# to classify the input image

time.sleep(settings.CLIENT_SLEEP)

# indicate that the request was a success

data["success"] = True

# return the data dictionary as a JSON response

return flask.jsonify(data)

# for debugging purposes, it's helpful to start the Flask testing

# server (don't use this for production

if __name__ == "__main__":

print("* Starting web service...")

app.run()

Here in run_web_server.py , you’ll see predict , the function associated with our REST API /predict endpoint.

The predict function pushes the encoded image into the Redis queue and then continually loops/polls until it obains the prediction data back from the model server. We then JSON-encode the data and instruct Flask to send the data back to the client.

The deep learning model server

# import the necessary packages

from tensorflow.keras.applications import ResNet50

from tensorflow.keras.applications.resnet50 import decode_predictions

import numpy as np

import settings

import helpers

import redis

import time

import json

# connect to Redis server

db = redis.StrictRedis(host=settings.REDIS_HOST,

port=settings.REDIS_PORT, db=settings.REDIS_DB)

def classify_process():

# load the pre-trained Keras model (here we are using a model

# pre-trained on ImageNet and provided by Keras, but you can

# substitute in your own networks just as easily)

print("* Loading model...")

model = ResNet50(weights="imagenet")

print("* Model loaded")

# continually pool for new images to classify

while True:

# attempt to grab a batch of images from the database, then

# initialize the image IDs and batch of images themselves

queue = db.lrange(settings.IMAGE_QUEUE, 0,

settings.BATCH_SIZE - 1)

imageIDs = []

batch = None

# loop over the queue

for q in queue:

# deserialize the object and obtain the input image

q = json.loads(q.decode("utf-8"))

image = helpers.base64_decode_image(q["image"],

settings.IMAGE_DTYPE,

(1, settings.IMAGE_HEIGHT, settings.IMAGE_WIDTH,

settings.IMAGE_CHANS))

# check to see if the batch list is None

if batch is None:

batch = image

# otherwise, stack the data

else:

batch = np.vstack([batch, image])

# update the list of image IDs

imageIDs.append(q["id"])

# check to see if we need to process the batch

if len(imageIDs) > 0:

# classify the batch

print("* Batch size: {}".format(batch.shape))

preds = model.predict(batch)

results = decode_predictions(preds)

# loop over the image IDs and their corresponding set of

# results from our model

for (imageID, resultSet) in zip(imageIDs, results):

# initialize the list of output predictions

output = []

# loop over the results and add them to the list of

# output predictions

for (imagenetID, label, prob) in resultSet:

r = {"label": label, "probability": float(prob)}

output.append(r)

# store the output predictions in the database, using

# the image ID as the key so we can fetch the results

db.set(imageID, json.dumps(output))

# remove the set of images from our queue

db.ltrim(settings.IMAGE_QUEUE, len(imageIDs), -1)

# sleep for a small amount

time.sleep(settings.SERVER_SLEEP)

# if this is the main thread of execution start the model server

# process

if __name__ == "__main__":

classify_process()

The run_model_server.py file houses our classify_process function. This function loads our model and then runs predictions on a batch of images. This process is ideally excuted on a GPU, but a CPU can also be used.

In this example, for sake of simplicity, we’ll be using ResNet50 pre-trained on the ImageNet dataset. You can modify classify_process to utilize your own deep learning models.

The WSGI configuration

# add our app to the system path import sys sys.path.insert(0, "/var/www/html/keras-complete-rest-api") # import the application and away we go... from run_web_server import app as application

Our next file, keras_rest_api_app.wsgi , is a new component to our deep learning REST API compared to last week.

This WSGI configuration file adds our server directory to the system path and imports the web app to kick off all the action. We point to this file in our Apache server settings file, /etc/apache2/sites-available/000-default.conf , later in this blog post.

The stress test

# import the necessary packages

from threading import Thread

import requests

import time

# initialize the Keras REST API endpoint URL along with the input

# image path

KERAS_REST_API_URL = "http://localhost/predict"

IMAGE_PATH = "jemma.png"

# initialize the number of requests for the stress test along with

# the sleep amount between requests

NUM_REQUESTS = 500

SLEEP_COUNT = 0.05

def call_predict_endpoint(n):

# load the input image and construct the payload for the request

image = open(IMAGE_PATH, "rb").read()

payload = {"image": image}

# submit the request

r = requests.post(KERAS_REST_API_URL, files=payload).json()

# ensure the request was sucessful

if r["success"]:

print("[INFO] thread {} OK".format(n))

# otherwise, the request failed

else:

print("[INFO] thread {} FAILED".format(n))

# loop over the number of threads

for i in range(0, NUM_REQUESTS):

# start a new thread to call the API

t = Thread(target=call_predict_endpoint, args=(i,))

t.daemon = True

t.start()

time.sleep(SLEEP_COUNT)

# insert a long sleep so we can wait until the server is finished

# processing the images

time.sleep(300)

Our stress_test.py script will help us to test the server and determine its limitations. I always recommend stress testing your deep learning REST API server so that you know if (and more importantly, when) you need to add additional GPUs, CPUs, or RAM. This script kicks off NUM_REQUESTS threads and POSTs to the /predict endpoint. It’s up to our Flask web app from there.

Configuring our deep learning production environment

This section will discuss how to install and configure the necessary prerequisites for our deep learning API server.

We’ll use my PyImageSearch Deep Learning AMI (freely available to you to use) as a base. I chose a p2.xlarge instance with a single GPU for this example.

You can modify the code in this example to leverage multiple GPUs as well by:

- Running multiple model server processes

- Maintaining an image queue for each GPU and corresponding model process

However, keep in mind that your machine will still be limited by I/O. It may be beneficial to instead utilize multiple machines, each with 1-4 GPUs than trying to scale to 8 or 16 GPUs on a single machine.

Compile and installing Redis

Redis, an efficient in-memory database, will act as our queue/message broker.

Obtaining and installing Redis is very easy:

$ wget http://download.redis.io/redis-stable.tar.gz $ tar xvzf redis-stable.tar.gz $ cd redis-stable $ make $ sudo make install

Create your deep learning Python virtual environment

Be sure to install virtualenv and virtualenvwrapper to manage Python virtual environments on your system. You can follow either of these guides:

Please note that PyImageSearch does not recommend or support Windows for CV/DL projects.

After following those instructions, you’ll have a Python 3 virtual environment named dl4cv.

From there, you’ll need the following additional packages:

$ workon dl4cv $ pip install flask $ pip install gevent $ pip install requests $ pip install redis

Install the Apache web server

Other web servers can be used such as nginx but since I have more experience with Apache (and therefore more familiar with Apache in general), I’ll be using Apache for this example.

Apache can be installed via:

$ sudo apt-get install apache2

If you’ve created a virtual environment using Python 3 you’ll want to install the Python 3 WSGI + Apache module:

$ sudo apt-get install libapache2-mod-wsgi-py3 $ sudo a2enmod wsgi

To validate that Apache is installed, open up a browser and enter the IP address of your web server. If you can’t see the server splash screen then be sure to open up Port 80 and Port 5000.

In my case, the IP address of my server is 54.187.46.215 (yours will be different). Entering this in a browser I see:

…which is the default Apache homepage.

Sym-link your Flask + deep learning app

By default, Apache serves content from /var/www/html . I would recommend creating a sym-link from /var/www/html to your Flask web app.

I have uploaded my deep learning + Flask app to my home directory in a directory named keras-complete-rest-api :

$ ls ~ keras-complete-rest-api

I can sym-link it to /var/www/html via:

$ cd /var/www/html/ $ sudo ln -s ~/keras-complete-rest-api keras-complete-rest-api

Update your Apache configuration to point to the Flask app

In order to configure Apache to point to our Flask app, we need to edit /etc/apache2/sites-available/000-default.conf .

Open in your favorite text editor (here I’ll be using vi ):

$ sudo vi /etc/apache2/sites-available/000-default.conf

At the top of the file supply your WSGIPythonHome (path to Python bin directory) and WSGIPythonPath (path to Python site-packages directory) configurations:

WSGIPythonHome /home/ubuntu/.virtualenvs/keras_flask/bin WSGIPythonPath /home/ubuntu/.virtualenvs/keras_flask/lib/python3.5/site-packages <VirtualHost *:80> ... </VirtualHost>

2020-06-18 Update: On Ubuntu 18.04, you may need to change the first line to:

WSGIPythonHome /home/ubuntu/.virtualenvs/keras_flask

… where /bin is eliminated.

Since we are using Python virtual environments in this example (I have named my virtual environment keras_flask ), we supply the path to the bin and site-packages directory for the Python virtual environment.

Then in body of <VirtualHost> , right after ServerAdmin and DocumentRoot , add:

<VirtualHost *:80>

...

WSGIDaemonProcess keras_rest_api_app threads=10

WSGIScriptAlias / /var/www/html/keras-complete-rest-api/keras_rest_api_app.wsgi

<Directory /var/www/html/keras-complete-rest-api>

WSGIProcessGroup keras_rest_api_app

WSGIApplicationGroup %{GLOBAL}

Order deny,allow

Allow from all

</Directory>

...

</VirtualHost>

Sym-link CUDA libraries (optional, GPU only)

If you’re using your GPU for deep learning and want to leverage CUDA (and why wouldn’t you), Apache unfortunately has no knowledge of CUDA’s *.so libraries in /usr/local/cuda/lib64 .

I’m not sure what the “most correct” way instruct to Apache of where these CUDA libraries live, but the “total hack” solution is to sym-link all files from /usr/local/cuda/lib64 to /usr/lib :

$ cd /usr/lib $ sudo ln -s /usr/local/cuda/lib64/* ./

If there is a better way to make Apache aware of the CUDA libraries, please let me know in the comments.

Restart the Apache web server

Once you’ve edited your Apache configuration file and optionally sym-linked the CUDA deep learning libraries, be sure to restart your Apache server via:

$ sudo service apache2 restart

Testing your Apache web server + deep learning endpoint

To test that Apache is properly configured to deliver your Flask + deep learning app, refresh your web browser:

You should now see the text “Welcome to the PyImageSearch Keras REST API!” in your browser.

Once you’ve reached this stage your Flask deep learning app should be ready to go.

All that said, if you run into any problems make sure you refer to the next section…

TIP: Monitor your Apache error logs if you run into trouble

I’ve been using Python + web frameworks such as Flask and Django for years and I still make mistakes when getting my environment configured properly.

While I wish there was a bullet proof way to make sure everything works out of the gate, the truth is something is likely going to gum up the works along the way.

The good news is that WSGI logs Python events, including failures, to the server log.

On Ubuntu, the Apache server log is located in /var/log/apache2/ :

$ ls /var/log/apache2 access.log error.log other_vhosts_access.log

When debugging, I often keep a terminal open that runs:

$ tail -f /var/log/apache2/error.log

…so I can see the second an error rolls in.

Use the error log to help you get Flask up and running on your server.

Starting your deep learning model server

Your Apache server should already be running. If not, you can start it via:

$ sudo service apache2 start

You’ll then want to start the Redis store:

$ redis-server

And in a separate terminal launch the Keras model server:

$ python run_model_server.py * Loading model... ... * Model loaded

From there try to submit an example image to your deep learning API service:

$ curl -X POST -F image=@jemma.png 'http://localhost/predict'

{

"predictions": [

{

"label": "beagle",

"probability": 0.9461532831192017

},

{

"label": "bluetick",

"probability": 0.031958963721990585

},

{

"label": "redbone",

"probability": 0.0066171870566904545

},

{

"label": "Walker_hound",

"probability": 0.003387963864952326

},

{

"label": "Greater_Swiss_Mountain_dog",

"probability": 0.0025766845792531967

}

],

"success": true

}

If everything is working, you should receive formatted JSON output back from the deep learning API model server with the class predictions + probabilities.

Stress testing your deep learning REST API

Of course, this is just an example. Let’s stress test our deep learning REST API.

Open up another terminal and execute the following command:

$ python stress_test.py [INFO] thread 3 OK [INFO] thread 0 OK [INFO] thread 1 OK ... [INFO] thread 497 OK [INFO] thread 499 OK [INFO] thread 498 OK

In your run_model_server.py output you’ll start to see the following lines logged to the terminal:

* Batch size: (4, 224, 224, 3) * Batch size: (9, 224, 224, 3) * Batch size: (9, 224, 224, 3) * Batch size: (8, 224, 224, 3) ... * Batch size: (2, 224, 224, 3) * Batch size: (10, 224, 224, 3) * Batch size: (7, 224, 224, 3)

Even with a new request coming in every 0.05 seconds our batch size never gets larger than ~10-12 images per batch.

Our model server handles the load easily without breaking a sweat and it can easily scale beyond this.

If you do overload the server (perhaps your batch size is too big and you run out of GPU memory with an error message), you should stop the server, and use the Redis CLI to clear the queue:

$ redis-cli > FLUSHALL

From there you can adjust settings in settings.py and /etc/apache2/sites-available/000-default.conf . Then you may restart the server.

For a full demo, please see the video below:

Recommendations for deploying your own deep learning models to production

One of the best pieces of advice I can give is to keep your data, in particular your Redis server, close to the GPU.

You may be tempted to spin up a giant Redis server with hundreds of gigabytes of RAM to handle multiple image queues and serve multiple GPU machines.

The problem here will be I/O latency and network overhead.

Assuming 224 x 224 x 3 images represented as float32 array, a batch size of 32 images will be ~19MB of data. This implies that for each batch request from a model server, Redis will need to pull out 19MB of data and send it to the server.

On fast switches this isn’t a big deal, but you should consider running both your model server and Redis on the same server to keep your data close to the GPU.

What's next? We recommend PyImageSearch University.

86+ total classes • 115+ hours hours of on-demand code walkthrough videos • Last updated: May 2026

★★★★★ 4.84 (128 Ratings) • 16,000+ Students Enrolled

I strongly believe that if you had the right teacher you could master computer vision and deep learning.

Do you think learning computer vision and deep learning has to be time-consuming, overwhelming, and complicated? Or has to involve complex mathematics and equations? Or requires a degree in computer science?

That’s not the case.

All you need to master computer vision and deep learning is for someone to explain things to you in simple, intuitive terms. And that’s exactly what I do. My mission is to change education and how complex Artificial Intelligence topics are taught.

If you're serious about learning computer vision, your next stop should be PyImageSearch University, the most comprehensive computer vision, deep learning, and OpenCV course online today. Here you’ll learn how to successfully and confidently apply computer vision to your work, research, and projects. Join me in computer vision mastery.

Inside PyImageSearch University you'll find:

- ✓ 86+ courses on essential computer vision, deep learning, and OpenCV topics

- ✓ 86 Certificates of Completion

- ✓ 115+ hours hours of on-demand video

- ✓ Brand new courses released regularly, ensuring you can keep up with state-of-the-art techniques

- ✓ Pre-configured Jupyter Notebooks in Google Colab

- ✓ Run all code examples in your web browser — works on Windows, macOS, and Linux (no dev environment configuration required!)

- ✓ Access to centralized code repos for all 540+ tutorials on PyImageSearch

- ✓ Easy one-click downloads for code, datasets, pre-trained models, etc.

- ✓ Access on mobile, laptop, desktop, etc.

Summary

In today’s blog post we learned how to deploy a deep learning model to production using Keras, Redis, Flask, and Apache.

Most of the tools we used here are interchangeable. You could swap in TensorFlow or PyTorch for Keras. Django could be used instead of Flask. Nginx could be swapped in for Apache.

The only tool I would not recommend swapping out is Redis. Redis is arguably the best solution for in-memory data stores. Unless you have a specific reason to not use Redis, I would suggest utilizing Redis for your queuing operations.

Finally, we stress tested our deep learning REST API.

We submitted a total of 500 requests for image classification to our server with 0.05 second delays in between each — our server was not phased (the batch size for the CNN was never more than ~37% full).

Furthermore, this method is easily scalable to additional servers. If you place these servers behind a load balancer you can easily scale this method further.

I hope you enjoyed today’s blog post!

To be notified when future blog posts are published on PyImageSearch, be sure to enter your email address in the form below!

Download the Source Code and FREE 17-page Resource Guide

Enter your email address below to get a .zip of the code and a FREE 17-page Resource Guide on Computer Vision, OpenCV, and Deep Learning. Inside you'll find my hand-picked tutorials, books, courses, and libraries to help you master CV and DL!

Hi Adrian,

Thanks for the post sharing a end-to-end workflow of shipping an app utilizing Deep Learning.

I have built a app recognizing cats using Flask, TensorFlow, CNN in similar way months ago but I decided to built another one by following your post to practice again. Thanks for the material again 😀

Let me put the link of the app for anyone want further learning:

https://github.com/leemengtaiwan/cat-recognition-app

Great job, thanks for sharing! 🙂

Great article Adrian, as always. I am a flask+ apache guy myself but I found out that flask works better with nginx+gunicorn. You can give it a try if you have the time and maybe benchmark those two to see which fits better fro production environments

Great suggestion, thanks Anastasios. I don’t think I’ll have the time to benchmark with nginx + gunicorn, but if any other readers would like to try and post the results in the comments that would be great!

Hi Adrian,

Thank you for the post ! Helped a lot !

I replaced Resnet50 with InceptionV3 as I did not have good internet to download the Resnet weights then. Also changed the image size to 299. One thing I noticed is I got wrong prediction results when I execute the curl command to test the api. So, I checked by reading the image from file system in the function classify_process() itself, which gave correct results. My image size is about 409*560*3 and format is PNG. Something is getting changed in the image data when the image is serialized and encoded or decoded ?

Can you help me with this ?

Hey Prakruti — I’m not sure why you would receive different predictions when using cURL vs. the standard Python script. The actual image dimensions should not matter as they will be resized to a fixed size prior to being passed through the .predict function. Which version of Keras and Python are you using?

What’s the point of having the redis queue? If you removed it you would save the step of encoding/decoding everything into base64, which is faster.

The only reason that I can imagine is for load balancing, but you’re suggesting a load balancer at host level.

You need a queue to handle incoming images, regardless of whether you are batch processing them or not. Neural networks + GPUs are most efficient when batch processing. Sure, we need to encode/decode images but if you don’t you will have zero control over the queue size. Furthermore, you can easily run into situations where there is not enough memory on the GPU to handle all images. Queueing controls this.

What’s the point of encoding/decoding everything into base64?

Hi Adrian,

Loved all three parts. I implemented the same following your blogs, however, I am curious to know how using Kafka would scale compared to Redis. Would you be interested in shedding some light on it?

Also, I tried replacing apache with just gunicorn and the response time wasn’t great under the stress (used Jmeter) and there were many “connection failed exceptions” while running it on the local machine.

I haven’t used Apache Kafka at scale before so I cannot provide any intuitive advise. But it would make for a great blog post. I will consider this for the future but I’m not sure if/when I’ll be able to do it.

I try to set this on aws but i get “You don’t have permission to access / on this server.

Apache/2.4.18 (Ubuntu) Server at xx.xxx.xxx.xxx Port 80”. if i remove the apache configuration, then the default Apache homepage shows. Is this apache configuration need to changed?

It sounds like you may need to edit your incoming port rules for your AWS instance to include your IP address and port 80 for Apache.

Just a couple of notes for Ubuntu 14:04 that I picked up on my journey

1.

WSGIPythonHome /home/ubuntu/.virtualenvs/keras_flask/bin

should be, according to mod_wsgi documentation, in default site conf file

WSGIPythonHome /home/ubuntu/.virtualenvs/keras_flask/

2. if you run into redis memory errors you may want to run

echo ‘vm.overcommit_memory = 1’ >> /etc/sysctl.conf

sysctl vm.overcommit_memory=1

Otherwise..great tutorial !!

Thanks for sharing, Andrew.

i am trying to deploy the model on google cloud. my problem is that i don’t want to run the **run_model_server.py** file manually as my plan is to deploy the whole thins with model as a service. how can i avoid running the file manullay and seeing the prediction on the browser instead of terminal?

You can create a cronjob (or modify the init boot) that automatically launches run_model_server.py on boot.

As for displaying the predictions in the browser you will need to modify the code to include a custom route that will interface with “predict” and return the results. If you’re new to Flask and web development, that’s okay, but you’ll want to do your research first and teach yourself the fundamentals of Flask.

I’m getting this error after restarting apache2 and acessing the server

Fatal Python error: Py_Initialize: Unable to get the locale encoding

ModuleNotFoundError: No module named ‘encodings’

I’m trying to figure what what might be causing it but with no success.

I’m getting the same error on ubuntu 18.04

The below change worked for me in Ubuntu 18.04 and Python 3.6

In the file /etc/apache2/sites-available/000-default.conf, change the line

WSGIPythonHome /home/ubuntu/.virtualenvs/keras_flask/bin

to

WSGIPythonHome /home/ubuntu/.virtualenvs/keras_flask

Hope that helps.

For the ones using only CPUs I would suggest to try multiprocessing, celery is a good option.

I’m doing this as exactly for sentiment analysis. My batch size never exceeds 1, Why is it so?

It sounds like your model is running very fast and there are infrequent requests to the server, therefore the batch size is only 1.

Thank You Adrian! Even doing the stress testing my batch size doesn’t exceeded 1 I even sent a request by having less interval time, Sometimes I got broken pipe error.

I’ve three questions

1.Is polling the redis server frequently a good practice?

2.Is it okay to implement the same in production?

3.Any other way to do asynchronously?

1. Yes, but you’ll need to determine what “frequent” means in this context.

2. Yes, you can do the same in production.

3. What part are you trying to make asynchronous?

Thank you for your sharing, which has helped me a lot!

But I still have some questions.

If I have two or more models that need to be deployed, either sklearn or Tensorflow or keras, assuming both are small models, is it feasible to modify them based on your solution?

I mean several models deployed together.

Or what do others do when they encounter several models that need to be deployed?

If you are planning on deploying multiple models I would suggest creating an endpoint/unique URL for each of them, that way you can request each moel individually.

Thanks a lot Adrian for this blog post. it helped me a lot. However I’m curious about how to use Docker with the above infrastructure. And second question is about scalability. Will it be able to scale for millions of requests per minute by increasing the compute power. If not what would be the best way to scale the inference for millions of requests per minute. It would be nice if you could cover that topic, since there are no proper tutorials/blogs posts which discusses these topics in detail especially for beginners. Thank you.

You can use Docker if you wish. Docker is just a container and could easily be used.

As far as scalability goes, that is so incredibly dependent on the environment your systems live in. If you are using the cloud you’ll want to look into on-demand scalability, automatically spinning up new instances, etc. Exactly how you do that is dependent on whether you’re in the Azure, AWS, IBM, etc. cloud.

Hi Adrian,

I have a question regarding the use of more than one model in the web server. My idea is to have several models (although they have the same description and shapes), for different entities.

My solution is currently loading the model each time it is necessary, but scaling this would make it too heavy and i guess impossible to work properly.

Do you think the best approach here would be to have one thread for each model? This way we would avoid the loadings, and each thread might handle predictions and training if needed.

Have you come across a similar problem/idea? Do you know any other ways to solve this?

BTW, thanks for your posts, I keep learning more with each one.

You might want to test using threads versus multiprocessing. Threads are normally used for I/O tasks while processes are used for CPU heavy computation. You’re on the right track but definitely test both.

Hey Adrian. Amazing post. I wanted to know if a similar architecture can be used for real-time object detection from a bunch of IP camera streams?

Thanks for the three part series!

I have a django app which uses a keras trained model, and I want it to be able to handle multiple requests as in this tutorial.

Currently it is hosted PythonAnywhere, but I don’t think that’s compatible with Redis.

Do you recommend hosting it on AWS?

Thanks!

Nick

I don’t have much familiarity with PythonAnywhere so I can’t comment there.

My favorite hosts are AWS, Azure, Linode, and DigitalOcean.

Great thanks. I followed your instruction and it works well. We are now able to scale to a net of nodes with many queues / GPUs and many GPUs at once. Tuning the parameters of Apache/WGSI is critical though. One thing we have not done is to scale the Redis itself …

Thank you.

Great job, Steve!

Hi, thanks for the great post!

It is a kind of wired that when I run the stress test from localhost, there is nothing happens at all. But when I run from external ip address and request the EC2 server. It keeps raising ConnectionError with ” Max retries exeeded with url. …… [Errno60] Operation timed out’.

Have you tried with external ip address requests EC2? Is that the problem with EC2 or something wrong with my own code here.

Thank you.

You’re having an issue connecting to EC2? If so, make sure your IP address is exposed and you can access your EC2 machine.

Hi Adrian,

Thank you for this mega step to bring the demo to production.

I read through this tutorial. In section “Sym-link CUDA libraries (optional, GPU only)”, you proposed to link the cuda library dir to the user lib.

But I think that in this tutorial, inferencing functions are moved to run_model_server.py, which runs in a seperate process other than Apache. So Apache should have no “CUDA” work to carry out. Is it necessary to take this sym-link step anyway ?

It was for me when I created the tutorial (otherwise the script would error out). Other solutions may exist.

Hi Adrian, thanks for the great post!

I’ve written up a blog post to show how to dockerize this setup and to get everything up and running within minutes.

https://medium.com/@shane.soh/deploy-machine-learning-models-with-keras-fastapi-redis-and-docker-4940df614ece

The accompanying git repo can be found here: https://github.com/shanesoh/deploy-ml-fastapi-redis-docker

I’m also currently writing a follow-up tutorial to use Swarm to quickly scale the service across multiple hosts.

Do check it out! Thanks!

This is really awesome, thanks so much for sharing Shane!

Hi Adrian,

Thank you for this very useful post!

I am just wondering how you came up with this kind of architecture? Can you recommend me some resources to learn more about best practices of deploying neural network model in production environment?

Thanks once again!

What specific production environment? Are you deploying it on your own or using other services like Google, Amazon, Azure, etc.?

Hi Adrian,

Thank you for this post.

I tested the solution with my own UNet-like model taking an image 256x256x1 as input and outputing a 256x256x1 image.

I’m running it on a NVIDIA RTX 2080 Ti with 11 Gb. I have a lot of RAM (>64Gb) and an i9 processor.

I’m wondering what performance should I expect in images/second ?

I stress tested it with 6 thousands images and it looks like the size of Redis batches are getting smaller and smaller.

( I played around with settings batch size and server/client sleep). I’ve not been able to identify the bottleneck yet.

Any idea ?

Regards