In this post, I interview Maria Rosvold and Shriya Nama, two high schoolers studying computer vision and robotics. Together, they are competing in the 2020 RoboMed competition, part of the annual Robofest competition, a robotics festival designed to promote and support STEM and computer science education in grades 5-12.

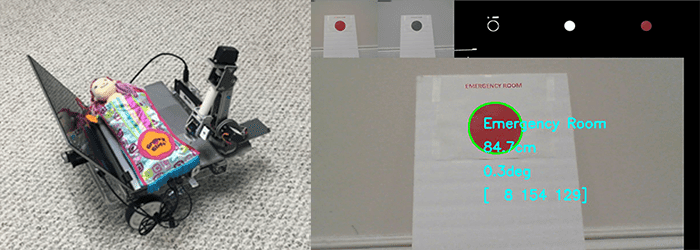

Their project submission, RoboBed, is a prototype of a self-driving gurney with a robotic arm. The idea behind RoboBed is to create a platform that can help the medical industry by not only improving mobility of patients, but also facilitating telemedicine.

Even more amazing, Maria and Shriya developed their project submission using predominantly PyImageSearch tutorials and blog posts!

I am so incredibly proud of these two young women — not only are they actively involved in computer science at such a young age, but they are an inspiration to young girls everywhere.

Computer science is not a “guys only” club and we should be doing everything we can to:

- Empower young women everywhere studying STEM

- Close the gender gap in technology

- Change what the “image” of a programmer is

Let’s all give a warm welcome to Maria and Shriya as they share their amazing project submission!

An interview with Maria Rosvold and Shriya Nama, high schoolers studying robotics and computer vision

Adrian: Hi Maria and Shriya! Thank you for doing this interview. It’s such a wonderful pleasure to have you here on the PyImageSearch blog.

Maria: Thank you for having us. We’ve really enjoyed learning from your weekly blog posts.

Adrian: Before we get started, can you tell us a bit more about yourselves? What grade are you in and what are you currently studying?

Shriya: I’m currently a sophomore at Seaholm High School in Birmingham, Michigan, and Maria’s a freshman at the International Academy in Bloomfield Hills, Michigan. We have been studying programming and robotics and researching biomedical applications through our robotics team. We’ve been blessed to be part of Robofest: we presented our robotic cookie-frosting machine to IEEE in 5th grade and our game robot to IBM at their opening ceremony in Detroit last year.

Adrian: How did you first become interested in computer vision and OpenCV?

Maria: Two years ago, we developed a robotic aquaponic system, and we wanted to detect disease in the plants. We knew this would be possible with computer vision, but it was beyond what we could do at the time. The founder of Robofest, Dr. CJ Chung, runs a workshop to support the Vision Centric Challenge that Lawrence Tech hosts each year.

The workshop introduced me to the basics of OpenCV, and the blog really took it from there. Dr. Chung said he learns a lot about deep learning and vision algorithms from your blog.

Adrian: You both created a project called “RoboBed”, a device to help patients with mobility and telemedicine — can you tell us about that project?

Shriya: RoboBed is a self-driving bed or gurney that also has a robotic arm. The idea is to create a platform that helps the medical industry by improving mobility in two ways. The first is to provide mobility to the patient at home, in an elderly care facility, or at the hospital. The second, and more important, is to enable full-featured telemedicine.

Adrian: What is full-featured telemedicine?

Maria: Typical telemedicine gets you a face-to-face conversation with the doctor. Full-featured telemedicine allows an actual examination to be performed, and for this we added a robotic arm. The arm would have interchangeable tools and haptic feedback, and it would connect a doctor or specialist to a patient without any travel. Some expensive attachments would not be available at home, but they would be available at an elderly care facility or doctor’s office, where frequent use can easily justify the cost.

Adrian: Can you give me an example?

Maria: Sure. This February I had some abdominal pain and went to my doctor’s office. He couldn’t tell if I had an appendix problem and couldn’t give me an ultrasound in the office, so he sent me to the ER. After a 10-minute ultrasound they determined I was fine, but the bill was $7,000. If my doctor’s office had a robotic arm and a simple $6,900 ultrasound attachment, a technician could have performed the ultrasound remotely, saving a great deal of time and money.

Adrian: Is there more to the concept than just connecting to a specialist remotely?

Shriya: Yes, that’s the beauty of the concept. Remember, an expert is operating the arm, and we are collecting data the whole time. Think of the car company Tesla: it collects data while its customers drive and learns from that data on the way to full autonomous driving. The RoboBed system will take the same approach. We collect data from the technicians and should be able to learn from it. At first the arm would assist specialists so they can do their jobs better and better, but eventually it should be able to perform basic evaluations without any intervention.

When RoboBed reaches full autonomy, we expect medical quality and availability to skyrocket as medical costs drop. It will especially benefit remote locations and poor countries that can’t afford traditional health care.

Adrian: RoboBed was part of a submission to the 2020 RoboMed competition. What is the RoboMed competition and what motivated you both to work together and submit a project to the competition?

Maria: RoboMed is an exhibition-style competition introduced this year by Lawrence Technological University through its Robofest program. Robofest encourages middle school through college students to learn STEM and present robotics-related projects. Teams compete internationally; I think there are participants from over 25 countries. Robofest also encourages the use of computer vision.

I’m interested in biomedical engineering and my experience at the emergency room really got me thinking about a better solution.

Adrian: What is the end goal of RoboBed? How practical is it in real-world applications?

Shriya: Our demo was just a proof of concept. There is a lot more to be done, but we believe every feature of the system is technologically feasible. Of course, the internet connection to the arm needs to be perfect, and you wouldn’t use it for invasive surgery, but the whole concept of machine learning and advanced telemedicine is very feasible. We expect something resembling this concept to be mainstream within 10 years, hopefully sooner.

Adrian: You both coded the project, which is no easy undertaking, even for advanced computer vision practitioners. Can you tell us about the coding process and the structure of the code?

Shriya: This project was different because we didn’t know which algorithms to apply. We needed to learn some Python basics, which we did through YouTube. We coded lots of little projects, put them all together into a final application, and then tested a lot. We tried to keep the code separated into modules.

Maria: From an image processing standpoint, we had to select a camera, capture an image, convert it to grayscale, blur it, and find contours. The trick was then to determine each object’s shape, distance, and color. Finally, we calculated the color distance in HSV space to determine a match.
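
The last step Maria describes, matching colors by their distance in HSV space, can be sketched in a few lines of plain Python. This is a minimal illustration, not the team’s code: it assumes each object’s average color has already been measured as an (R, G, B) tuple (for example, by averaging the pixels inside its contour), and the reference colors and channel weights are hypothetical values that would need tuning. Because hue is an angle, the hue difference wraps around 360 degrees.

```python
import colorsys
import math


def rgb_to_hsv_deg(rgb):
    """Convert an (R, G, B) tuple in 0-255 to (hue in degrees, saturation 0-1, value 0-1)."""
    r, g, b = (channel / 255.0 for channel in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    return h * 360.0, s, v


def hsv_distance(hsv_a, hsv_b, weights=(1.0, 1.0, 0.5)):
    """Weighted Euclidean distance between two HSV colors.

    The hue difference wraps around the color wheel (355 and 5 degrees are
    only 10 degrees apart) and is scaled to 0-1 so it is comparable with S and V.
    The weights are hypothetical; V is down-weighted because brightness is
    what changes most when the lighting changes.
    """
    dh = abs(hsv_a[0] - hsv_b[0]) % 360.0
    dh = min(dh, 360.0 - dh) / 180.0
    ds = hsv_a[1] - hsv_b[1]
    dv = hsv_a[2] - hsv_b[2]
    wh, ws, wv = weights
    return math.sqrt(wh * dh ** 2 + ws * ds ** 2 + wv * dv ** 2)


def closest_color(rgb, references):
    """Return the name of the reference color closest to rgb in HSV space."""
    target = rgb_to_hsv_deg(rgb)
    return min(references, key=lambda name: hsv_distance(target, rgb_to_hsv_deg(references[name])))


# Hypothetical reference colors for the objects being matched.
REFERENCES = {"red": (255, 0, 0), "yellow": (255, 255, 0),
              "green": (0, 255, 0), "blue": (0, 0, 255)}

print(closest_color((200, 30, 40), REFERENCES))  # a dim, slightly bluish red -> red
```

Matching against a small set of named reference colors, rather than testing fixed RGB ranges, makes it easy to re-tune the references when the camera or lighting changes.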

Adrian: What resources did you use when developing the RoboBed project?

Maria: After the workshop, our coach recommended that we look at the tutorials on PyImageSearch. We started with the basic introduction to computer vision, which covered most of what we saw at Lawrence Tech: how to open an image, manipulate it, and draw text, lines, and boxes. From there we searched for other blog posts. One helped us identify shapes, and another gave us some ideas on using a camera to determine distances.
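
Using a camera to determine distance can be illustrated with triangle similarity, one common way to estimate how far a camera is from an object of known width. The sketch below is not the team’s code; it assumes one calibration photo taken at a known distance and an object whose width in pixels has already been measured (for example, from `cv2.boundingRect` on its contour). The 11-inch width, 24-inch calibration distance, and pixel widths are made-up numbers. It calibrates an apparent focal length F = (P × D) / W and then estimates a new distance D' = (W × F) / P.

```python
def calibrate_focal_length(known_distance, known_width, pixel_width):
    """Apparent focal length in pixels: F = (P * D) / W, from one reference photo."""
    return (pixel_width * known_distance) / known_width


def distance_to_camera(known_width, focal_length, pixel_width):
    """Estimated distance D' = (W * F) / P, in the same units as known_width."""
    if pixel_width <= 0:
        raise ValueError("pixel_width must be positive")
    return (known_width * focal_length) / pixel_width


# Hypothetical calibration: an 11-inch-wide marker, 24 inches away, looks 248 px wide.
focal = calibrate_focal_length(known_distance=24.0, known_width=11.0, pixel_width=248.0)

# Later the same marker appears 124 px wide: half the width, so twice the distance.
print(round(distance_to_camera(11.0, focal, 124.0), 2))  # 48.0
```

The estimate assumes the object faces the camera roughly head-on and that its real width is known in advance, which is why a fixed, known marker works better than arbitrary objects.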

Adrian: What was the biggest challenge developing RoboBed?

Shriya: Some of the challenges were not what we expected. We thought detecting colors would be easy. Red is red and yellow is yellow, right? Well, your eyes are amazing, but the computer just sees a bunch of numbers and doesn’t really know the color. When the lighting conditions change, the red, green, and blue values shift, and sometimes red looks like orange to the computer. The colors also changed depending on which camera we used. If we had to identify rooms in the future, we would use shapes, not colors.

Another challenge was image blur. We thought we could detect objects while driving to map the environment. As it turns out, the images get blurred while the robot is moving, which makes object detection much more difficult. Consistent lighting and a stable camera make a big difference.
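
Identifying things by shape rather than color is easy to illustrate. A common heuristic, sketched here in plain Python rather than taken from the team’s code, first simplifies each contour to a polygon (in OpenCV, typically with `cv2.approxPolyDP(contour, 0.04 * cv2.arcLength(contour, True), True)`, where the 0.04 factor is a tunable assumption) and then labels it by vertex count. The function below assumes those vertices have already been extracted as a list of (x, y) pairs, for example via `approx.reshape(-1, 2).tolist()`. Because it uses only geometry, it does not depend on how the lighting or camera renders color, as long as the contour itself is found reliably.

```python
def bounding_box(points):
    """Axis-aligned bounding box (x, y, width, height) of a list of (x, y) points."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    return min(xs), min(ys), max(xs) - min(xs), max(ys) - min(ys)


def classify_shape(vertices, square_tolerance=0.05):
    """Label a shape from the vertices of an already-approximated contour.

    Vertex count does the work: 3 -> triangle, 4 -> square or rectangle
    (decided by the bounding-box aspect ratio), 5 -> pentagon, and anything
    with more vertices is treated as a circle.
    """
    n = len(vertices)
    if n < 3:
        return "unknown"
    if n == 3:
        return "triangle"
    if n == 4:
        _, _, w, h = bounding_box(vertices)
        if h == 0:
            return "unknown"
        return "square" if abs(w / h - 1.0) <= square_tolerance else "rectangle"
    if n == 5:
        return "pentagon"
    return "circle"


print(classify_shape([(0, 0), (40, 0), (40, 39), (0, 39)]))  # square
print(classify_shape([(0, 0), (80, 0), (80, 40), (0, 40)]))  # rectangle
```

The bounding-box test is axis-aligned, so a rectangle rotated by 45 degrees can look square; `cv2.minAreaRect` gives a rotated box if that matters.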
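
One way to work around motion blur is to measure each frame’s sharpness and skip detection on frames that are too blurry. A common measure is the variance of the Laplacian: sharp edges produce large, widely varying Laplacian responses, while blur flattens them. The sketch below is written in pure Python on a grayscale image given as a 2D list so it runs without dependencies; with OpenCV the same idea is usually written as `cv2.Laplacian(gray, cv2.CV_64F).var()` (border handling differs slightly). The threshold of 100 is a hypothetical starting point that depends on the camera and scene.

```python
def laplacian_variance(gray):
    """Variance of the 4-neighbour Laplacian over the interior of a grayscale image.

    gray is a list of equal-length rows of intensities, at least 3x3.
    Border pixels are skipped rather than padded.
    """
    rows, cols = len(gray), len(gray[0])
    if rows < 3 or cols < 3:
        raise ValueError("image must be at least 3x3")
    responses = []
    for y in range(1, rows - 1):
        for x in range(1, cols - 1):
            # Kernel [[0, 1, 0], [1, -4, 1], [0, 1, 0]] applied at (y, x).
            responses.append(gray[y - 1][x] + gray[y + 1][x]
                             + gray[y][x - 1] + gray[y][x + 1]
                             - 4 * gray[y][x])
    mean = sum(responses) / len(responses)
    return sum((r - mean) ** 2 for r in responses) / len(responses)


def is_blurry(gray, threshold=100.0):
    """Flag a frame as too blurry for detection; the threshold is hypothetical."""
    return laplacian_variance(gray) < threshold


# A crisp checkerboard versus a featureless gray frame standing in for heavy blur.
sharp = [[255 * ((x + y) % 2) for x in range(8)] for y in range(8)]
flat = [[128] * 8 for _ in range(8)]
print(is_blurry(sharp), is_blurry(flat))  # False True
```

Skipping blurry frames, or pausing briefly before capturing, fits the observation that a stable camera makes a big difference.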

Adrian: What are the next steps for the RoboBed project? Are you going to continue to develop it?

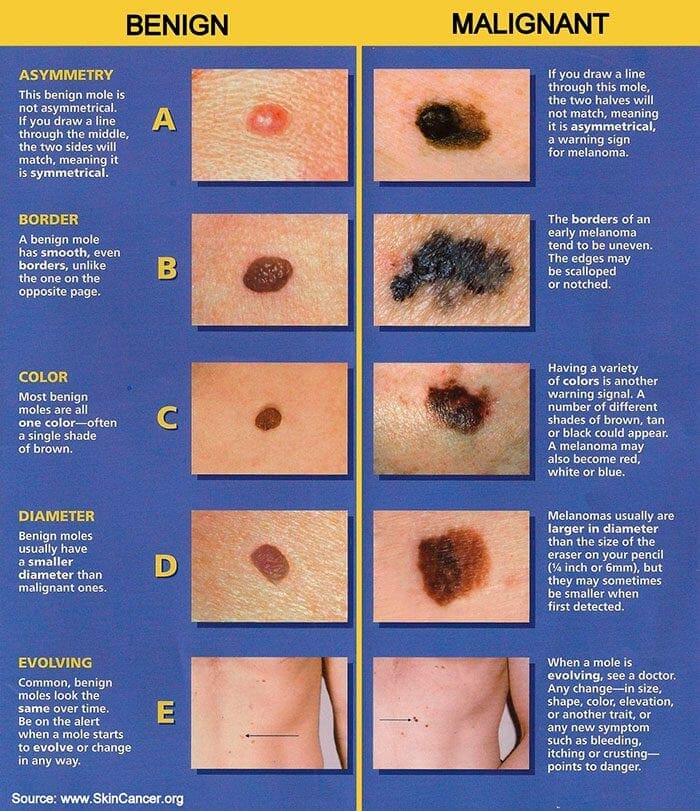

Maria: We’ve already started on our first application, a dermatology project where we hope to identify melanomas early and therefore save lives. It will be a tool to help dermatologists, but we hope it will eventually be able to perform much of the work on its own. We just started researching the project, but we think it is very promising.

Adrian: How does computer vision fit that application?

Maria: Computer vision is a great fit. From www.SkinCancer.org, we know a melanoma can be identified using the ABCDE rule: asymmetry, border irregularity, non-uniform color, diameter greater than 6 mm, and evolving (changing over time). These are all things that OpenCV should be really good at.
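
Two of the ABCDE criteria, asymmetry and diameter, translate directly into simple measurements on a binary lesion mask (1 = lesion pixel), such as one produced by thresholding an image and filling the lesion’s contour. The sketch below is illustrative only and is not a validated clinical method: it checks mirror symmetry about a single vertical axis, approximates the diameter by the longer side of the bounding box, and assumes a hypothetical `mm_per_pixel` calibration and asymmetry threshold.

```python
def crop_to_lesion(mask):
    """Crop a binary mask (lists of 0/1) to the bounding box of its 1-pixels."""
    rows = [y for y, row in enumerate(mask) if any(row)]
    if not rows:
        return []
    cols = [x for x in range(len(mask[0])) if any(row[x] for row in mask)]
    return [row[cols[0]:cols[-1] + 1] for row in mask[rows[0]:rows[-1] + 1]]


def asymmetry_score(mask):
    """0 = mirror-symmetric left to right, 1 = mask and its mirror never overlap."""
    crop = crop_to_lesion(mask)
    area = sum(sum(row) for row in crop)
    if area == 0:
        return 0.0
    # Count pixels that differ between the lesion and its horizontal mirror.
    mismatched = sum(a != b for row in crop for a, b in zip(row, reversed(row)))
    return mismatched / (2 * area)


def diameter_mm(mask, mm_per_pixel):
    """Rough diameter: the longer side of the lesion's bounding box, in mm."""
    crop = crop_to_lesion(mask)
    if not crop:
        return 0.0
    return max(len(crop), len(crop[0])) * mm_per_pixel


def a_and_d_flags(mask, mm_per_pixel, asymmetry_threshold=0.2):
    """Flag the A (asymmetry) and D (diameter > 6 mm) criteria; thresholds are hypothetical."""
    return {
        "asymmetric": asymmetry_score(mask) > asymmetry_threshold,
        "diameter_over_6mm": diameter_mm(mask, mm_per_pixel) > 6.0,
    }


lesion = [
    [0, 0, 1, 1, 0, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 0, 1, 1, 0, 0],
]
print(a_and_d_flags(lesion, mm_per_pixel=2.0))
# {'asymmetric': False, 'diameter_over_6mm': True}
```

Border irregularity, color variation, and evolution over time need more work (and, as discussed below, learned models tend to do better), but these two measurements show how directly some of the rule maps onto pixels.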

Do you have any pointers on how to take this to the next level beyond just image processing?

Adrian: Deep learning is the next level. More specifically, segmentation networks such as Mask R-CNN (instance segmentation) and U-Net (semantic segmentation) work very well for this kind of segmentation task. I’ve successfully applied Mask R-CNN to melanoma detection (that chapter is actually included in the ImageNet Bundle of my book, Deep Learning for Computer Vision with Python).

There’s a highly cited paper in the medical computer vision/deep learning space that I suggest all readers interested in automatic melanoma detection and classification read: “Dermatologist-level classification of skin cancer with deep neural networks,” published in Nature in 2017, which has nearly 5,000 citations.

Adrian: Would you recommend PyImageSearch to other students who are trying to learn computer vision and OpenCV?

Shriya: Absolutely. PyImageSearch is a great resource for learning basic image processing operations. We know you go in depth on image classification, deep learning, and optical character recognition, and we hope to learn new skills there. We’ll definitely be using your blog to research how to identify melanomas.

Adrian: If a PyImageSearch reader wants to contribute ideas or learn more about the project or Robofest, what would you recommend?

Maria: It would be great to connect with your readers or anyone interested in a biomedical project. We’d love to find a dermatologist who is interested in computer vision and could help with testing and evaluation.

We have a YouTube channel where you can see our RoboBed demonstration, as well as a website, SmartLabRobotics.

The best way to contact us is at our team’s email account, smartlabsrobotics [at] gmail [dot] com. We’d also be happy to provide any advice for someone getting started in robotics and perhaps wanting to compete in the Robofest competitions.

Thanks so much for having us.

Summary

In today’s blog post, we interviewed Maria Rosvold and Shriya Nama, two high schoolers studying computer science, robotics, and computer vision.

Maria and Shriya have submitted their project, RoboBed, to the RoboMed competition, which is part of the annual Robofest competition. Their prototype self-driving gurney, equipped with a robotic arm, improves patient mobility and can even be used to facilitate telemedicine.

Truly, Maria and Shriya are an inspiration. I am incredibly proud of their work.

If you’d like to follow in the footsteps of Maria and Shriya, I suggest you take a look at my books and courses. You’ll be getting a great education and will be able to successfully design, develop, and implement computer vision/deep learning projects of your own.
