In this post, I interview Jagadish Mahendran, a senior Computer Vision/Artificial Intelligence (AI) engineer who recently won 1st place in the OpenCV Spatial AI Competition using the new OpenCV AI Kit (OAK).

Jagadish’s winning project was a computer vision system for the visually impaired, allowing users to successfully and safely navigate the world. His project included:

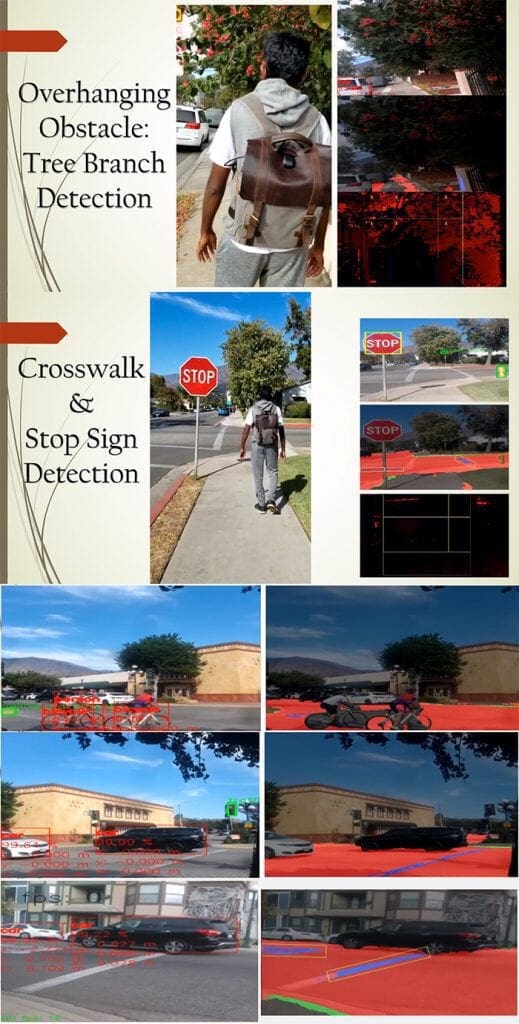

- Automatic detection of crosswalks

- Crosswalk and stop sign detection

- Overhanging obstacle detection

- …and more!

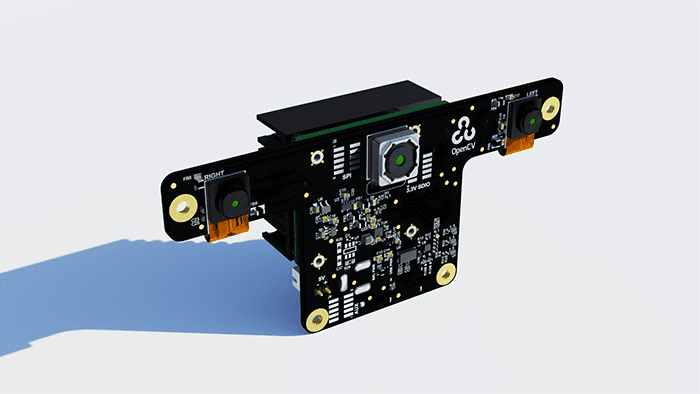

Best of all, this entire project was built around the new OpenCV Artificial Intelligence Kit (OAK), an embedded device designed specifically for computer vision.

Join me in learning about Jagadish’s project and how he uses computer vision to help visually impaired people.

An interview with Jagadish Mahendran, 1st place winner of the OpenCV Spatial AI Competition

Adrian: Welcome, Jagadish! Thank you so much for being here. It’s a pleasure to have you on the PyImageSearch blog.

Jagadish: Pleasure to be interviewed by you, Adrian. Thanks for having me.

Adrian: Before we get started, can you tell us a bit about yourself? Where do you work, and what is your role there?

Jagadish: I am a senior Computer Vision / Artificial Intelligence (AI) engineer. I have worked for multiple startups, where I have built AI and perception solutions for inventory management robots and cooking robots.

Adrian: How did you first become interested in computer vision and robotics?

Jagadish: I have been interested in AI since my undergraduate studies, where I had an opportunity to build a micromouse robot with my friends. I got attracted to computer vision and machine learning during my Master’s. Since then, it has been great fun working with these amazing technologies.

Adrian: You recently won 1st place in the OpenCV Spatial AI Competition, congratulations! Can you give us more details on the competition? How many teams participated, and what was the end goal of the contest?

Jagadish: Thank you. The OpenCV Spatial AI 2020 Competition, sponsored by Intel, involved 2 phases. Around 235 teams with various backgrounds, including university labs and companies, participated in Phase 1, which involved proposing an idea that solves a real-world problem using an OpenCV AI Kit with Depth (OAK-D) sensor. Thirty-one teams were selected for Phase 2, where we had 3 months to implement our ideas. The end goal was to develop a fully functioning AI system using an OAK-D sensor.

Adrian: Your winning solution was a vision system for the visually impaired. Can you tell us more about your project?

Jagadish: There are various visual assistance systems available in the literature and even in the market. Most of them don’t use deep learning methods due to hardware limitations, cost, and other challenges. But recently, there has been significant improvement in the edge AI and sensor space, which I thought could bring deep learning support to visual assistance systems running on limited hardware.

I developed a wearable visual assistance system that uses an OAK-D sensor for perception, external neural compute sticks (NCS2), and my 5-year-old laptop for computing. The system can perform various computer vision tasks that can help visually impaired people with scene understanding.

These tasks include detecting obstacles and elevation changes, and understanding road, sidewalk, and traffic conditions.

The system can detect traffic signs along with many other classes like people, cars, bicycles, and so on. The system can also detect obstacles using point clouds and update the individual regarding their presence using a voice interface. The individual can also interact with the system using a speech recognition system.
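To make the point-cloud idea concrete, here is a minimal sketch of how an obstacle check on 3D points might look. It is not Jagadish's implementation: the function name `nearest_obstacle`, the camera-frame convention (x right, y down, z forward, in meters), and every threshold are assumptions chosen for illustration, and the point cloud is synthetic rather than captured from an OAK-D.

```python
import numpy as np


def nearest_obstacle(points, max_range_m=2.0, corridor_half_width_m=0.5,
                     min_points=50):
    """Return the distance (m) to the nearest obstacle ahead, or None.

    points: (N, 3) array of x (right), y (down), z (forward) in meters.
    All thresholds are illustrative assumptions, not values from the project.
    """
    points = np.asarray(points, dtype=float)

    # Keep only points inside a narrow corridor in front of the wearer.
    in_corridor = np.abs(points[:, 0]) <= corridor_half_width_m
    in_range = (points[:, 2] > 0) & (points[:, 2] <= max_range_m)
    candidates = points[in_corridor & in_range]

    # Require a minimum number of points so isolated depth noise is ignored.
    if len(candidates) < min_points:
        return None

    # A low percentile is less sensitive to stray points than the minimum.
    return float(np.percentile(candidates[:, 2], 5))


# Synthetic example: a flat surface 1.5 m ahead plus distant background.
rng = np.random.default_rng(0)
wall = np.column_stack([rng.uniform(-0.3, 0.3, 200),
                        rng.uniform(-1.0, 1.0, 200),
                        np.full(200, 1.5)])
far = np.column_stack([rng.uniform(-2.0, 2.0, 200),
                       rng.uniform(-1.0, 1.0, 200),
                       np.full(200, 6.0)])
distance = nearest_obstacle(np.vstack([wall, far]))
background_only = nearest_obstacle(far)
```

Notice that the check never needs to know what the obstacle is. It only asks whether enough points fall inside the walking corridor, which is why depth data works for objects no detector was trained on.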

Here are a few sample outputs:

Adrian: Tell us about the hardware used to develop your project submission. Does the individual need to wear a lot of bulky hardware and devices?

Jagadish: I interviewed a few visually impaired people and learned that getting too much attention while walking on the streets is one of the major issues they face. So a major goal was for the physical system not to be noticeable as an assistive device. The developed system is simple: the physical setup includes my 5-year-old laptop, 2 neural compute sticks, a camera hidden inside a cotton vest, GPS, and, if needed, an additional camera that can be placed inside a fanny pack/waist bag. Most of these devices are nicely packaged inside a backpack. Overall, it looks like a college student walking around wearing a vest. I have walked around my downtown area, drawing absolutely no special attention.

Adrian: Why did you choose the OpenCV AI Kit (OAK), and more specifically, the OAK-D module that can compute depth information?

Jagadish: The organizers provided OAK-D as part of the competition, and it has many benefits. It is small. Along with RGB images, it can also provide depth images. These depth images have been very useful for detecting obstacles even without knowing what the obstacle is. Also, it has an on-chip AI processor, which means computer vision tasks are already performed before the frames reach the host. This makes the system superfast.

Adrian: Do you have any demos of your vision system for visual impairment in action?

Jagadish: Demos can be found here:

Adrian: I noticed that your system has GPS as well as vision components. Why was it necessary to include GPS?

Jagadish: I wanted the system to be comprehensive, reliable, and also expandable. So I tried to include as many supplemental features as possible. GPS has been a great addition in that regard. It is a mature technology that is cheap and easy to set up. It can help with localization without the need for advanced robotics navigation and perception algorithms. I also wanted the GPS feature to be used differently from the usual map services. So I added a feature to save preferred locations, like a friend’s place, the gym, or a grocery store, using custom names. The user can ask the system for the distance to any of these saved locations through the voice recognition system. Also, the GPS location can be shared with preferred contacts via SMS.
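The saved-locations feature can be sketched with nothing more than the standard library. The example below is an illustration, not code from the project: the helper names (`save_place`, `distance_to`), the in-memory dictionary, and the coordinates are all hypothetical, and the haversine formula is simply one common way to compute the great-circle distance between two GPS fixes.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


# Hypothetical in-memory store mapping a custom name to (lat, lon).
saved_places = {}


def save_place(name, lat, lon):
    """Remember a location under a user-chosen name (case-insensitive)."""
    saved_places[name.strip().lower()] = (lat, lon)


def distance_to(name, current_lat, current_lon):
    """Distance in meters from the current GPS fix to a saved place, or None."""
    place = saved_places.get(name.strip().lower())
    if place is None:
        return None
    return haversine_m(current_lat, current_lon, *place)


# Made-up coordinates purely for illustration.
save_place("Gym", 0.0, 1.0)
meters = distance_to("gym", 0.0, 0.0)
unknown = distance_to("library", 0.0, 0.0)
```

In a real system, the name would come from the speech recognizer and the current fix from the GPS receiver; here both are passed in directly to keep the sketch self-contained.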

Adrian: What was the biggest challenge developing the vision system for the visually impaired?

Jagadish: From a developer’s perspective, it is a complex system that involves both hardware and AI components. There was a lot of trial and error involved in choosing the harness for the sensors. The dataset collection and testing process required hours of walking in different areas of the town and at various times of the day. For deep learning, the biggest challenge was choosing models that are light yet highly accurate and getting all these models working together in real-time on limited hardware.

Adrian: If you had to pick the most important technique you applied when developing the project, what would it be?

Jagadish: Model quantization techniques can provide a huge boost to inference speed with an acceptable compromise in accuracy. OpenVINO optimizations boosted inference speed, sometimes by up to 13x. Lighter models can learn faster/better from smaller images. For example, the MobileNetV2 object detection model trained on 300×300 images performed better than when trained on 450×450 images.
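For readers new to quantization, the core idea can be shown in a few lines of NumPy. This is a toy sketch of symmetric 8-bit weight quantization, not the OpenVINO workflow Jagadish used and not any library's API: the function names and the random weight matrix are assumptions for illustration only.

```python
import numpy as np


def quantize_int8(weights):
    """Symmetric per-tensor quantization of float weights to int8.

    Returns the int8 tensor and the scale needed to map it back to floats.
    """
    weights = np.asarray(weights, dtype=np.float32)
    max_abs = float(np.max(np.abs(weights)))
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = np.clip(np.round(weights / scale), -127, 127).astype(np.int8)
    return q, scale


def dequantize(q, scale):
    """Map int8 values back to approximate float32 weights."""
    return q.astype(np.float32) * scale


# Random stand-in for one layer's weights (illustrative only).
rng = np.random.default_rng(0)
w = rng.normal(0.0, 0.1, size=(64, 64)).astype(np.float32)

q, scale = quantize_int8(w)
w_hat = dequantize(q, scale)

max_error = float(np.max(np.abs(w - w_hat)))  # bounded by about scale / 2
size_ratio = w.nbytes / q.nbytes              # float32 vs. int8 storage
```

Each weight now occupies one byte instead of four, and the rounding error per weight is at most about half of `scale`. That trade of a small, bounded error for smaller and faster arithmetic is the "acceptable compromise in accuracy" described above; production toolkits add calibration and per-layer handling on top of this basic idea.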

Adrian: What are the next steps for the project? Will you continue to develop it?

Jagadish: The next immediate step I am currently working on is getting the project tested by my visually impaired friend. I will ship the system to her soon. Also, there are numerous new features in the pipeline to be added. I am also working on making the project open source and mainstream. The idea is anyone should be able to use the complete AI stack for free, provided they can buy the sensors and computing unit on their own. I am also trying to build a developer’s community. So far, I have received some positive responses on this. Hopefully, the project becomes self-maintained in the future. We are also planning to obtain some funds for project expansion. I hope the project will make life easier for the visually impaired and increase their engagement in daily activities.

Adrian: What are your computer vision and deep learning tools, libraries, and packages of choice?

Jagadish: OpenCV for image manipulations and operations. TensorFlow, Keras, and PyTorch were used for deep learning. For edge AI: OpenVINO and TensorFlow Lite. DepthAI for OAK-D. Open3D for point cloud processing. Vosk for speech recognition, Festival for text-to-speech. Apart from them, standard Python packages such as NumPy, pandas, scikit-learn, etc., were used.

Adrian: What advice would you give to someone who wants to follow in your footsteps but doesn’t know how to get started?

Jagadish: AI can be deceiving. It is easy to get started and quickly gain a sense of mastery. However, this is not necessarily true. We are usually only touching the tip of the iceberg. There is a lot more going on in the background, even with a simple sigmoid activation function. It helps to learn systematically from the basics, solving practical and diverse problems, rather than just reading. Also, the AI community is very active and continuously evolving. It helps to read papers.

Adrian: You’ve been a PyImageSearch reader and customer since 2016! Thank you for supporting PyImageSearch and me. What PyImageSearch books and courses do you own? And how did they help prepare you for this competition?

Jagadish: I have been a PyImageSearch reader since my student life. I follow your blog posts regularly. I own the Practical Python and OpenCV book, the complete ImageNet Bundle of Deep Learning for Computer Vision with Python, and the Complete Bundle of Raspberry Pi for Computer Vision. I will be grabbing the OCR bundle at some point. I have also completed the PyImageSearch Gurus course. I am currently trying out PyImageSearch University.

The PyImageSearch content, in general, has helped me with my professional career. In the competition, techniques from blogs and course materials were used to train lighter models to obtain faster and more accurate models. For example, the TrafficSignNet model from the traffic sign classification blog was used to classify images with traffic signs and other classes. MiniVGGNet from the deep learning bundle was trained to detect elevation changes from depth images.

Congratulations and thanks for making such quality content, Adrian.

Adrian: Would you recommend these books and courses to other budding developers, students, and researchers trying to learn computer vision, deep learning, and OpenCV?

Jagadish: Yes, absolutely.

Adrian: If a PyImageSearch reader wants to chat about your project, what is the best place to connect with you?

Jagadish: Happy to connect on LinkedIn — https://www.linkedin.com/in/jagadish-mahendran/

Summary

In this blog post, we interviewed Jagadish Mahendran, who won 1st place in the OpenCV Spatial AI Competition.

Jagadish is doing amazing work that can help visually impaired people — and doing so using hardware that makes computer vision and deep learning easy to apply.

I’m excited to follow Jagadish’s work, and I wish him the best of luck continuing to develop it.
