In this blog post, I interview Askat Kuzdeuov, a computer vision and deep learning researcher at the Institute of Smart Systems and Artificial Intelligence (ISSAI).

Askat is not only a stellar researcher, but he’s an avid PyImageSearch reader as well.

I was first introduced to Askat a couple weeks ago. We had just announced a $500 prize to the first PyImageSearch University member to complete all courses in the program. Less than 24 hours later, Askat had completed all courses and won the prize.

We started an email conversation from there and he shared some of his latest research. I was incredibly impressed to say the least.

Askat and his colleagues’ latest work, SpeakingFaces: A Large-Scale Multimodal Dataset of Voice Commands with Visual and Thermal Video Streams, introduces a new dataset that computer vision and deep learning researchers/practitioners can use for:

- Human–computer interaction

- Biometric authentication

- Recognition systems

- Domain transfer

- Speech recognition

What I really like about SpeakingFaces is the sensor fusion component, consisting of:

- High-resolution thermal images

- Visual spectra image streams (i.e., what the human eye can see)

- Synchronized audio recordings

Data was collected from 142 subjects, yielding over 13,000 instances of synchronized data (∼3.8 TB) … but collecting the data was just the easy part!

The hard part came afterward — preprocessing all the data, ensuring it was synchronized, and packaging it in a way that computer vision/deep learning researchers could use in their own work.

From there, Askat and his colleagues trained a GAN to take the input thermal images and then generate an RGB image from them, which as Askat can attest, was no easy task!

Inside the rest of this interview, you will learn how Askat and his colleagues built the SpeakingFaces dataset, including the preprocessing techniques they used to clean and prepare the dataset for distribution.

You’ll also learn how they trained a GAN to generate RGB images from thermal camera inputs.

If you have any interest in learning about how to perform publication-worthy work in the computer vision community, then definitely make sure you read this interview!

An interview with Askat Kuzdeuov, computer vision and deep learning researcher

Adrian: Hi Askat! Thank you for taking the time to do this interview. It’s a pleasure to have you on the PyImageSearch blog.

Askat: Hi Adrian! Thank you for having me. It’s a great honor to be a guest on the PyImageSearch.

Adrian: Before we get started, can you tell us a bit about yourself? Where do you work, and what is your role?

Askat: I am a data scientist at the Institute of Smart Systems and Artificial Intelligence (ISSAI). My main role is to conduct research related to computer vision, deep learning, and mathematical modeling.

Adrian: I really enjoyed going through your Google Scholar profile and reading some of your publications. What got you interested in doing research in computer vision and deep learning?

Askat: Thank you! I am glad to hear you liked them! I discovered computer vision during my graduate studies when I took an image processing course, on the recommendation of my advisor, Dr. Varol. It quickly became my favourite subject. I had a lot of fun operating with images in a game format, and by the end of the course I realized it is exactly what I want to pursue.

Adrian: Your most recent publication, SpeakingFaces: A Large-Scale Multimodal Dataset of Voice Commands with Visual and Thermal Video Streams, introduces a dataset that computer vision practitioners can use to better understand and interpret voice commands through visual cues. Can you tell us a bit more about this dataset and associated paper? What made you and your team want to create this dataset?

Askat: Two of the major research interests of our Institute are multisensory data and speech data. The thermal camera was one of the sensors that we experimented with, and we were interested in examining how it might be used to accompany voice recordings. When we began the research, we realized that there are no existing datasets suitable to the task, so we decided to design one. Since most works primarily associate audio with visual data, we opted to combine all three modalities to widen the range of potential of applications.

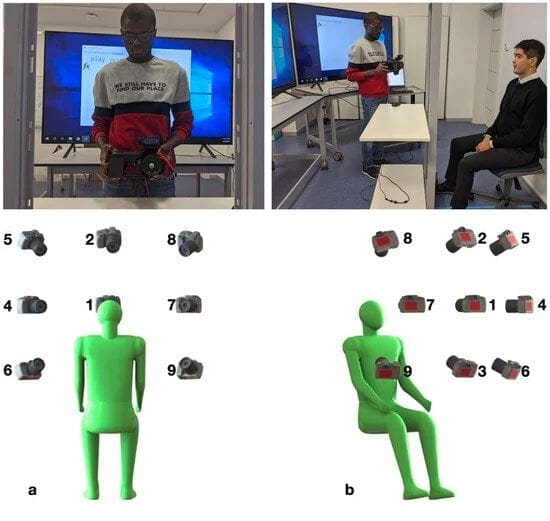

Adrian: Can you describe the dataset acquisition process? I’m sure that was quite the undertaking. How did you accomplish it so efficiently?

Askat: It was indeed an undertaking. Once we designed the acquisition protocol, we had to go through an ethics committee to prove that our study bears minimal or no risk to our potential participants. We were able to proceed only after their approval. Because of the great number of participants and their busy schedules, it was quite tricky to schedule recording sessions. We asked each participant to donate 30 minutes of their time and come back to repeat the whole shooting process on another day. The population of participants was another challenge, especially so under pandemic conditions. We aimed for a diverse and gender balanced representation, so we had to keep that in mind while scouting for potential participants.

As with many people across the globe, we unexpectedly got hit by the pandemic and had to adjust our plans, and shift towards data processing tasks that could be performed remotely, optimizing online collaboration.

I am thankful to my co-authors and other ISSAI members who helped with the dataset. In the end, as they say, it took a village to acquire and process the data!

Adrian: After gathering the raw data you would have needed to preprocess it and then organize it into a logical structure that other researchers could utilize. Working with multi-modal data makes that a non-trivial process. How did you solve the problem?

Askat: Yes, the preprocessing step had its own challenges, as it revealed some problems that occurred during the data collection process. In fact, we found your tutorials to be instrumental in resolving these issues and cited them in our paper.

For instance, visual images were blurred in some cases because of the autofocus mode in the web camera. Considering that we recorded millions of frames, a manual search for blurred frames would have been an extremely difficult task. Fortunately, we had read your blog post “Blur Detection with OpenCV” which helped us to automate the process.

Also, we noticed that the thermal camera had frozen in some cases, such that the affected frames were not updated properly. We detected these cases by comparing consecutive frames and utilizing the Structural Similarity Index method, which was well explained in your “How-To: Python Compare Two Images” post.

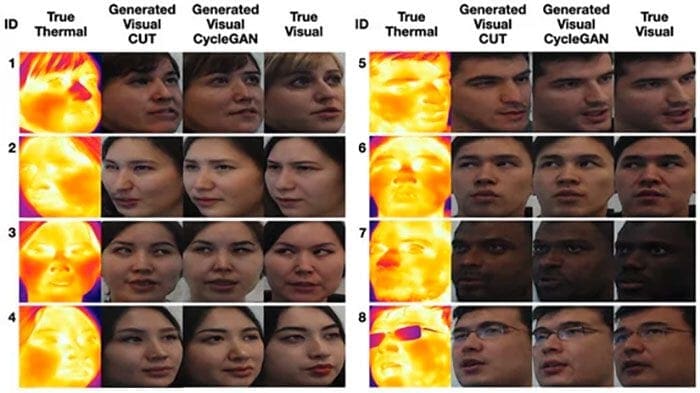

Adrian: I noticed that you used GANs to translate thermal images to “normal” RGB representations of the faces — can you tell us a bit more about that process and why you needed to use GANs here?

Askat: The state of the art of face recognition and facial landmarks prediction models were trained using only visual images. Therefore, we can’t apply them directly to thermal images. There are several possible options to solve this problem.

The first one is to collect a large amount of annotated thermal images and train the models from scratch. This process takes an enormous amount of time and human labour.

The second option is to combine visual and thermal images and use a transfer learning method. However, this method also requires annotating thermal images.

The option that we went for in the paper is to translate images from thermal to the visible domain using deep generative models. We opted for GANs because the state of the art shows that GANs are superior compared to autoencoders in generating realistic images. However, the downside is that GANs are extremely difficult to train.

Adrian: What are some of the more practical applications for which you envision using the SpeakingFaces dataset?

Askat: Overall we believe the dataset can be used for a wide range of human-computer interaction purposes. We hope our work will encourage others to integrate multimodal data into different recognition systems and make them more robust.

Adrian: What computer vision and deep learning tools, libraries, and packages did you utilize in your research?

Askat: The first step was data collection. We used a Logitech C920 Pro camera with dual mics and FLIR T540 thermal camera.

The official API for the thermal camera was written in MATLAB. Thus, I had to use MATLAB’s Computer VIsion and ROS ToolBoxes to acquire frames simultaneously from both cameras.

The next step was cleaning and preprocessing data. We mainly utilized Python and OpenCV, and heavily used your imutils package. It was very useful!

In the final step, we used PyTorch to build the baseline models for thermal-visual facial image translation and multimodal gender classification.

Adrian: If you had to pick the most challenging issue you faced when building the SpeakingFaces dataset, what would it be?

Askat: Definitely operating during the lockdown. When we shifted to the preprocessing stage, we realized that some of the collected image frames were corrupted. Unfortunately, due to quarantine measures, we couldn’t reshoot them, so we safely removed what we could and documented the rest.

Adrian: What are your next steps as a researcher? Are you going to continue working on SpeakingFaces or are you moving on to other projects?

Askat: Currently I am involved in several projects. Some of them are related to the SpeakingFaces. For instance, I am working on building a thermal-visual pose invariant face verification system based on a siamese neural network. In another project, we collected additional data using the same thermal camera but in the wild. Our goal is to build robust thermal face detection and landmark prediction models.

Adrian: What advice would you give to someone who wants to follow in your footsteps and become a successful published researcher, but doesn’t know how to get started?

Askat: I recommend finding an environment where you can work with people who share similar interests and enthusiasm. If you are passionate about the work, and you are working with interesting people, then it doesn’t really feel like a job!

Adrian: A few weeks ago we offered a $500 prize to the first member of PyImageSearch University who completed all courses, and you were the winner! Congratulations! Can you tell us about that experience? What did you learn inside PyImageSearch University and how were you able to complete all lessons so quickly?

Askat: Thank you again for the prize! It was a nice bonus to the great course.

I’ve been following the blog for some time: at the beginning of my journey into the field of computer vision, I went through most of the freely available lessons at PyImageSearch. I rewrote each piece of code, line by line. I strongly recommend this method, especially for beginners, because it not only allows you to understand the content, but also teaches you to program properly.

When you provided one week free access to the PyImageSearch University, I joined to watch the videos, because it is always good to refresh your knowledge. It was a great decision because the experiences I got from reading the blogs and watching the videos were completely different. I went through almost all topics in one week and had only 1 or 2 left. Nevertheless, I decided to purchase the annual membership because I liked the format, and also I wanted to support your hard work.

I definitely recommend PyImageSearch University for researchers and practitioners, especially in the areas of computer vision, deep learning, and OpenCV. The courses provide a strong baseline which is imperative if you wish to understand advanced concepts.

Adrian: If a PyImageSearch reader wants to connect with you, how can they do so?

Askat: If you wish to discuss some interesting ideas with me, you can contact me via Linkedin.

If you are interested in learning more about the SpeakingFaces dataset, here is a good overview video:

And here are some examples of our image translation model:

If you are interested to learn more about other active projects, we have pretty comprehensive material available on the website, and on the YouTube channel:

Summary

Today we interviewed Askat Kuzdeuov, a computer vision and deep learning researcher at the Institute of Smart Systems and Artificial Intelligence (ISSAI).

Askat’s latest work includes the SpeakingFaces dataset which can be used for human–computer interaction, biometric authentication, recognition systems, domain transfer, and speech recognition.

Perhaps one of the most notable contributions from the work is the accuracy of using GANs to generate RGB images from thermal camera inputs — an accurate GAN model would allow deep learning practitioners to reduce the number of sensors in a real-world application, potentially relying on just the thermal camera.

Make sure you give Askat’s work a read, it’s a wonderfully done and high-quality piece.

To be notified when future tutorials and interviews are published here on PyImageSearch, simply enter your email address in the form below!

Join the PyImageSearch Newsletter and Grab My FREE 17-page Resource Guide PDF

Enter your email address below to join the PyImageSearch Newsletter and download my FREE 17-page Resource Guide PDF on Computer Vision, OpenCV, and Deep Learning.

Comment section

Hey, Adrian Rosebrock here, author and creator of PyImageSearch. While I love hearing from readers, a couple years ago I made the tough decision to no longer offer 1:1 help over blog post comments.

At the time I was receiving 200+ emails per day and another 100+ blog post comments. I simply did not have the time to moderate and respond to them all, and the sheer volume of requests was taking a toll on me.

Instead, my goal is to do the most good for the computer vision, deep learning, and OpenCV community at large by focusing my time on authoring high-quality blog posts, tutorials, and books/courses.

If you need help learning computer vision and deep learning, I suggest you refer to my full catalog of books and courses — they have helped tens of thousands of developers, students, and researchers just like yourself learn Computer Vision, Deep Learning, and OpenCV.

Click here to browse my full catalog.