Imagine this:

You’ve built a brand new home out in the country, far from major cities. You need a break from all the hustle and bustle, and you want to bring yourself back to nature.

The house you’ve built is beautiful. It’s quiet at night, you can see constellations dancing in the sky, and you sleep well, knowing you’re going to wake up rested and rejuvenated.

… then, you wake up in the middle of the night. Smoke? Is there a fire?!

You run down the stairs and onto the porch. On the horizon, you see an orange glow, as if the entire sky is on fire. Smoke is billowing, like an angry storm cloud ready to suffocate you.

Sure enough, it’s a wildfire. And based on how the wind is blowing, it’s headed right for you.

Your beautiful, serene home has now turned into a combustible nightmare.

And you have to wonder … could computer vision have been used to detect this wildfire early on and thereby alert firefighters in the area?

No time to think about that now though, just grab what precious belongings you can, throw them in the back of the truck, and get the hell out of there.

As the raging fire bears down on your house, you take one last look in the rearview mirror and vow that you’ll one day figure out how to detect wildfires early on … and then you’re driving down a dirt road in search of civilization.

Scary story, right?

And while I’ve embellished it a bit for dramatic effect, it’s not unlike what David Bonn has experienced in his home out in Washington State … multiple times!

David has dedicated the past few years of his professional career to developing an early warning computer vision system to detect wildfires.

The system runs on a Raspberry Pi and connects to the internet via WiFi or a cellular modem. If a fire is detected, the RPi pings David, after which he can alert the fire department.

Additionally, the United States Patent Office just granted David multiple patents on his work!

It’s truly a pleasure to have David on the PyImageSearch blog today. He’s been a long-time reader, customer, and moderator in the community forums.

And most importantly, his work is helping prevent injury and loss of life in arguably one of the most notoriously hard natural disasters to detect early on.

To learn how David Bonn has created a computer vision system to detect wildfires, just keep reading the full interview!

An interview with David Bonn, computer vision and wildfire detection expert

Adrian: Hi David! Thank you for taking the time to do this interview. It’s a pleasure to have you on the PyImageSearch blog.

David: Thanks, Adrian. Always a pleasure to chat with you.

Adrian: Before we get started, can you tell us a bit about yourself? Where do you work, and what do you do?

David: I have been working off and on as a developer and engineer since college. In between those jobs, I had various “fun” jobs, as a Park Ranger, river guide, ski instructor, and fire lookout.

Along with a few other people, I founded WatchGuard Technologies in 1996, which became wildly successful and is, in fact, still around and independent today. After that adventure, I was semi-retired and traveled a great deal. During that same period, I spent a lot of time working on environmental education programs and other natural history projects.

These days I am trying to get a new company, Deepseek Labs, off the ground.

Adrian: What got you interested in studying computer vision and deep learning?

David: It became obvious to me about ten years ago that something big had changed with respect to neural networks and how they could work. They finally were on the path to having a toolkit that could solve practical problems. And what was even more interesting to me was that there was a large class of real problems that you likely couldn’t solve any better way.

A few years later, I started dabbling a bit with OpenCV, mostly by downloading books and going through their examples, and then I stumbled across your blog in 2015.

In 2014 and 2015, there were a series of large wildfires near my home. While my home was unscathed, almost 500 homes were lost in the combined fires, and several firefighters were killed. It occurred to me that there was a huge unsolved problem here, and I wondered what I could do to solve it.

In late 2017 I found myself in one of those situations where one needs to take stock of their life and what they were doing with it. At the same time, I had many conversations with friends and neighbors about the wildfire problem and what tools we would need to adapt to this new situation.

One thing that popped into my head during those conversations was (approximately), “gosh, couldn’t I make something that would detect fires and give people a few minutes warning to flee for their lives?” So, I resolved to learn enough about computer vision and deep learning to figure out if such a thing was even possible.

Usually, I find that if you wish to master a new skill, it helps a great deal if you have a project (or a set of goals) in mind that needs that new skill to help push you along. So my project for mastering computer vision and deep learning was to build a simple but practical fire detection system.

Adrian: One of my first memories of interacting with you was in the PyImageSearch community forums, where you were discussing fire/smoke detection and how that was such a big concern where you live. How do those wildfires start, and why is early detection so important?

David: Every fire is different. Right now, there are four substantial fires within thirty miles of my home. Three were started by lightning, and one was caused by a person working on an irrigation pump. Typically, most “wildfires” in the United States happen in fairly developed areas and are caused by human activity. That might be anything from a power line shorting on a tree branch to a vehicle idling in dry grass to somebody carelessly cooking hot dogs on a campfire.

Early detection is very important, both from a cost and public safety perspective. A small fire might cost a few thousand dollars to suppress. A large fire can easily run into the tens of millions of dollars. The Cub Creek 2 Fire, near my home, had over 800 people fighting the fire at its peak. A small crew in a brush truck might cost $1000 per day, and a large helicopter typically runs $8000 per hour. Those costs add up quickly.

Also, while most anyone can safely put out a campfire with a shovel and bucket of water, fighting a large fire is more like fighting a weather phenomenon. You might be able to slow it down or steer it a little bit, but you aren’t likely to stop it or completely suppress it.

Chances are the fires burning near me will still be burning, somewhere, until the snow starts falling in November. But, with a lot of the brush cleared out by the fires, the skiing is likely to be great this winter!

Another big reason to emphasize early detection is that many “wildfires” start in areas that people live in. Early detection can save lives if it gives people a few minutes to evacuate safely. Approximately 4.5 million homes in the Western USA and Canada are at high or extreme risk for wildfires.

Adrian: A few weeks ago, your own home was affected by a wildfire. Can you tell us about what happened?

David: I can tell you what happened from my perspective.

An important bit of context: I live in north central Washington State, which despite Washington’s reputation, is a pretty dry place, and summers can often be quite warm. It has been an extremely dry spring (most of the eastern part of the state is in a serious drought), and we had an incredible heat wave in late June. So vegetation was incredibly dry. How dry? Well, fire researchers take core samples from trees to evaluate fuel dryness. Core samples taken in my area two weeks before the fire found that living trees were dryer than kiln-dried lumber with a moisture content of about 2%. Your typical sheet of paper has a 3% moisture content.

On Friday, July 16th, I was at home and inside during the heat of the day. About 1:45 pm, I looked outside and noticed an ominous column of smoke just to the south. At that point, I went out, and there was a pretty strong wind blowing from the direction of the smoke.

At that point, a whole lot of practice and planning kicked in. I quickly closed up all of the windows (most were already closed). There was a bag of clothes and a box of documents and hard drives in the entry that I quickly loaded into the truck. Then I got all four dogs and loaded them in the truck as well. After a long last look at the house, I headed down the hill, and at the same time, started texting my neighbors about the fire and encouraging them to get out of there.

At this point, I and all of my neighbors got very lucky. There was another large fire in the area, and they immediately transferred fire crews and aircraft to this new fire. Hence, within an hour, there were several aircraft and around 100 firefighters on the scene (by the time the fire crews were on the scene, the fire was already estimated to be 1000 acres in size).

There is also a heavy equipment operator very close by, and even before all the firefighters were there, he was cutting firelines all over the place with a bulldozer.

All that quick action by my neighbors and firefighters produced a near-miraculous result: only a few buildings were lost, and a few others had minor damage. And nobody was injured or killed.

Despite a county-wide emergency warning system, none of us had any warning at all — the warnings from the county system got to my phone about 2:30 pm. If the exact series of events had happened at 1:45 am rather than 1:45 pm, things would have been tragically worse, certainly in terms of loss of homes and property and likely in terms of lost lives as well.

Interestingly, I had a prototype fire-detection system up and running outside while this all happened. By the time it detected a fire, I was quite a distance away, of course. Unfortunately, by the time it detected a fire, WiFi was out (power went out at my house when I evacuated and wasn’t restored until late the next day). As a result, I was unable to save any of the detection images. In addition, the detector itself was running on a Goal Zero battery pack.

Adrian: You and your company have developed a fire detection system that can be used in rural areas. Can you tell us about the solution? How does it work?

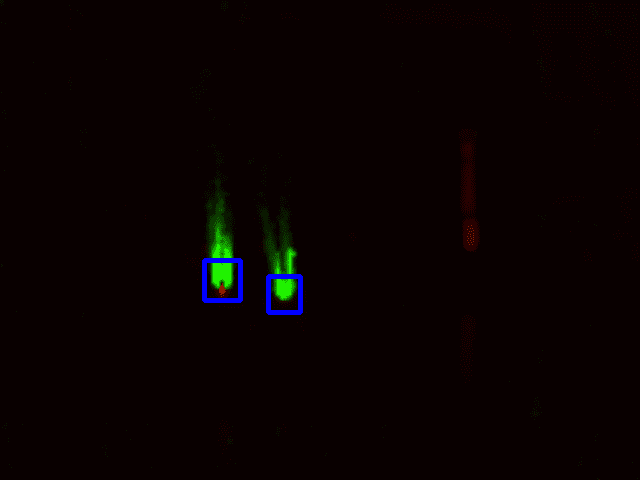

David: The 30-second answer is that I use a thermal camera (FLIR Lepton 3) and basic OpenCV image processing functions to find good candidate regions. I pass those regions to another program that inspects them with an optical camera and then passes slices of the optical image to a binary classifier.

The longer answer is that the thermal camera looks for two things close together: a hot spot (which is a very bright part of the thermal image) and a region of turbulent motion. So, if I can find turbulent motion close to a very hot spot and the turbulent motion is mostly above the hot spot (remember that hot air rises), the area where the hot spot and turbulent motion overlap (or where they overlap if suitably dilated) is a likely location to find flames.

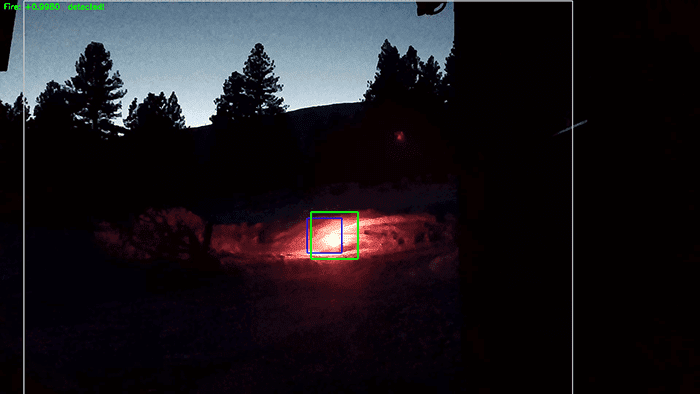
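To make the thermal heuristic above concrete, here is a minimal sketch. It is not David's patented implementation. It assumes that simple frame differencing is an acceptable stand-in for "turbulent motion", and the function names, thresholds, and dilation radius are all hypothetical. It uses plain NumPy so it can run anywhere.

```python
import numpy as np


def dilate(mask: np.ndarray, radius: int) -> np.ndarray:
    """Binary dilation with a square window, built from shifted copies."""
    h, w = mask.shape
    padded = np.pad(mask, radius, mode="constant", constant_values=False)
    out = np.zeros_like(mask)
    for dy in range(2 * radius + 1):
        for dx in range(2 * radius + 1):
            out |= padded[dy:dy + h, dx:dx + w]
    return out


def candidate_region(prev, curr, hot_thresh, motion_thresh, radius=2):
    """Return (x0, y0, x1, y1) of a likely flame region, or None.

    prev, curr: consecutive 16-bit thermal frames (2-D uint16 arrays).
    hot_thresh, motion_thresh: hypothetical thresholds in raw sensor counts.
    """
    hot = curr >= hot_thresh
    # Cast to a signed type first: uint16 subtraction would wrap around.
    diff = np.abs(curr.astype(np.int32) - prev.astype(np.int32))
    moving = diff >= motion_thresh  # assumption: frame difference ~ turbulence
    if not hot.any() or not moving.any():
        return None
    # Hot air rises, so the motion should sit mostly above the hot spot.
    # Row indices grow downward, so "above" means a smaller mean row.
    hot_rows = np.nonzero(hot)[0]
    motion_rows = np.nonzero(moving)[0]
    if motion_rows.mean() > hot_rows.mean():
        return None
    # Keep only the area where the dilated masks overlap.
    overlap = dilate(hot, radius) & dilate(moving, radius)
    if not overlap.any():
        return None
    ys, xs = np.nonzero(overlap)
    return int(xs.min()), int(ys.min()), int(xs.max()) + 1, int(ys.max()) + 1
```

The returned box is in thermal-image pixel coordinates, with the right and bottom edges exclusive. A real detector would need a more robust motion measure than a single frame difference.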

The optical algorithm then takes that candidate region and translates its coordinates to the optical camera’s coordinate system. Thus, by carefully looking at the candidate region, I can choose a good slice of the optical image suitable for passing to a well-trained binary classifier.

A big discovery (well, for me, it was big) I made was that, with a great dataset and a good network, you could get 96-97% accuracy with a full-frame image, but if your classifier was looking at a well-chosen slice of an image, the accuracy went up to over 99%. I suspect that with a carefully constructed ensemble, you could reach far higher accuracies.
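The coordinate translation and slice selection could look something like the sketch below. It assumes the two cameras are aligned well enough that a per-axis scale and offset maps thermal pixels to optical pixels. Real hardware may need a proper calibration. The function names, the 224-pixel slice size, and the 1280×960 optical resolution in the usage note are illustrative assumptions, not details from the interview.

```python
def thermal_to_optical(box, scale_x, scale_y, offset_x=0.0, offset_y=0.0):
    """Map a thermal-image box (x0, y0, x1, y1) into optical-image pixels.

    Assumes a simple scale-and-offset relationship between the cameras.
    """
    x0, y0, x1, y1 = box
    return (x0 * scale_x + offset_x, y0 * scale_y + offset_y,
            x1 * scale_x + offset_x, y1 * scale_y + offset_y)


def classifier_slice(box, frame_w, frame_h, size=224):
    """Return a size x size crop centered on the box, clamped to the frame.

    Assumes the optical frame is at least `size` pixels in each dimension.
    """
    x0, y0, x1, y1 = box
    cx = (x0 + x1) / 2
    cy = (y0 + y1) / 2
    left = int(round(cx - size / 2))
    top = int(round(cy - size / 2))
    # Shift the crop back inside the frame if it hangs over an edge.
    left = max(0, min(left, frame_w - size))
    top = max(0, min(top, frame_h - size))
    return left, top, left + size, top + size
```

For a 160×120 thermal frame and a hypothetical 1280×960 optical frame, both scales would be 8, so `classifier_slice(thermal_to_optical(box, 8, 8), 1280, 960)` gives the crop to hand to the classifier.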

If both the thermal algorithm and the classifier agree there is a fire, the system goes into an alarm state.

By itself, this system gets an accuracy of over 99.99%, which translates to a “mistake” every 3-5 days when operating in sample (outdoors). Out of sample (e.g., in my kitchen with a gas range), it makes 4 or 5 mistakes per day. Higher accuracies would likely be possible with frame averaging or ensembles. And since the thermal images have a very low resolution (160×120) and frame rate (9 fps), most of the time the system doesn’t have to work very hard to obtain those impressive results.
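As a rough consistency check on those figures, the arithmetic below relates an error rate to the time between mistakes. It assumes the accuracy is measured per detection decision and takes the error rate as exactly 1 in 10,000. Since the quoted accuracy is "over" 99.99%, the results are lower bounds on how often the system decides. The function name is hypothetical.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def decisions_per_day(error_rate, days_between_mistakes):
    """Decisions per day implied by an error rate and a mistake interval."""
    mistakes_per_day = 1.0 / days_between_mistakes
    return mistakes_per_day / error_rate


error_rate = 1e-4  # assumption: exactly 99.99% per-decision accuracy

for days in (3, 5):
    per_day = decisions_per_day(error_rate, days)
    seconds_apart = SECONDS_PER_DAY / per_day
    print(f"one mistake every {days} days: "
          f"{per_day:.0f} decisions/day, one every {seconds_apart:.1f} s")
```

Under these assumptions, a mistake every 3 to 5 days corresponds to roughly 2,000 to 3,300 decisions per day, or one decision every 26 to 43 seconds.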

The approach I use is far from perfect and still struggles in some situations. Hot exhaust from internal combustion engines, especially heavy equipment or farm equipment, frequently confuses the thermal algorithm. The classifier struggles with brightly lit subjects and even more with brightly backlit subjects. Brightly colored birds close to the sensors have produced confusing results at times. These problems are being mitigated over time, often by collecting more representative training data.

I have applied for patents on many parts of this system, and on August 11, I was informed that those patents were allowed. After some more fees and paperwork, those patents will formally be issued and published, and then I can share more details about how the system works.

Adrian: What hardware does your fire detection system run on? Do you need a laptop/desktop, or are you using something like a Raspberry Pi, Jetson Nano, etc.?

David: The core fire detector runs on a Raspberry Pi. The reference implementation right now is a Pi 3B+ and works fine with detection times on the order of 1-2 seconds. As implemented right now, the system connects to the internet either through WiFi or via a cellular modem. My preference down the road is to use the cellular modem, as we can self-configure the system and have it up and running without any end-user setup.

I am booting the Pi read-only. This makes the system much more robust in the face of power outages and other failures, but it isn’t possible to save detection images directly on the SD card.

Other parts of this system will run on the cloud, and there will also be a client (either a web page or an app) that can show you the detectors deployed, where they are deployed, and what their status is.

Adrian: What is the hardest part of combining an infrared camera with “standard” image processing and OpenCV code? What roadblocks did you encounter?

David: The big obstacle for me was getting the hardware to work at all. There were many hardware barriers (including unsupported and deprecated parts) and a lot of obsolete code on GitHub that I had to work through. I finally found some halfway decent code that let me at least get started. For example, the normal Lepton breakout board uses I2C, and so you have a mess of wires connected to the GPIO bus on your Raspberry Pi. I got all that to work, but it wasn’t the best environment for exploring a better flame detection algorithm.

My rate of progress dramatically increased when I switched over to using a Purethermal 2 USB module. This was a huge improvement because, with very little effort, I could experiment with image processing algorithms for the thermal camera on a laptop. So rather than upload code to the Pi and reboot the Pi and look at another display to see the output, I could just have a code-test-debug cycle on my laptop and work on the software at my desk, on the kitchen table, or at a bakery. So I rapidly got a lot more time on the system and learned more in a month than I had in the previous six months.

Once you are talking to the hardware, the real work begins. The Lepton is a remarkably sensitive instrument. It has only 160×120 spatial resolution, but each pixel is 16 bits deep, and a change of one count in a pixel value represents a temperature change of approximately 0.05 °C. That is sensitive enough that if you walk barefoot on a cold floor, your footprints will “glow” in the thermal image for several minutes. On the other hand, a lot of OpenCV functions don’t really like a 16-bit grayscale image, so you need to be careful which functions you call, and you might need to use NumPy for some operations.
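One example of the care that 16-bit frames need is plain subtraction. The sketch below uses NumPy and assumes the raw frame arrives as a `uint16` array in which one count is about 0.05 °C, as quoted above. The constant and function name are hypothetical.

```python
import numpy as np

COUNTS_PER_DEG_C = 20.0  # assumption: 1 count is roughly 0.05 degrees C


def temperature_delta_c(frame_a, frame_b):
    """Per-pixel temperature change from frame_a to frame_b, in degrees C.

    Both frames are uint16 arrays of raw counts. They are cast to a signed
    type before subtracting, because uint16 arithmetic wraps around.
    """
    diff = frame_b.astype(np.int32) - frame_a.astype(np.int32)
    return diff / COUNTS_PER_DEG_C


a = np.array([30000], dtype=np.uint16)
b = np.array([29980], dtype=np.uint16)

wrapped = b - a                   # unsigned subtraction wraps to a huge value
safe = temperature_delta_c(a, b)  # signed subtraction gives a small cooling
```

Here the pixel cooled by 20 counts, which is about one degree. The naive unsigned subtraction instead produces a large positive number, which a hot-spot threshold could easily mistake for intense heating.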

The final thing that you need to watch out for is that thermal images have extremely low contrast. So, unless you enhance them somehow (usually normalizing a histogram is enough), you won’t be able to see anything interesting when you display the images.
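A simple way to make a raw thermal frame viewable is to stretch its used range of values across the 8-bit display range. The sketch below uses a percentile stretch in NumPy, which is one common choice and not necessarily the enhancement David uses. The function name and percentile defaults are hypothetical. OpenCV's `cv2.normalize` with `cv2.NORM_MINMAX` does a similar min-max rescale.

```python
import numpy as np


def stretch_to_8bit(frame16, low_pct=1.0, high_pct=99.0):
    """Rescale a 16-bit thermal frame to uint8 for display.

    Values at or below the low percentile map to 0 and values at or above
    the high percentile map to 255, so a few outliers cannot flatten the
    rest of the image.
    """
    lo, hi = np.percentile(frame16, [low_pct, high_pct])
    if hi <= lo:
        # Completely flat frame: there is nothing to stretch.
        return np.zeros(frame16.shape, dtype=np.uint8)
    scaled = (frame16.astype(np.float32) - lo) / (hi - lo)
    return (np.clip(scaled, 0.0, 1.0) * 255.0).astype(np.uint8)
```

The 8-bit result is only for viewing. Any thresholding on temperature should still use the original 16-bit counts.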

Adrian: What do you think you learned from building the fire detection system?

David: Short answer: a lot!

I think the biggest takeaway (so far) is that you should expect to spend a lot of time building great datasets. You shouldn’t expect it to be easy, and you should expect that there will be a lot of trial and error and learning experiences along the way. However, once you start building a great dataset and have a framework in place that lets you continuously improve it, you are in a fantastic place.

In your writing, you talk a lot about how valuable a high-quality dataset is. That is one hundred percent true. However, I’d go further and say that you are creating something even more valuable if you build a framework and processes that let you easily grow and extend that dataset.

Adrian: What computer vision and deep learning tools, libraries, and packages did you utilize in building the fire detection system?

David: I used OpenCV, TensorFlow, Keras, the picamera module, and your imutils library.

Adrian: What are your next steps in the project?

David: We are doing two things right now: the first is we have a few prototypes, and we are getting time on them and learning both the limitations to the approach we are using and how to make it much better. At the same time, we are talking with potential customers, showing them what we have and also talking about what we have in mind, and trying to figure out how to solve their problems.

One big thing we have learned is that this whole idea of fire detectors works much better if you can deploy a lot of them (at least dozens, possibly hundreds) around a community. Then you can give people a web site or an app that lets them answer the question they care about: where is the fire?

Adrian: You’ve been a long-time reader and customer of PyImageSearch. Thank you for supporting us! (And an even bigger thank you for being a wonderfully helpful moderator in the community forums.) How has PyImageSearch helped you with your work and your company?

David: It greatly helps that I can get access to wiser and more experienced people (you and Sayak Paul have both been immensely helpful) who can help me out when I get stuck (when I switched over to TensorFlow 2.x and tf.data, I got all fouled up several times, and you and Sayak both made a huge difference in helping me puzzle out what went wrong).

The other thing I enjoy about participating in PyImageSearch is that helping others is a great way to sharpen my own skills and learn new things. So it has been a great experience all around for me.

Adrian: If a PyImageSearch reader wants to connect with you, how can they do so?

David: The best way to connect with me is on my LinkedIn at David Bonn.

Summary

Today we interviewed David Bonn, an expert in using computer vision to detect wildfires.

David has built a patented computer vision system that can successfully detect wildfires using:

- Raspberry Pi

- FLIR Lepton thermal camera

- Cellular modem

- Proprietary OpenCV and deep learning code

The system has already been used to detect wildfires and alert firefighters, preventing injury and loss of life.

Early wildfire detection is yet another example of how computer vision and deep learning are revolutionizing nearly every facet of our lives. David’s work demonstrates how much we, as computer vision practitioners, can impact the world.

I wish David the best of luck as he continues to develop this system — it truly has the potential to save lives, help the environment, and prevent tremendous property damage.
